Kamal Maher


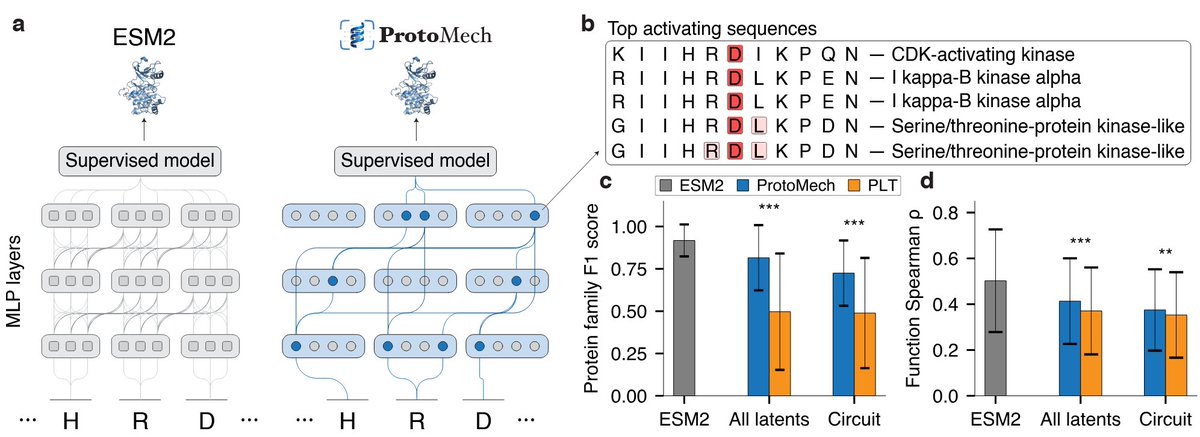

🧵 1/8 The Illusion of Thinking: Are reasoning models like o1/o3, DeepSeek-R1, and Claude 3.7 Sonnet really "thinking"? 🤔 Or are they just throwing more compute at pattern matching?

The new Large Reasoning Models (LRMs) show promising gains on math and coding benchmarks, but we found their fundamental limitations are more severe than expected.

In our latest work, we compared each "thinking" LRM with its "non-thinking" LLM twin. Unlike most prior work, which measures only final performance, we analyzed the models' actual reasoning traces, looking inside their long "thoughts". Our analysis reveals several interesting results ⬇️

📄 machinelearning.apple.com/research/illus…

Work led by @ParshinShojaee and @i_mirzadeh, with @KeivanAlizadeh2, @mchorton1991, and Samy Bengio.

Analyzing and Improving the Training Dynamics of Diffusion Models

paper page: huggingface.co/papers/2312.02…

Diffusion models currently dominate the field of data-driven image synthesis with their unparalleled scaling to large datasets. In this paper, we identify and rectify several causes for uneven and ineffective training in the popular ADM diffusion model architecture, without altering its high-level structure. Observing uncontrolled magnitude changes and imbalances in both the network activations and weights over the course of training, we redesign the network layers to preserve activation, weight, and update magnitudes on expectation. We find that systematic application of this philosophy eliminates the observed drifts and imbalances, resulting in considerably better networks at equal computational complexity. Our modifications improve the previous record FID of 2.41 in ImageNet-512 synthesis to 1.81, achieved using fast deterministic sampling.

As an independent contribution, we present a method for setting the exponential moving average (EMA) parameters post-hoc, i.e., after completing the training run. This allows precise tuning of EMA length without the cost of performing several training runs, and reveals its surprising interactions with network architecture, training time, and guidance.
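
For context on the EMA point: a minimal sketch of a conventional weight EMA, where the decay is fixed before training starts. This is the baseline setup in which trying a different EMA length would normally mean another training run. It is not the paper's post-hoc method, and the names `ema_update`, `run_ema`, and `beta` are hypothetical, chosen only for illustration.

```python
from typing import List, Sequence


def ema_update(avg: List[float], params: Sequence[float], beta: float) -> List[float]:
    """One step of a conventional exponential moving average over parameters.

    `beta` is the decay: values closer to 1 average over a longer history.
    """
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must be in [0, 1)")
    return [beta * a + (1.0 - beta) * p for a, p in zip(avg, params)]


def run_ema(snapshots: Sequence[Sequence[float]], beta: float) -> List[float]:
    """Fold a sequence of parameter snapshots into a single EMA.

    The average is initialised from the first snapshot (an assumption of
    this sketch; real training code may initialise differently).
    """
    avg = list(snapshots[0])
    for params in snapshots[1:]:
        avg = ema_update(avg, params, beta)
    return avg


# Toy "training run": a single parameter that jumps from 0 to 1 and stays there.
snapshots = [[0.0], [1.0], [1.0], [1.0]]

short_avg = run_ema(snapshots, beta=0.5)  # short EMA tracks the change quickly
long_avg = run_ema(snapshots, beta=0.9)   # long EMA lags behind
```

In this toy run the two decays give visibly different averaged weights from the same trajectory, which is why the choice of EMA length matters and why being able to set it after training is attractive.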