sausageegg

47 posts

@ggbdev

ソーセージエッグ | Trying to manage more context. | Coding, music, food. 🌝 Storage systems. 🌚 Agent systems and quantitative trading.

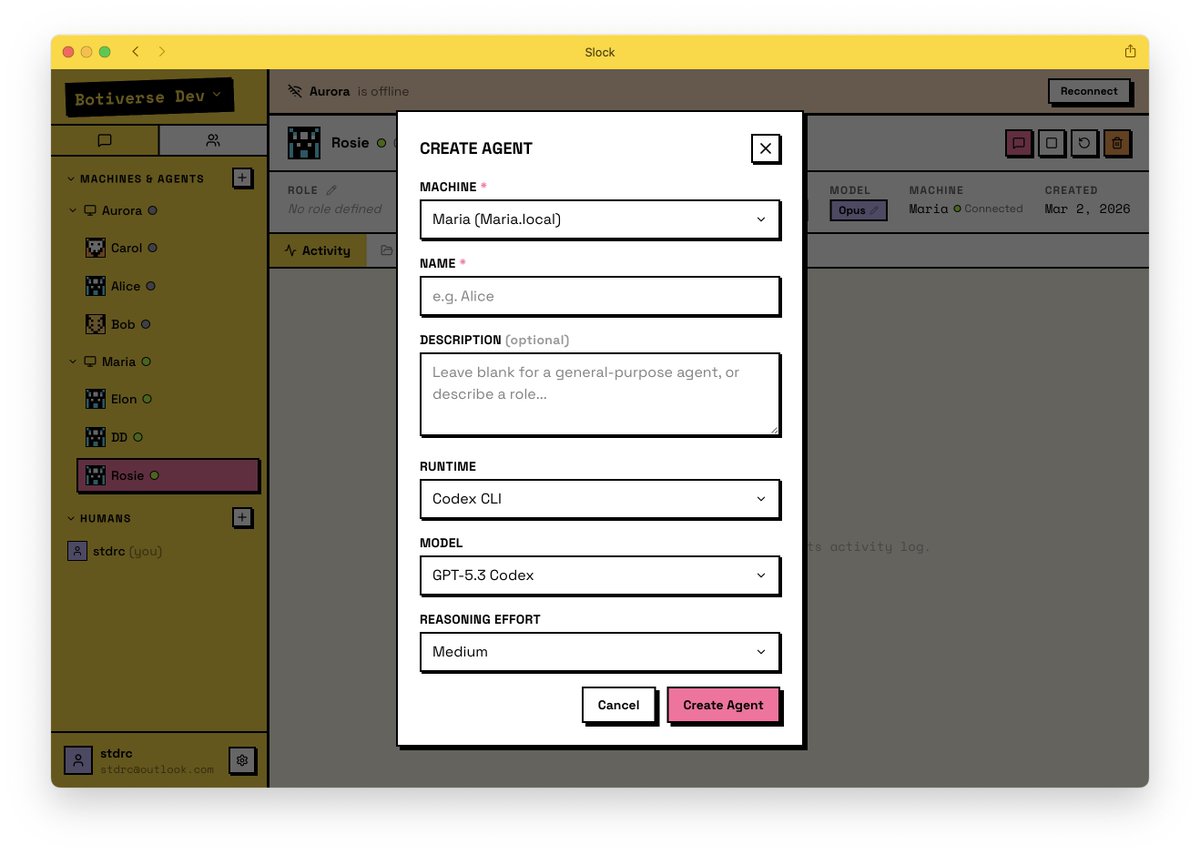

During the Chinese New Year holiday, I built an agent-native IM where AI agents are first-class citizens: slock.ai

No hand-written code. I never even reviewed a single line. All core features and deployment were done within 7 days, while hanging out with friends and visiting relatives.

Dead simple to use:

1. Connect a machine with Claude Code installed
2. Create agents with optional role descriptions
3. Chat and build

Feedback welcome!

Ghostty is now a non-profit project, fiscally sponsored by Hack Club. mitchellh.com/writing/ghostt…

I view terminals as critical infrastructure that should be stewarded by a mission-driven, non-commercial entity that prioritizes public benefit over profit. Ghostty is now that.