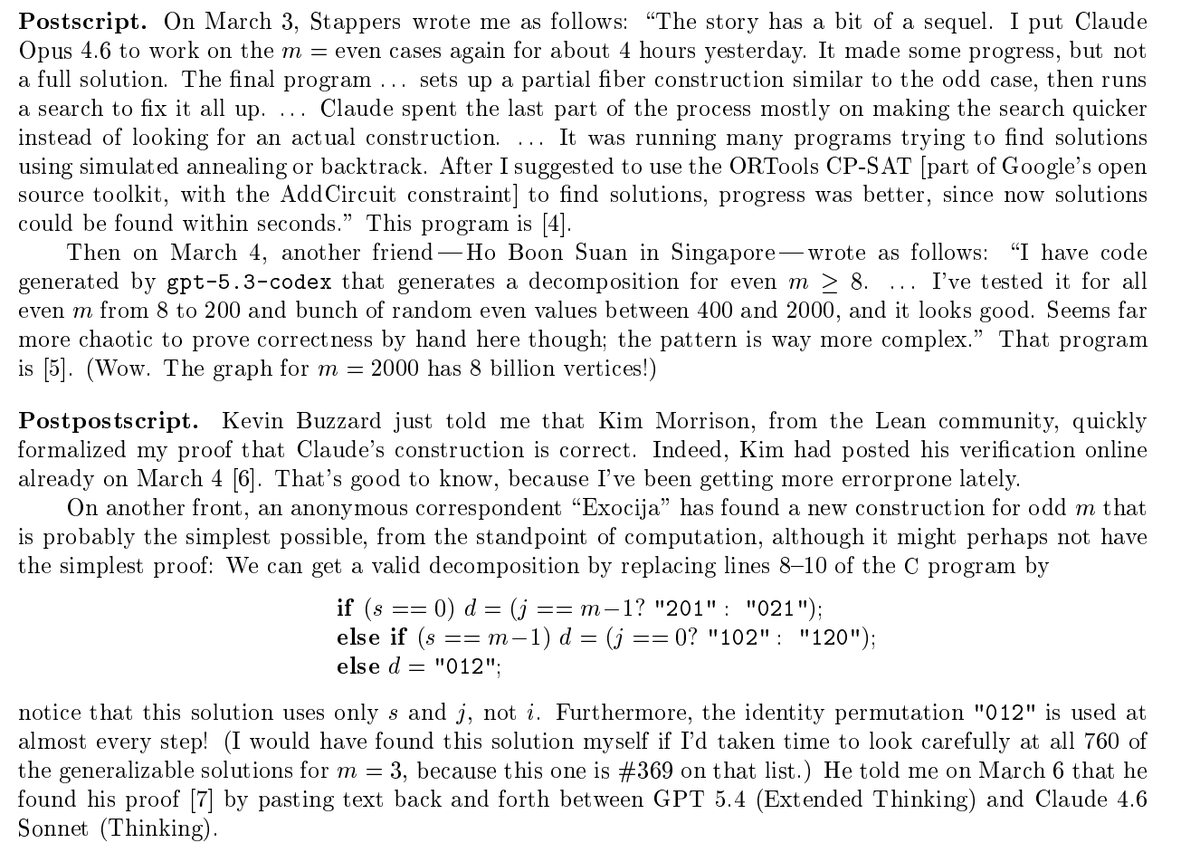

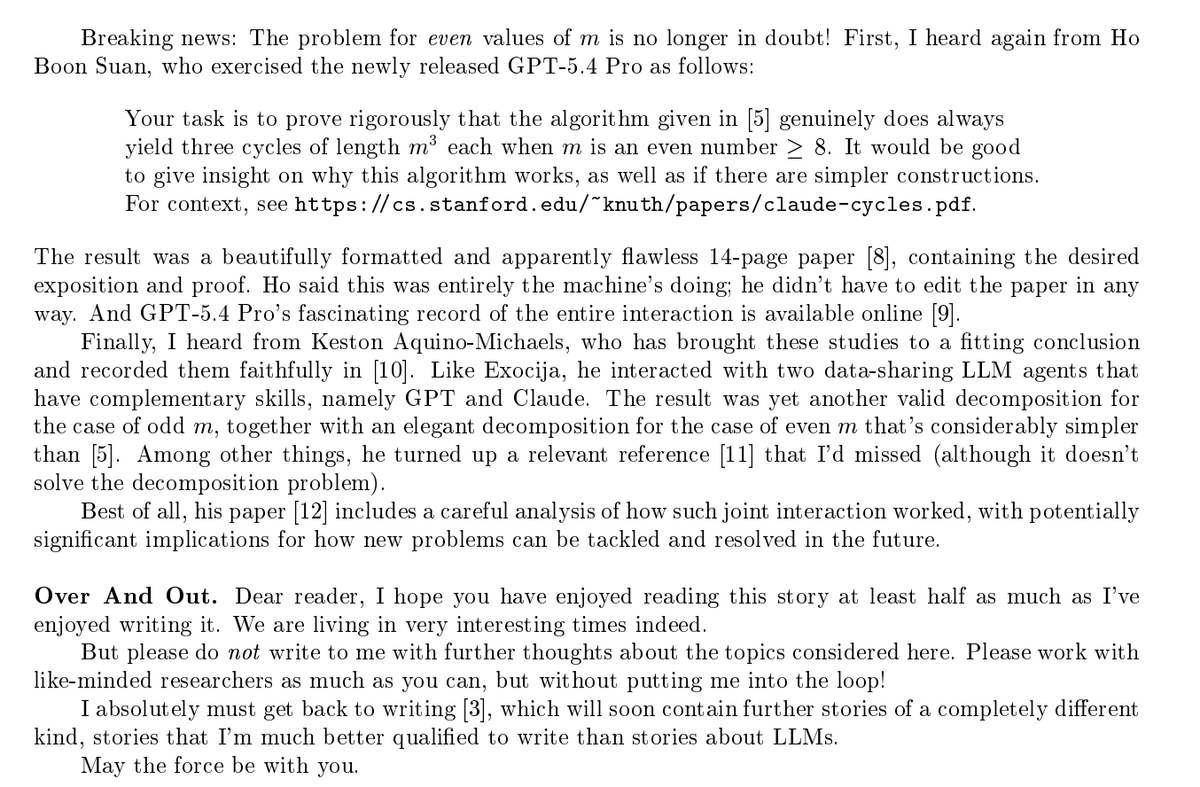

Prof. Donald Knuth opened his new paper with "Shock! Shock!"

Claude Opus 4.6 had just solved an open problem he'd been working on for weeks: a graph decomposition conjecture from The Art of Computer Programming. He named the paper "Claude's Cycles."

31 explorations. ~1 hour.

Knuth read the output, wrote the formal proof, and closed with: "It seems I'll have to revise my opinions about generative AI one of these days."

The man who wrote the bible of computer science just said that. In a paper named after an AI.

Paper: cs.stanford.edu/~knuth/papers/…