Charlie Hou

@_emliu When I was a PhD student I also found the ROI was usually good! But sometimes the ROI is not so great. I once tried applying for a Cerebras grant ~2021 and stopped when I realized adapting to their system would be too hard. Though maybe their system is more user-friendly now!

wrote a guide on getting compute grants as a student, something I wish I had done more at the beginning of my PhD. It's honestly one of the highest ROI things you can do as a student (we've gotten 100k+ GPU hrs for roughly 2 weeks of writing work).

nightingal3.github.io/blog/2026/04/1…

@tenderizzation I failed a mech eng exam because I brainfarted a decimal comma by one place, and did all the rest of the exam correctly with the wrong number. It was all graded as wrong, because "had you built this, people would've died"

Taught me to do sanity checks like a paranoid and not kill people

i had a linear algebra prof who didn’t give partial credit on exams, students hated him but it kinda worked

will brown@willccbb

everyone goes through a process reward models phase

Sharing a super simple, user-owned memory module we've been playing around with: nanomem

The basic idea is to treat memory as a pure intelligence problem: ingestion, structuring, and (selective) retrieval are all just LLM calls & agent loops on an on-device markdown file tree. Each file lists a set of facts w/ metadata (timestamp, confidence, source, etc.); no embeddings/RAG/training of any kind.

For example:

- `nanomem add ` starts an agent loop to walk the tree, read relevant files, and edit.

- `nanomem retrieve ` walks the tree and returns a single summary string (possibly assembled from many subtrees) related to the query.
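
To make the file-tree idea concrete, here is a minimal Python sketch of the storage side only. It is not nanomem's code: the `Fact` fields mirror the metadata listed above, but the line format, the function names `add_fact` and `retrieve`, and the example facts are all hypothetical, and a plain path-prefix filter stands in for the LLM agent loop that decides which files to read.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile


@dataclass
class Fact:
    """One remembered fact plus the metadata fields named in the post."""
    text: str
    timestamp: str
    confidence: float
    source: str

    def to_line(self) -> str:
        # Hypothetical on-disk format: one markdown bullet per fact.
        return (f"- {self.text} | timestamp={self.timestamp} "
                f"| confidence={self.confidence} | source={self.source}")


def add_fact(root: Path, rel_path: str, fact: Fact) -> Path:
    """Append a fact to a markdown file in the tree, creating folders as needed."""
    path = root / rel_path
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as handle:
        handle.write(fact.to_line() + "\n")
    return path


def retrieve(root: Path, allowed_prefixes: list[str]) -> str:
    """Walk the tree and return one string built only from allowed subtrees.

    Assumption: a prefix filter replaces the LLM-driven selection step.
    """
    parts = []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root).as_posix()
        if any(rel.startswith(prefix) for prefix in allowed_prefixes):
            parts.append(f"# {rel}")
            parts.extend(path.read_text(encoding="utf-8").splitlines())
    return "\n".join(parts)


def demo() -> str:
    """Store two made-up facts in separate subtrees, then disclose only one."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        add_fact(root, "hobbies/snowboard.md",
                 Fact("Prefers powder days", "2026-01-05", 0.9, "chat"))
        add_fact(root, "tax/residency.md",
                 Fact("Files as a state resident", "2026-01-06", 0.7, "chat"))
        return retrieve(root, ["hobbies/"])


if __name__ == "__main__":
    print(demo())
```

Because each topic lives in its own file, restricting retrieval to `hobbies/` keeps everything under `tax/` out of the returned string.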

What’s nice about this approach is that the memory system is, by construction:

1. partitionable (human/agents can easily separate `hobbies/snowboard.md` from `tax/residency.md` for data minimization + relevance)

2. portable and user-owned (it’s just text files)

3. interpretable (you know exactly what’s written and you can manually edit)

4. forward-compatible (future models can read memory files just the same, and memory quality/speed improves as models get better)

5. modularized (you can optimize ingestion/retrieval/compaction prompts separately)

Privacy & utility. I'm most excited about the ability to partition + selectively disclose memory at inference-time. Selective disclosure helps with both privacy (principle of least privilege & “need-to-know”) and utility (as too much context for a query can harm answer quality).

Composability. An inference-time memory module means: (1) you can run such a module with confidential inference (LLMs on TEEs) for provable privacy, and (2) you can selectively disclose context over unlinkable inference of remote models (demo below).

We built nanomem as part of the Open Anonymity project (openanonymity.ai), but it’s meant to be a standalone module for humans and agents (e.g., you can write a SKILL for using the CLI tool). Still polishing the rough edges!

- GitHub (MIT): github.com/OpenAnonymity/…

- Blog: openanonymity.ai/blog/nanomem/

- Beta implementation in chat client soon: chat.openanonymity.ai

Work done with amazing project co-leads @amelia_kuang @cocozxu @erikchi !!

I recently started a new role as a Research Scientist at Google DeepMind!

Before looking ahead, I want to say a huge thank you to my former teammates at Meta. I'm incredibly grateful for the time we spent building together, and I know they will continue to build amazing things.

I’ve always admired DeepMind’s focus on building AI responsibly to benefit humanity. I'm really excited to collaborate with such a brilliant team and tackle some of the hardest foundational problems in our field.

Can't wait to get to work!

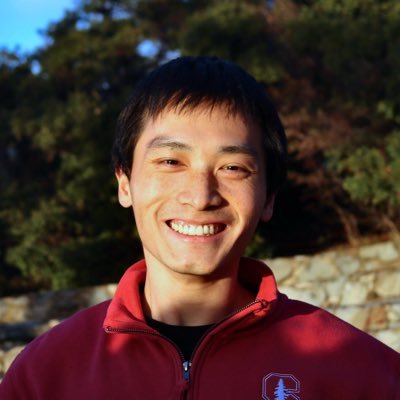

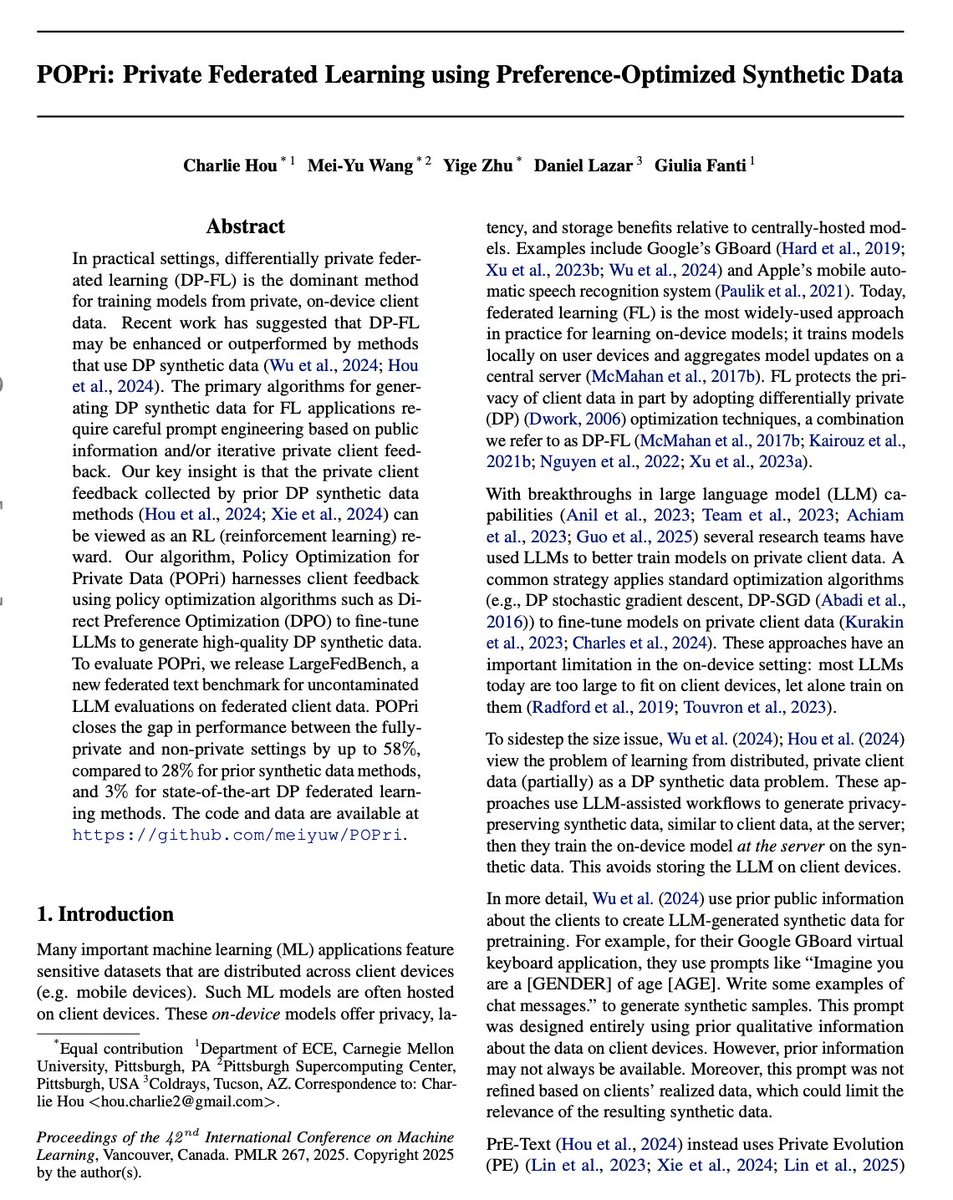

Happy to see Federated Learning going big with LLMs!

Charlie Hou@hou_char

Gave a talk at @OpenAI on our work 🌸 POPri “Policy Optimization for Private Data”. POPri is a huge improvement in synthetic data generation under security+privacy constraints! Learn more:

Pretty clever way to generate DP synthetic text!

Previously, private evolution used client feedback to improve synthetic data through in-context learning.

This paper uses the client feedback for DPO, thereby (i) using the 'negative' samples and (ii) finetuning rather than ICL.
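
A rough Python sketch of how scored samples could become DPO training pairs, under my own assumptions rather than the paper's exact recipe: the name `build_preference_pairs`, the best-versus-worst pairing rule, and the score format are hypothetical, and the privacy mechanism that would produce the scores is left out.

```python
from dataclasses import dataclass


@dataclass
class PreferencePair:
    """One DPO training example: a prompt with a preferred and a dispreferred output."""
    prompt: str
    chosen: str
    rejected: str


def build_preference_pairs(
    candidates: dict[str, list[tuple[str, float]]],
) -> list[PreferencePair]:
    """Turn per-prompt (sample, feedback score) lists into preference pairs.

    Assumption: higher score means clients judged the sample closer to
    their private data. The top sample becomes `chosen` and the bottom
    one becomes `rejected`, so the 'negative' samples are used too.
    """
    pairs = []
    for prompt, scored in candidates.items():
        if len(scored) < 2:
            continue  # need at least two samples to express a preference
        ranked = sorted(scored, key=lambda item: item[1], reverse=True)
        best_text, best_score = ranked[0]
        worst_text, worst_score = ranked[-1]
        if best_score == worst_score:
            continue  # no preference signal when all scores tie
        pairs.append(PreferencePair(prompt, best_text, worst_text))
    return pairs
```

Pairs in this prompt/chosen/rejected shape are what DPO finetuning consumes, whereas in-context learning would only place the high-scoring samples into the next prompt.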

Charlie Hou@hou_char

Gave a talk at @OpenAI on our work 🌸 POPri “Policy Optimization for Private Data”. POPri is a huge improvement in synthetic data generation under security+privacy constraints! Learn more:

Code: github.com/meiyuw/POPri

Paper: arxiv.org/abs/2504.16438

Huggingface papers: huggingface.co/papers/2504.16…

Datasets: huggingface.co/hazylavender

Thanks to the team: Mei-Yu Wang, Yige Zhu, Daniel Lazar, and @giuliacfanti

Gave a talk at @OpenAI on our work 🌸 POPri “Policy Optimization for Private Data”. POPri is a huge improvement in synthetic data generation under security+privacy constraints! Learn more:

Charlie Hou retweeted

I've been on the team building Grapevine since the beginning, and in the span of a few months our internal company GPT grew from a hacky experimental demo into my most-used work AI tool.

Phillip Wang@flippnflops

I’m excited today to share Grapevine, a system that makes it really easy for AI agents to search company knowledge across Slack, Notion, codebase, and more. The first app we’re launching with it is a company GPT that works remarkably well, better than any alternative today.
