Janmaarten Batstra

@interminded

--//tweets NL-EN --Innovative entrepreneur//gadget freak//politics//Money is a means, not an end//--

I spoke to Anthropic’s AI agent Claude about AI collecting massive amounts of personal data and how that information is being used to violate our privacy rights. What an AI agent says about the dangers of AI is shocking and should wake us up.

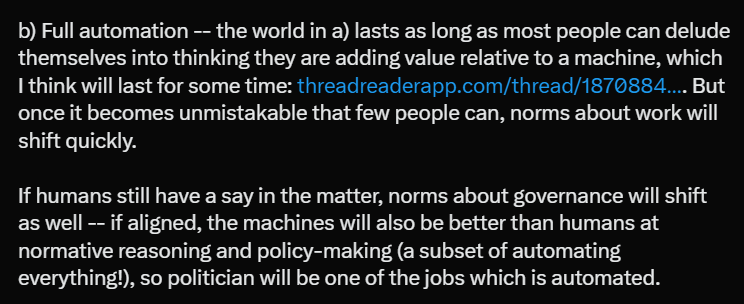

I think it's worth separating two worlds:

a) Partial automation -- AI automates some stuff but not others (like a supercharged version of past technology), with wages falling for some and increasing for others. In this world, I agree completely about disempowerment. Promises to prevent job loss will be hugely politically popular, and sometimes defensible for political economy reasons.

b) Full automation -- the world in a) lasts as long as most people can delude themselves into thinking they are adding value relative to a machine, which I think will last for some time: threadreaderapp.com/thread/1870884…. But once it becomes unmistakable that few people can, norms about work will shift quickly. If humans still have a say in the matter, norms about governance will shift as well -- if aligned, the machines will also be better than humans at normative reasoning and policy-making (a subset of automating everything!), so politician will be one of the jobs which is automated.

@jachiam0 Hey Joshua, I made that website (stoptherace.ai). Our ask is to stop developing new frontier models if the other major labs agree to do the same. The teams working on improving the capabilities of these models would move to narrow AI or alignment research instead. (1/)

A week from today, we will be at Anthropic, OpenAI, and xAI, demanding that leaders agree to a conditional AI pause. These companies are recklessly endangering all of our lives. Their excuse is that they can't pause unilaterally. So they must commit to pausing if others do.

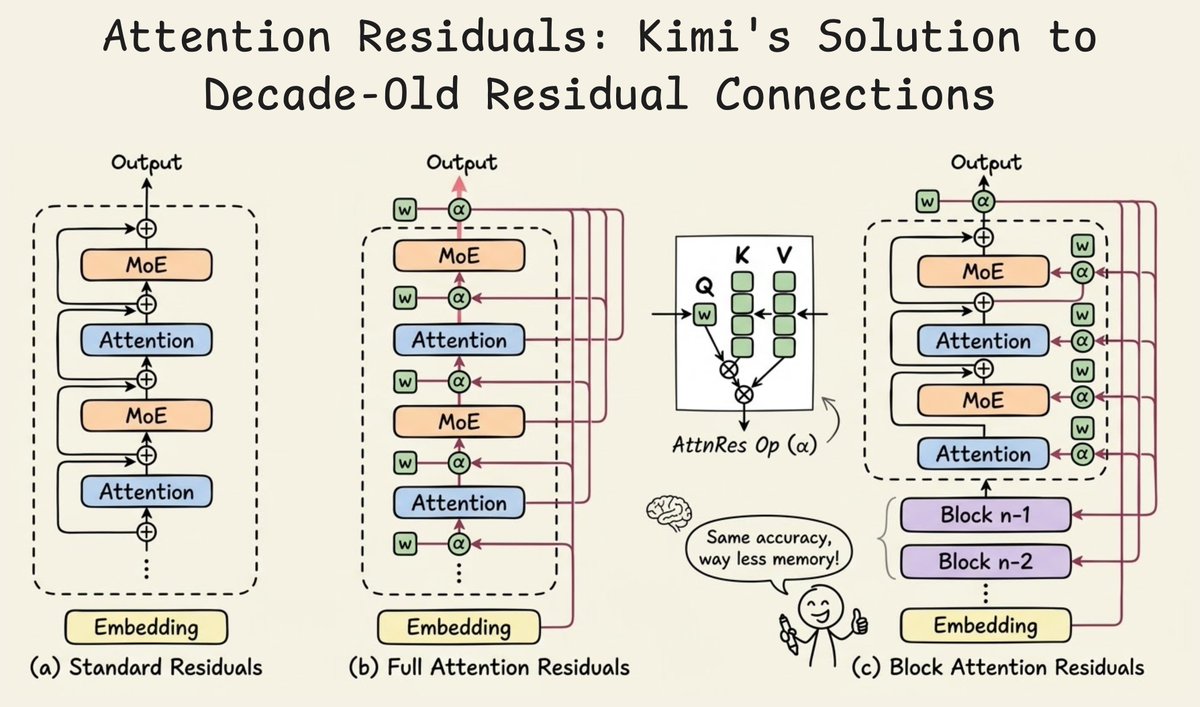

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.

🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.

🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.

🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗Full report: github.com/MoonshotAI/Att…
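
To make the contrast in the announcement concrete, here is a rough pure-Python sketch of the general idea: a standard residual stream sums all preceding layer outputs with a fixed weight of 1, while an attention-style aggregation weights them with a softmax over similarity scores. This is not the formulation from the report. The function names, the use of layer outputs as both keys and values, the scaled dot-product scoring, and the `query` vector (which would be learned and input-dependent in a real model) are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(layer_outputs):
    """Fixed, uniform accumulation: every preceding output gets weight 1."""
    dim = len(layer_outputs[0])
    return [sum(h[i] for h in layer_outputs) for i in range(dim)]


def attention_residual(layer_outputs, query):
    """Hypothetical attention over preceding layers.

    Assumption: each layer output serves as both key and value, and
    `query` stands in for a learned, input-dependent vector.
    """
    dim = len(query)
    scale = math.sqrt(dim)
    # One scaled dot-product score per preceding layer.
    scores = [sum(q * k for q, k in zip(query, h)) / scale for h in layer_outputs]
    weights = softmax(scores)
    # Weighted sum of the layer outputs, component by component.
    mixed = [sum(w * h[i] for w, h in zip(weights, layer_outputs)) for i in range(dim)]
    return mixed, weights


# Three toy "layer outputs" of dimension 2 and an illustrative query.
layer_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]
mixed, weights = attention_residual(layer_outputs, query)
```

In this toy setup the weights sum to 1, so the aggregated vector stays on the scale of a single layer output, whereas the uniform sum grows with depth. That is one plausible reading of the "hidden-state growth" point above, not a claim about the actual implementation.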