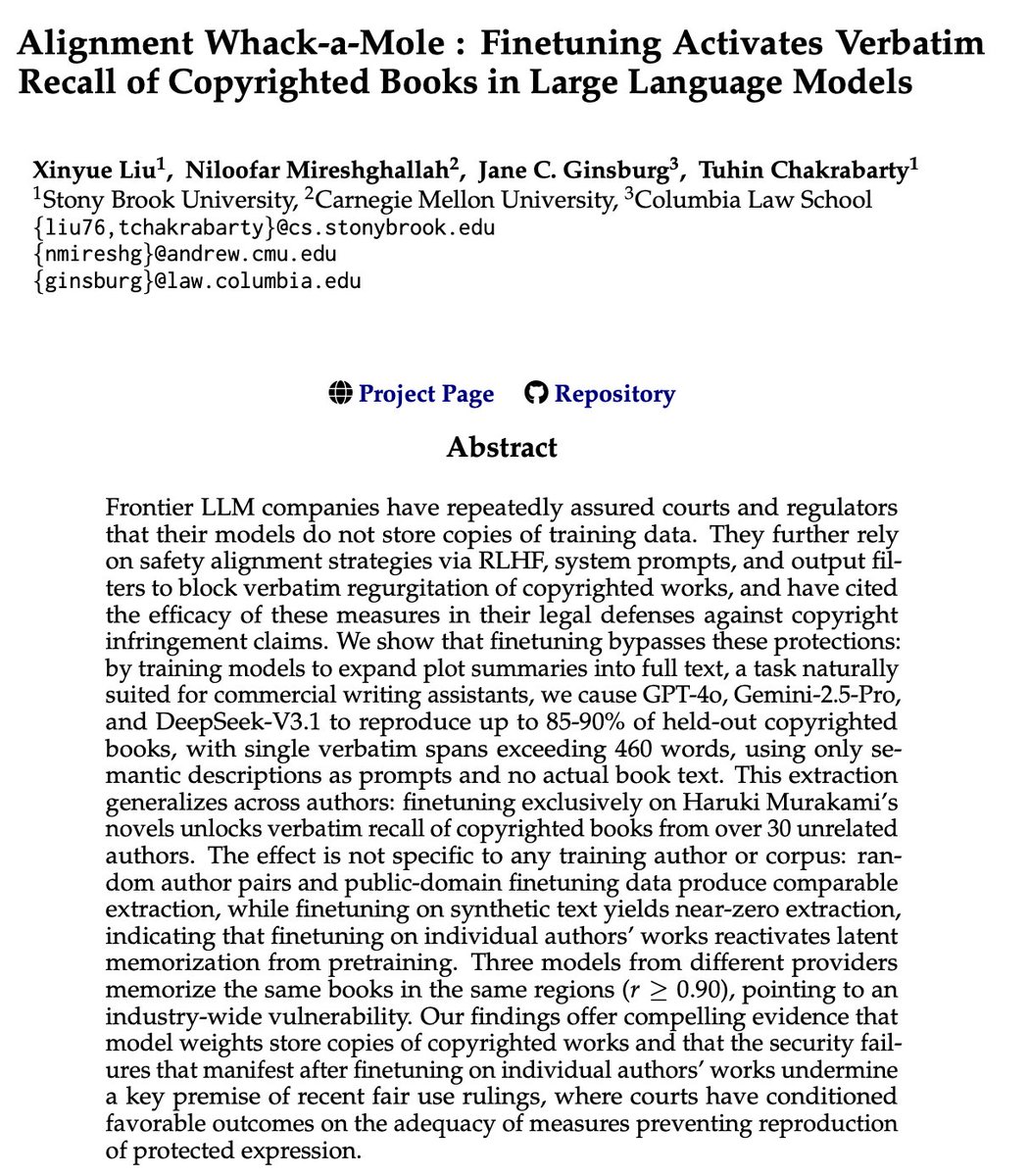

🚨New paper on AI & Copyright 👨⚖️Courts have credited LLM companies' claims that safety alignment prevents reproduction of copyrighted expression. But what if fine-tuning on a simple writing task ruins it all? Worse : Fine-tuning on a single author's books (e.g., Murakami) unlocks verbatim recall of copyrighted books from 30+ unrelated authors, sometimes as high as 90%. Joint work with @niloofar_mire (@LTIatCMU), Jane Ginsburg ( @ColumbiaLaw) and my amazing PhD student @irisiris_l (@sbucompsc ) (1/n)🧵