AJAY

@jaykrishAGI

I share AI insights, news, and the latest trends and tools - helping you stay ahead in just 5 minutes a week | @IITGuwahati @Covcampus | @UNDP Volunteer (Climate)

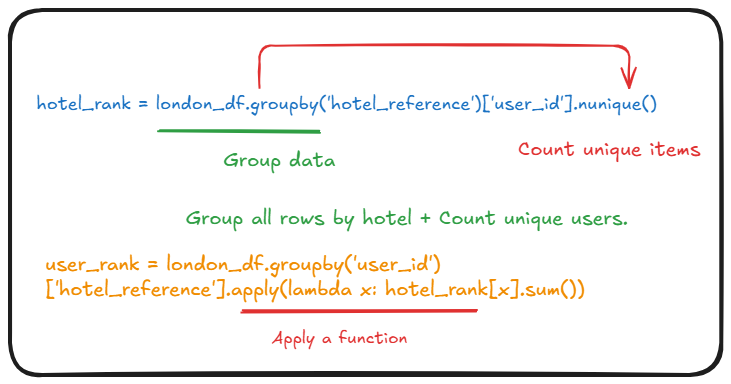

The government in Iran is using a puppet technique to keep our minds stationary through illusionism in the media. A puppet moves because someone else controls it with strings. Now Iran is using these same tactics in perpetuity, like making historical figures speak and other kinds of manipulation. With face swap technology, it has become very hard to identify what is real and what is not. Wav2Lip and FaceSwap-style tools rely on GAN-like training: a generator produces the fake, and a discriminator tries to tell it apart from the real thing. Ultimately this creates ultra-realistic fake data.

Currently I have noticed that even this SOTA model shows high accuracy for light-skinned males and much lower accuracy for dark-skinned females; this kind of gender and skin-tone bias is detrimental even with this LenYun model, on top of the general machine bias.

Most of this agentic AI will run on feedback loops, meaning a system that reacts to its own results. Positive feedback loops are reinforcing, while negative feedback loops are stabilizing. I am just thinking in terms of confusion matrices here: is there a chance that this embodied AI produces a true negative (correctly leaving alone a person who does not need help), a false positive (attacking an innocent person by mistake), or a false negative (missing a real patient who needs help during an emergency)? A true negative is the cheapest outcome, the kind you might see even from a low-cost embodied robot in a local place. But an FP would not be tolerable, and an FN is dangerous here. I recommend using a fairness mitigation technique at this point. That's the only solution. Part 1.
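To make the confusion-matrix question concrete, here is a minimal Python sketch that counts TP, FP, TN, and FN separately for each demographic group and compares the false positive and false negative rates. Everything in it is an assumption for illustration: the function names, the label convention (1 = person needs help), the groups "A" and "B", and the toy data are made up and are not results from any real model.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count TP, FP, TN, FN for binary labels (assumed: 1 = person needs help)."""
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 1:
            counts["TP"] += 1  # helped someone who needed help
        elif truth == 0 and pred == 1:
            counts["FP"] += 1  # acted on someone who did not need it
        elif truth == 0 and pred == 0:
            counts["TN"] += 1  # correctly did nothing
        else:
            counts["FN"] += 1  # missed someone who needed help
    return counts


def rates_by_group(y_true, y_pred, groups):
    """Return {group: {"FPR": ..., "FNR": ...}} so the error rates can be compared."""
    result = {}
    for group in sorted(set(groups)):
        idx = [i for i, g in enumerate(groups) if g == group]
        c = confusion_counts([y_true[i] for i in idx], [y_pred[i] for i in idx])
        negatives = c["FP"] + c["TN"]
        positives = c["TP"] + c["FN"]
        result[group] = {
            "FPR": c["FP"] / negatives if negatives else 0.0,
            "FNR": c["FN"] / positives if positives else 0.0,
        }
    return result


# Hypothetical toy data: the system is perfect on group A and makes errors on group B.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = rates_by_group(y_true, y_pred, groups)
for group, r in rates.items():
    print(group, r)
```

A large gap in FPR or FNR between groups is the kind of signal that would justify a fairness mitigation step, such as rebalancing the training data or adjusting decision thresholds per group.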