jonclement

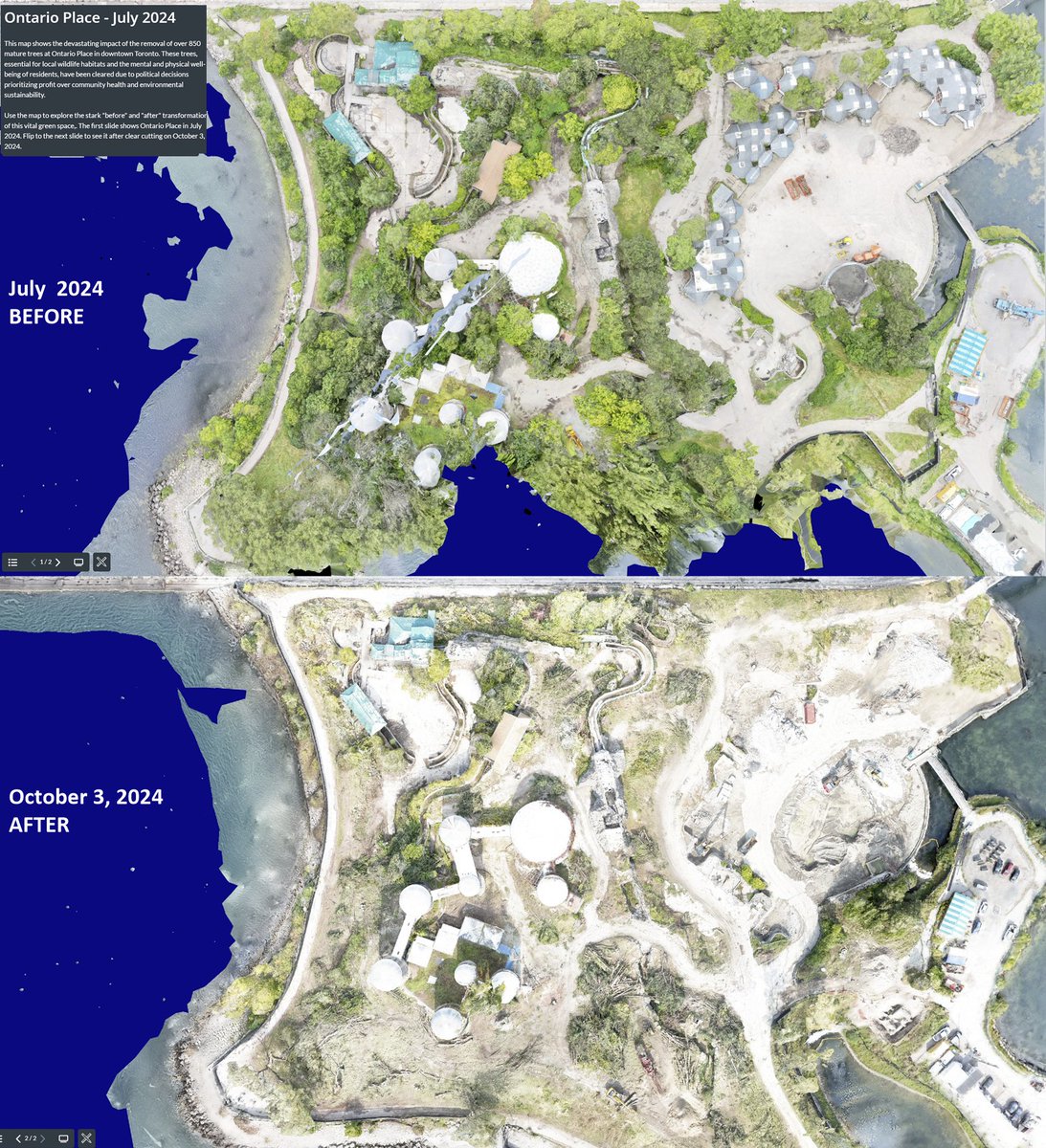

Today I went down to document and bear witness to the destruction of the West Island. Earlier in the summer, I used my drone to create a 3D map of its beauty. I am sharing a link that shows the before and after of this special place. ion.cesium.com/stories/viewer…

Distressed birds circling the forest destruction at Ontario Place @ONPlace4All

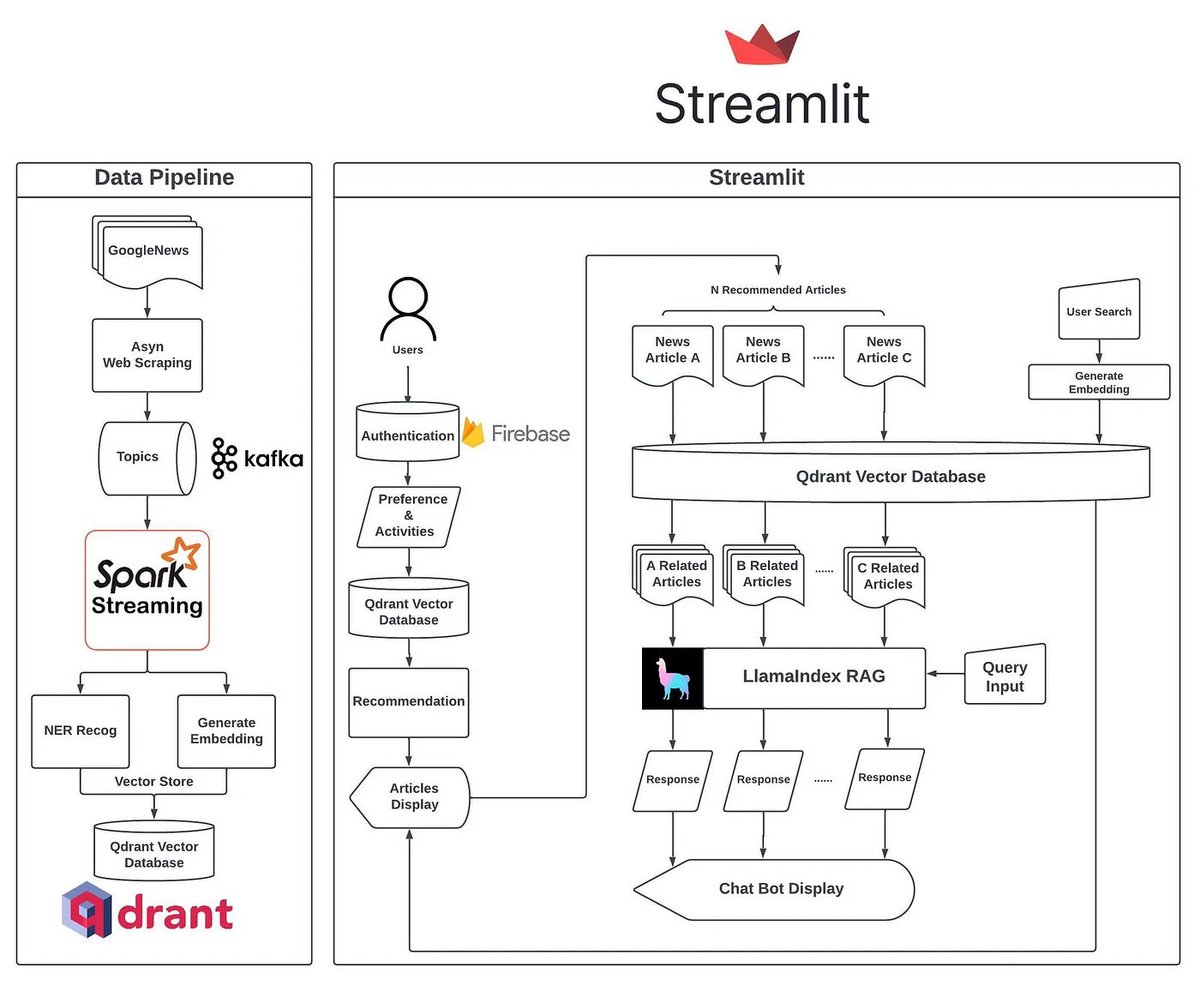

We’re excited to feature NewsGPT (by timho102003) 📰🧠, a production-grade news aggregator augmented with LLM capabilities.

✅ Daily pipeline of reliable news sources
✅ Tailored news recommendations
✅ For any given article, chat with related articles

Best of all, it’s fully open-source. It’s an awesome reference application for anyone looking to build production-grade RAG combined with recommendation systems 🔎

There are some awesome architecture details ⚙️:
1️⃣ Data pipeline: Spark batch processing for NER/embeddings
2️⃣ Personalization: @Firebase for auth, AWS Lambda for recommendations, @qdrant_engine as vector db
3️⃣ Application: @llama_index for RAG capabilities, @streamlit for personalization

Full blog here: blog.llamaindex.ai/newsgpt-neotic…
Open-source repo: github.com/timho102003/Ne…

This has since turned into a production app (Neotice); check it out here! neotice.app

Full credits: Tim Ho (timho102003) as the author of this hackathon-project-turned-full-stack-app! Congrats 🙌
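The "chat with related articles" feature depends on finding articles whose embeddings sit close to the current one. Here is a minimal sketch, assuming each article already has an embedding vector produced by the batch pipeline. The `related_articles` helper, the article ids, and the toy vectors are hypothetical. This is not NewsGPT's actual code: the project uses Qdrant for this lookup, whereas the sketch does a brute-force cosine-similarity scan to show the idea.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def related_articles(
    target_id: str, embeddings: dict[str, list[float]], k: int = 3
) -> list[str]:
    """Return ids of the k articles most similar to target_id (hypothetical helper).

    Brute-force scan over every article; a vector database answers the
    same nearest-neighbour question using an index instead.
    """
    target = embeddings[target_id]
    scored = [
        (cosine_similarity(target, vec), article_id)
        for article_id, vec in embeddings.items()
        if article_id != target_id
    ]
    # Highest similarity first.
    scored.sort(reverse=True)
    return [article_id for _, article_id in scored[:k]]


if __name__ == "__main__":
    # Toy 3-dimensional "embeddings"; real ones come from an embedding model.
    toy = {
        "park-redevelopment": [0.9, 0.1, 0.0],
        "spa-finances": [0.8, 0.2, 0.1],
        "compiler-release": [0.0, 0.1, 0.9],
    }
    print(related_articles("park-redevelopment", toy, k=1))  # ['spa-finances']
```

Swapping the scan for a vector-database query changes how fast the neighbours are found, not which ones count as related.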

#ONpoli NEW: Therme Bucharest, which opened in 2016, has lost money overall as a business. Its cumulative net profit over its history is -$15.5M CAD (RON to CAD is about 0.3). For 2022, the net profit was $3.2M CAD. As a reminder, Therme's estimated construction costs are $350M CAD.

"This spa is not a place for millionaires": Therme Group CEO Robert Hanea on his controversial plans for Ontario Place torontolife.com/city/ontario-p…