Josip Krapac

6.9K posts

@josipK

I'm learning to be patient, but it's going slower than expected.

Barcelona, Cataluña · Joined April 2009

935 Following · 326 Followers

@stanmaltman @yoavgo @stanislavfort So precision doesn't matter because I can brute-force search for the needle in the haystack, as long as my recall makes sure that there's a needle in that haystack in the first place? But there must be some precision below which this doesn't pay off; it can't be arbitrarily small.

@giffmana The loss was falling so fast it had to kill itself before it reaches AGI.

Josip Krapac retweeted

4 postdoc positions are available at TU Darmstadt and Centre for Excellence on Reasonable AI.

I am part of the Active AI group. Please help me spread the word.

Description: hessian.ai/projects/reaso…

Application portal: career.tu-darmstadt.de/tu-darmstadt/j…

@pmddomingos @TDaltonC @DarioAmodei I'd say the guy is just deciding for himself. What's wrong with that?

@TDaltonC @DarioAmodei Yep, the arrogance is on full display here: he thinks he knows better, and won’t let the Ukrainians decide for themselves.

So @DarioAmodei, you don’t think Ukraine should be allowed to use autonomous weapons against Russia?

@yishan @danaparish I mostly chat about my projects and (imagined) illnesses. I don't know which you'd enjoy more!

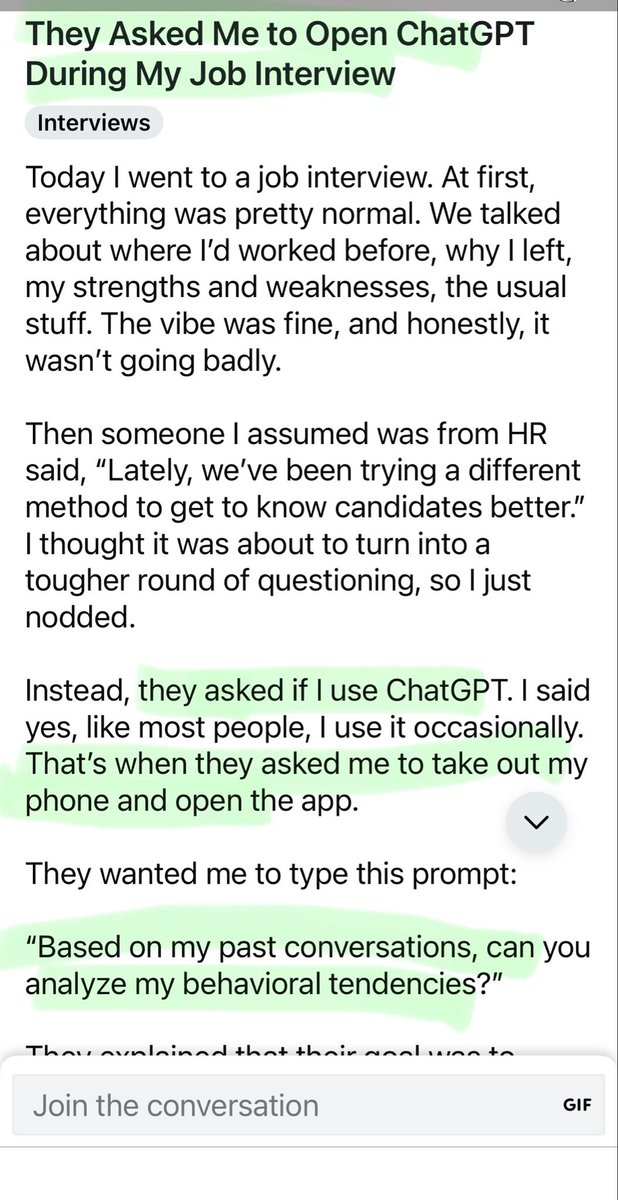

As an employer, I would absolutely love to have this kind of info on a candidate and would consider it extremely valuable for making hiring decisions.

It is also grossly inappropriate to do and I would never do it.

I was also a bit curious about what it would say about me personally so I typed it into ChatGPT.

@ChrSzegedy "Are there any works that explore curriculum learning in an unsupervised setting? Does the notion of Y is easier to learn if you first learn X happen automatically in auto-regressive modelling, or diffusion models or any other generative modelling approach?"

There must be huge low-hanging fruit in figuring out how to train metacognition incrementally.

If the human brain is any good as a template, then it is quite telling that most of the dramatic cognitive learning happens after the brain has finished growing and has lost much of its plasticity. Humans get more and more effective at learning metacognitive skills even in their twenties.

We achieve similar effects by training increasingly large models, but there must be a way to enable incrementally improved metacognitive learning at near-constant compute cost after certain minimal skills are achieved.

@duolingo Something is broken again. I didn't get my x3 today, and a bunch of points from today weren't added... My profile is j88k.

@giffmana Definitely speeding up (flash attention, kv-cache) and quantization basics.

Hey chat, I need your opinions!

Later this week, I'll teach my usual Transformers class. However, I just found out that someone is already giving a "foundations of attention and transformers" lecture before me.

So I'm thinking of still doing a "recap Lucas style" but then spending more time on some topics my lecture usually doesn't cover, or just scratches the surface.

What more advanced/recent topics would you like to see included? Keep in mind this is a teaching/class style talk.

Some ideas: more in-depth on decoding, kv-cache. Flex/flash/paged attention. Spend more time on multimodal versions? Tokenizers?

I think bad ideas: geglu, global/local, rmsnorm, ... I feel like these are all trivially understood and not worth "teaching", though you may convince me otherwise.

@eklek_trokut I also recommend the foot creams from DM. A little self-love before bed!

@eklek_trokut Let me know when you start with compression stockings for varicose veins. If you haven't already!

@phillip_isola @francoisfleuret It's very similar to what I think makes sense: minimal change to the existing method(s) that makes the biggest impact. What is missing is how one measures impact, but your idea with "open problems" makes sense. Minimal change forces you to connect to the existing knowledge.

@francoisfleuret One way around this might be to have a system that penalizes methodological novelty: your reward is the open problems you solved minus the new methods you had to introduce to do so.

I think that could be fun to try as a workshop competition or something.

@AntOne1998095 @LibertyF0x @AlecStapp Can you do it cumulative from when Poland joined? This is just one year, right?

@LucaAmb @ziv_ravid @yoavgo I can swap in arguments (variables) in that "reasoning program" provided that "argument types" are "the same" and get a valid result.

@LucaAmb @ziv_ravid @yoavgo But if a person gives me reasoning steps, and they entail each other, I'm more likely to believe both the result and that it has been reached by reasoning. I can apply the same steps and arrive at the same results.

@ziv_ravid @LucaAmb @yoavgo I have no idea if LLMs do that. They certainly sometimes leave that impression, but the impression of a mechanism and the mechanism itself are not the same thing.

@ziv_ravid @LucaAmb @yoavgo Execution is following the steps of the plan, verifying that following the steps leads us to the desired intermediate state, and triggering backtracking and re-planning.
