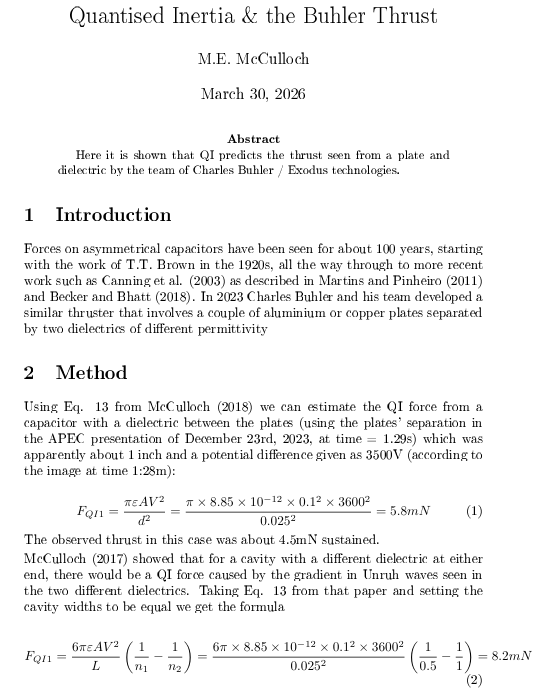

Rustumon retweeted

Rustumon

15K posts

I have another meeting today to work towards funding a study to map the detailed structure of a Rindler horizon, & another to look at #QI's predictions way in the past.

Rustumon retweeted

@memcculloch cooling slightly changes the micro-geometry of cavities and interfaces near the surface? (A bump to acceleration?)

Rustumon retweeted

#Anomalyoftheday The Dark Rate. Electrons jump out of metals faster if you illuminate or heat them. That makes sense: more energy available, but electrons also jump out more when you keep them dark & cool them way below zero. This is a current anomaly in solid state physics.

Rustumon retweeted

ODNI is delivering on President Trump’s Cyber Strategy for America. 🇺🇸

Under DNI Gabbard’s leadership, the Intelligence Community is advancing the strategy’s third pillar—Modernize and Secure Federal Government Networks—through the largest IC-wide technology modernization effort in history, to strengthen our defenses, ensure greater efficiency, and implement real cost savings for the American people.

As part of this effort, we’ve defined and rolled out the Intelligence Community’s new Zero Trust strategy, shifting to a data-centric security model that protects information regardless of location or network. At the same time, we've launched a shared Intelligence Community repository of cybersecurity authorizations for use by all 18 elements, ending duplicative hardware/software accreditation efforts, streamlining operations, and saving taxpayer dollars.

Thanks to @POTUS and @DNIGabbard, America’s cyber infrastructure is positioned to defend critical intelligence systems and remain ahead of our adversaries. Always.

🔗 whitehouse.gov/wp-content/upl…

Rustumon retweeted

#Anomalyoftheday GPS satellites show an acceleration anomaly of 7x10^-10 m/s^2, close to the acceleration anomaly shown by cosmic acceleration, galaxy rotations, wide binary orbits, Proxima, some spacecraft, oh, & predicted by QI. Small number. Big reach! ion.org/publications/a…

Rustumon retweeted

Funny how you claim Iran opposes terrorism while arming Hezbollah (1983 Beirut barracks bombing: 241 US dead), Houthis (Red Sea attacks), Iraqi militias (200+ attacks on US forces), Hamas/PIJ rockets on civilians - plus multiple foiled IRGC plots to assassinate Trump in 2024. 'Rats' indeed. Pot, meet kettle.

Hypocrisy

Harold "Sonny" White, PhD@EagleworksSonny

New paper accepted in Physical Review Research (APS): “Emergent Quantization from a Dynamic Vacuum.” We show the hydrogen spectrum emerges from dynamic vacuum physics, suggesting quantization may arise from a vacuum that varies in space and time. journals.aps.org/prresearch/abs… #physics

QAM

Rustumon retweeted

I'm in the process of trying to change the concept of mass, not attempted since 1600. There will be rending of garments, but rest assured the new model #QI is in better agreement with new data from deep space & new data from the lab & will give the world a technological boost.

Virtual / Transient particles create matter from the quantum foam

nature.com/articles/s4158…

Rustumon retweeted

@0xAli_ @thisguyknowsai Why not store the model on a RAM drive and load the layers from there?

this is real but pretty misleading. airllm has been around since late 2023, not breaking news. the tweet conveniently skips the biggest problem: speed. it loads every layer from disk per token, so a 70b model at fp16 means reading 140gb from disk for each token. even with a good nvme that's like 20 seconds per token. your old gaming laptop technically runs it but you wait 30 minutes for a short answer. if you have a good gpu you don't need this, llama.cpp with quantized models is way faster. if you don't have a good gpu you're stuck waiting forever. cool proof of concept but the bottleneck is disk io not gpu, and no hardware upgrade fixes that
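The arithmetic in that reply can be checked with a quick back-of-the-envelope sketch. The 7 GB/s sequential read speed and the 90-token answer length are assumptions picked for illustration (the reply gives only the 140 GB, 20 second and 30 minute figures), and `seconds_per_token` is a hypothetical helper, not part of AirLLM.

```python
def seconds_per_token(params_billion, bytes_per_param, disk_gb_per_s):
    """Time to stream every weight from disk once, which is the cost of one token
    if all layers are re-read per token."""
    model_gb = params_billion * bytes_per_param  # billions of params * bytes each = GB
    return model_gb / disk_gb_per_s


# 70B parameters at fp16 (2 bytes each) is 140 GB of weights.
model_gb = 70 * 2

# Assumption: a fast NVMe drive sustaining about 7 GB/s sequential reads.
per_token = seconds_per_token(70, 2, 7.0)

# Assumption: a "short answer" of about 90 tokens.
answer_minutes = 90 * per_token / 60

print(f"{model_gb} GB per token, {per_token:.0f} s per token, {answer_minutes:.0f} min per answer")
```

Under those assumptions the numbers line up with the reply: roughly 20 seconds per token and about half an hour for a short answer. A slower drive scales the wait proportionally.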

🚨 BREAKING: Someone just made 70B parameter models run on a single 4GB GPU.

It's called AirLLM. No quantization. No distillation. No pruning. Just raw 70B inference on hardware that costs less than a dinner.

You can even run Llama 3.1 405B on 8GB VRAM.

Here's how it works:

→ Decomposes the model layer-by-layer

→ Loads only one layer into GPU memory at a time

→ Runs inference, moves to the next layer

→ Prefetches the next layer while computing the current one

→ Supports 4-bit and 8-bit compression for 3x speed boost
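The steps listed above can be sketched in a few lines. This is a toy illustration of the general layer-streaming idea, not AirLLM's actual code: the layers are simple scale-and-shift operations stored as JSON files, and every name here (`save_layers`, `load_layer`, `apply_layer`, `run_layerwise`) is hypothetical.

```python
import json
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def save_layers(layers, directory):
    """Write each layer's parameters to its own file (stand-in for per-layer shards)."""
    paths = []
    for i, layer in enumerate(layers):
        path = Path(directory) / f"layer_{i}.json"
        path.write_text(json.dumps(layer))
        paths.append(path)
    return paths


def load_layer(path):
    """Read a single layer's parameters from disk."""
    return json.loads(Path(path).read_text())


def apply_layer(layer, activations):
    """Toy layer: elementwise scale and shift, standing in for a transformer block."""
    return [layer["scale"] * x + layer["shift"] for x in activations]


def run_layerwise(paths, activations):
    """Run layers in order, prefetching layer i+1 while layer i computes.

    At most two layers are held at once: the current one and the prefetched one.
    """
    if not paths:
        return activations
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(load_layer, paths[0])
        for i in range(len(paths)):
            layer = pending.result()  # wait for the layer we need now
            if i + 1 < len(paths):
                pending = pool.submit(load_layer, paths[i + 1])  # start the next read
            activations = apply_layer(layer, activations)
            del layer  # release the weights once this layer's output is computed
    return activations


layers = [
    {"scale": 2.0, "shift": 1.0},
    {"scale": 0.5, "shift": 0.0},
    {"scale": 1.0, "shift": -1.0},
]
with tempfile.TemporaryDirectory() as tmp:
    result = run_layerwise(save_layers(layers, tmp), [1.0, 2.0])
print(result)  # [0.5, 1.5]
```

The sketch shows why memory use stays small (only one or two layers are resident) and also why throughput depends on how fast `load_layer` can read from storage: every layer is read again on every pass.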

No cloud API. No $10K GPU. Just pip install airllm and go.

Here's the wildest part:

It supports almost every major model — Llama, Qwen, Mistral, ChatGLM, Baichuan, InternLM — and it auto-detects the model type. One line of code to load. One line to generate.

Works on Linux, macOS (Apple Silicon), and even Google Colab free tier.

Your old gaming laptop can now run the same models that needed an A100.

100% Open Source. Apache 2.0 License.

Rustumon retweeted

The James Webb telescope keeps proving QI. The Little Red Dots are 14B year old galaxies, spinning way too fast. Only quantised inertia predicts that spin because the cosmos was informationally smaller then & inertia was lower. No black holes needed now! skyandtelescope.org/astronomy-news…

@NachoQuixotic @MyLordBebo They were all silent when he decided that and when they were in power they did the same.
