Pinned Tweet

DataVidhya

532 posts

@thedatavidhya

Crack your data interviews with us. Courses, Projects & Coding platform 🚀

Joined January 2025

1 Following, 4.3K Followers

DataVidhya retweeted

AWS has 200+ services. You need 14.

I built a production pipeline using just these.

I just dropped a FREE 𝗔𝗪𝗦 𝗠𝗮𝘀𝘁𝗲𝗿𝗰𝗹𝗮𝘀𝘀 on YouTube.

A full end-to-end data engineering project on AWS — from zero to production pipeline.

Here's what you'll learn:

☁️ 𝗖𝗹𝗼𝘂𝗱 𝗙𝘂𝗻𝗱𝗮𝗺𝗲𝗻𝘁𝗮𝗹𝘀

→ IaaS vs PaaS vs SaaS — what you manage vs what AWS manages

→ Regions, Availability Zones, Edge Locations

→ AWS Account setup & IAM basics

🪣 𝗦𝘁𝗼𝗿𝗮𝗴𝗲

→ S3 deep dive — storage classes, lifecycle, versioning, replication

→ EBS vs EFS — when to use block vs file storage

🗄️ 𝗗𝗮𝘁𝗮𝗯𝗮𝘀𝗲𝘀

→ RDS, DynamoDB, Redshift, DMS

→ When to pick relational vs NoSQL vs data warehouse

⚡ 𝗖𝗼𝗺𝗽𝘂𝘁𝗲

→ EC2 instance types & pricing models

→ Lambda — serverless data processing

→ Glue — managed ETL with PySpark

→ EMR — big data at scale

📊 𝗔𝗻𝗮𝗹𝘆𝘁𝗶𝗰𝘀

→ Athena — SQL on S3, pay per query

→ Glue Data Catalog — centralized metadata

→ QuickSight — dashboards & BI

→ Kinesis — real-time streaming

🔨 𝗧𝗵𝗲 𝗛𝗮𝗻𝗱𝘀-𝗢𝗻 𝗣𝗿𝗼𝗷𝗲𝗰𝘁

This is where it gets real.

You'll build a complete YouTube Trending Data Pipeline.

Live YouTube API data → S3 → Lambda → Glue → Athena → QuickSight.

→ 𝗕𝗿𝗼𝗻𝘇𝗲 𝗟𝗮𝘆𝗲𝗿: Ingest raw data from YouTube API across 10 countries

→ 𝗦𝗶𝗹𝘃𝗲𝗿 𝗟𝗮𝘆𝗲𝗿: Cleanse, deduplicate, add engagement metrics with Glue ETL

→ 𝗗𝗮𝘁𝗮 𝗤𝘂𝗮𝗹𝗶𝘁𝘆 𝗚𝗮𝘁𝗲: Validate before anything hits production

→ 𝗚𝗼𝗹𝗱 𝗟𝗮𝘆𝗲𝗿: Business-ready analytics — trending, channel & category insights

All orchestrated by Step Functions. Failures alert via SNS. Automated on a schedule.
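
The layered flow above can be sketched locally in plain Python. This is only an illustration under stated assumptions, not the project's code: the record fields (`video_id`, `country`, `views`, `likes`), the function names, and the engagement formula (likes divided by views) are all hypothetical. In the pipeline described here, these stages would run as Lambda and Glue jobs coordinated by Step Functions.

```python
from collections import defaultdict

# Hypothetical raw records standing in for YouTube API responses (bronze layer).
RAW = [
    {"video_id": "a1", "country": "US", "views": 1000, "likes": 100},
    {"video_id": "a1", "country": "US", "views": 1000, "likes": 100},  # duplicate
    {"video_id": "b2", "country": "IN", "views": 500, "likes": 25},
    {"video_id": "c3", "country": "IN", "views": 0, "likes": 0},
]


def to_silver(rows):
    """Deduplicate on (video_id, country) and add an assumed engagement metric."""
    seen = set()
    out = []
    for row in rows:
        key = (row["video_id"], row["country"])
        if key in seen:
            continue
        seen.add(key)
        rate = row["likes"] / row["views"] if row["views"] else 0.0
        out.append({**row, "engagement_rate": rate})
    return out


def quality_gate(rows):
    """Raise if any row breaks a basic rule, so bad data never reaches gold."""
    for row in rows:
        if not row["video_id"] or row["views"] < 0 or row["likes"] < 0:
            raise ValueError(f"quality check failed for {row}")
    return rows


def to_gold(rows):
    """Aggregate total views per country as a simple business-ready table."""
    totals = defaultdict(int)
    for row in rows:
        totals[row["country"]] += row["views"]
    return dict(totals)


def run_pipeline(raw):
    """Run the stages in order; an orchestrator would trigger this on a schedule."""
    return to_gold(quality_gate(to_silver(raw)))


gold = run_pipeline(RAW)
print(gold)
```

Keeping the quality gate as its own step means a failed check stops the run before the gold layer is written, which is the point where an alert (SNS in the post) would fire.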

This is how production data pipelines are built.

Not in notebooks. Not with drag-and-drop. With real AWS services, real architecture, and real code.

Link to the video in the comments 👇

> Disappear for 6–8 months and Crack Data Platform Engineer Roles.

Here is how you can do it in 6 steps:

Step 1 (3–4 Weeks) → Strong Foundations

→ Advanced SQL (CTEs, window functions, optimization)

→ Python (data pipelines, APIs, scripting)

→ Linux & shell scripting

→ Basics of distributed systems

Step 2 (4–5 Weeks) → Data Warehousing Core

→ Star & Snowflake schema design

→ Partitioning & clustering

→ Query optimization

→ Columnar storage concepts

Step 3 (4–5 Weeks) → ETL Frameworks

→ Build reusable ETL pipelines

→ Airflow (DAG design, retries, scheduling)

→ Data validation & quality checks

→ Incremental data loading

Step 4 (5–6 Weeks) → Big Data Processing

→ Apache Spark (DataFrames, transformations)

→ PySpark optimization techniques

→ Handling TB-scale data

→ Performance tuning (caching, joins)

Step 5 (4–5 Weeks) → Data Platform Design

→ Data lakes vs data warehouses

→ Lakehouse architecture (Delta Lake/Iceberg)

→ Metadata management

→ Schema evolution & versioning

Step 6 (4–6 Weeks) → Production & Cloud

→ AWS (S3, EMR, Redshift, Glue) or GCP stack

→ Docker + basic Kubernetes

→ CI/CD for data pipelines

→ Monitoring (logs, alerts, failures)

Execution Layer (parallel throughout)

→ Build a mini data platform (ingestion → processing → serving)

→ Integrate batch + streaming pipelines

→ Work with real datasets (events, logs, APIs)

→ Document architecture + decisions (GitHub + diagrams)

> Amazon Data Engineering Interview Questions

1. SQL / Data Manipulation

1. Find the 2nd highest salary from a table

2. Identify duplicate records in a dataset.

3. Remove duplicates without using DISTINCT

4. Get top N records per group (e.g., top 3 users per country)

5. Calculate running totals (cumulative sum)

6. Find gaps in dates or missing records

7. Write a query to pivot/unpivot data

8. Find users who logged in consecutively for N days

9. Join multiple tables and handle NULL cases

10. Optimize a slow SQL query
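
A few of the SQL questions above (second highest salary, top N per group, running totals) can be tried with Python's built-in `sqlite3` module. This is a minimal sketch, not a model answer: the `employees` table and its rows are made up, and the window-function queries assume a SQLite build of 3.25 or newer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE employees (name TEXT, country TEXT, salary INTEGER);
    INSERT INTO employees VALUES
        ('Ana', 'US', 300), ('Bo', 'US', 200), ('Cy', 'US', 200),
        ('Di', 'IN', 250), ('Ed', 'IN', 150), ('Flo', 'US', 100);
    """
)

# Second highest distinct salary (NULL/None if there is no second value).
second_highest = conn.execute(
    "SELECT MAX(salary) FROM employees "
    "WHERE salary < (SELECT MAX(salary) FROM employees)"
).fetchone()[0]

# Top 2 earners per country; ties are broken by name to keep results stable.
top_per_country = conn.execute(
    """
    SELECT country, name, salary FROM (
        SELECT *, ROW_NUMBER() OVER (
            PARTITION BY country ORDER BY salary DESC, name
        ) AS rn
        FROM employees
    )
    WHERE rn <= 2
    ORDER BY country, rn
    """
).fetchall()

# Running total (cumulative sum) of salary, ordered by name.
running_total = conn.execute(
    "SELECT name, SUM(salary) OVER (ORDER BY name) FROM employees ORDER BY name"
).fetchall()

print(second_highest)
print(top_per_country)
print(running_total)
```

Swapping `ROW_NUMBER()` for `DENSE_RANK()` would keep tied salaries together, which is a common follow-up in this kind of question.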

2. Python / Scripting

1. Read multiple CSV files and merge them

2. Handle missing or corrupted data in a dataset

3. Transform nested JSON into tabular format

4. Implement data deduplication logic

5. Process large files efficiently (memory optimization)

6. Generators vs lists (difference and use case)

7. Write ETL logic in Python
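
As a rough illustration of the generator and memory questions above, the sketch below streams CSV rows one at a time and skips corrupted values. The column name `amount` and the sample data are assumptions made for the example.

```python
import csv
import io
import sys


def read_rows(handle):
    """Yield one parsed CSV row at a time instead of loading the whole file."""
    for row in csv.DictReader(handle):
        yield row


def total_amount(rows):
    """Sum the 'amount' column, skipping rows where it is missing or not numeric."""
    total = 0.0
    for row in rows:
        try:
            total += float(row["amount"])
        except (KeyError, TypeError, ValueError):
            continue
    return total


# In-memory stand-in for a large file; rows 2 and 3 hold bad values.
data = "id,amount\n1,10.5\n2,\n3,abc\n4,4.5\n"
total = total_amount(read_rows(io.StringIO(data)))

# A list materializes every element; a generator only keeps its current state.
squares_list = [n * n for n in range(100_000)]
squares_gen = (n * n for n in range(100_000))
list_is_bigger = sys.getsizeof(squares_list) > sys.getsizeof(squares_gen)

print(total, list_is_bigger)
```

The trade-off is that a generator can be consumed only once, so a list is still the better fit when the data is small and needs several passes.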

3. ETL / Pipeline Design

1. Design a pipeline to ingest data from multiple sources

2. Handle late-arriving data in pipelines

3. Implement SCD Type 1 and Type 2

4. Ensure idempotency in ETL jobs

5. Design incremental vs full load strategy

6. Handle schema changes in pipelines

7. Orchestrate pipelines (Airflow concepts)
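
SCD Type 2 and idempotency from the list above can be shown together in a small pure-Python sketch. The dimension layout (`id`, `city`, `valid_from`, `valid_to`, `is_current`) and the function name `apply_scd2` are hypothetical; a real warehouse would typically do this with a MERGE statement.

```python
from datetime import date


def apply_scd2(dimension, incoming, today):
    """Return a new dimension list with SCD Type 2 history applied.

    dimension: list of dicts with keys id, city, valid_from, valid_to, is_current
    incoming:  dict mapping id -> latest city from the source
    """
    result = [dict(row) for row in dimension]  # copy so the input is not mutated
    current = {row["id"]: row for row in result if row["is_current"]}
    for key, city in incoming.items():
        row = current.get(key)
        if row is not None and row["city"] == city:
            continue  # unchanged, so a rerun adds nothing (idempotent)
        if row is not None:
            row["valid_to"] = today  # close the old version
            row["is_current"] = False
        result.append(
            {"id": key, "city": city, "valid_from": today,
             "valid_to": None, "is_current": True}
        )
    return result


dim = [{"id": 1, "city": "Pune", "valid_from": date(2024, 1, 1),
        "valid_to": None, "is_current": True}]
source = {1: "Delhi", 2: "Mumbai"}

once = apply_scd2(dim, source, date(2025, 1, 1))
twice = apply_scd2(once, source, date(2025, 1, 1))
print(len(once), once == twice)
```

Type 1 would simply overwrite `city` in place; Type 2 keeps the old row and closes it, which is why the first run yields three rows and the rerun changes nothing.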

4. Data Warehousing

1. Difference between OLTP and OLAP

2. Star schema vs snowflake schema

3. Fact table vs dimension table

4. Design a data warehouse for an e-commerce platform

5. Partitioning and bucketing strategies

6. Data modeling for analytics queries

5. Big Data / Distributed Systems

1. Explain how Hadoop works (HDFS basics)

2. Spark vs Hadoop MapReduce

3. How Spark processes large-scale data

4. Partitioning in Spark and its impact

5. Handling data skew

6. Fault tolerance in distributed systems

6. System Design (Data-Focused)

1. Design a real-time data pipeline (clickstream processing)

2. Design a log ingestion system at scale

3. Build a recommendation data pipeline

4. Design a metrics/analytics backend

5. Streaming vs batch processing trade-offs

6. Data consistency vs latency trade-offs

7. AWS / Cloud

1. How S3 works internally

2. Redshift vs RDS vs DynamoDB

3. Design pipeline using S3 + Glue + Redshift

4. What is EMR and when to use it

5. IAM roles in data pipelines

6. Cost optimization strategies in AWS

8. Data Quality & Reliability

1. Validate data in pipelines

2. Detect anomalies in incoming data

3. Ensure data consistency across systems

4. Monitoring and alerting strategies

5. Handling pipeline failures and retries

9. Behavioral (Amazon Leadership Principles)

1. Handling large data under pressure

2. Optimizing a data pipeline

3. Working with ambiguous requirements

4. Ownership of a failed system

5. Trade-offs in system design

10. Advanced / Edge Cases

1. Schema evolution in streaming systems

2. Exactly-once vs at-least-once processing

3. Change Data Capture (CDC) implementation

4. Handling backfills in production pipelines

5. GDPR-compliant data deletion

> We are hiring. Yes, you heard that right.

Redesign our website → datavidhya.com

Show us better UX + UI. If it stands out, we hire you.

Drop your work below.

DataVidhya retweeted

Looking to hire a good UI/UX Web Designer to help us improve our product @thedatavidhya

Share your portfolios below; I'm also sharing my website ⬇️

P.S. Someone who understands tech products is ++

DataVidhya retweeted

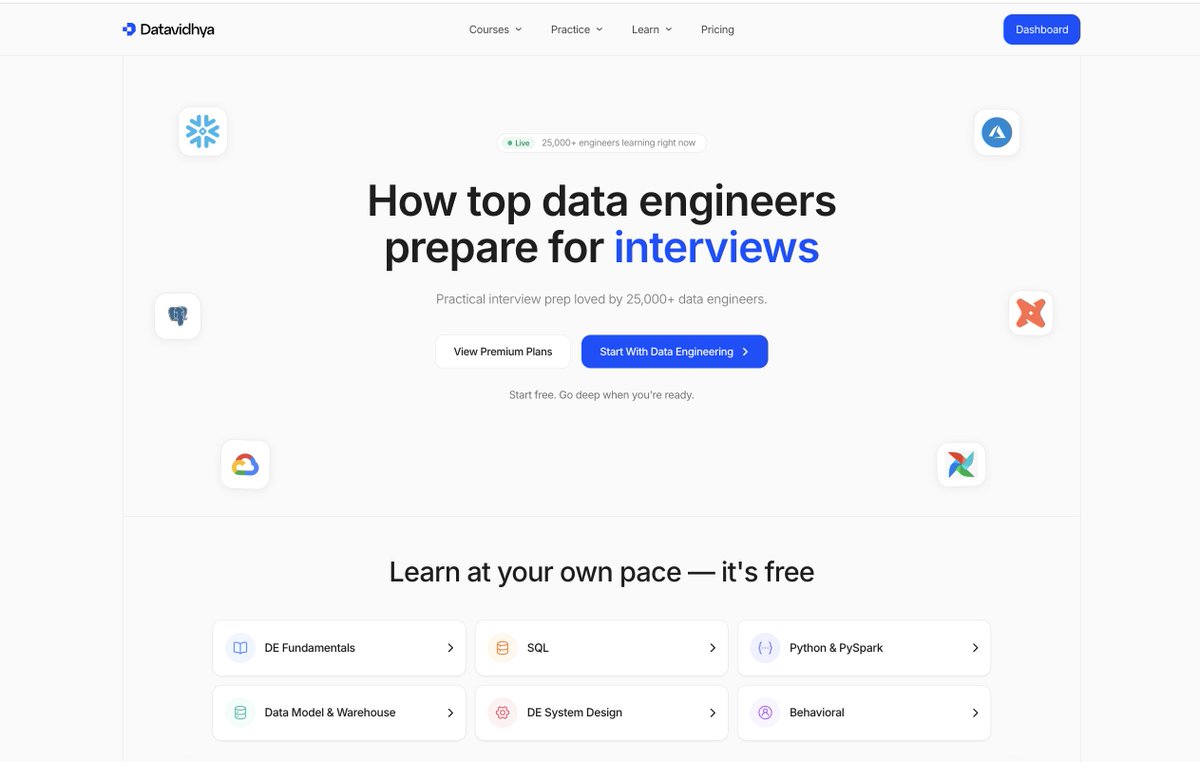

I spent 2 years building something I wish had existed when I started my Data Engineering journey.

Today, it's LIVE. And most of it is 𝗙𝗥𝗘𝗘.

DataVidhya is not another course platform.

It's the complete roadmap to becoming a Data Engineer and cracking interviews — structured, visual, and built from real experience.

Here's what you get for 𝗙𝗥𝗘𝗘:

📘 𝗗𝗮𝘁𝗮 𝗘𝗻𝗴𝗶𝗻𝗲𝗲𝗿𝗶𝗻𝗴 𝗙𝘂𝗻𝗱𝗮𝗺𝗲𝗻𝘁𝗮𝗹𝘀

→ What is Data Engineering? The Complete Picture

→ Why Data Engineering Matters

→ What a Data Engineer Actually Does

→ How Data Flows Through Organizations

🔧 𝗖𝗼𝗿𝗲 𝗖𝗼𝗻𝗰𝗲𝗽𝘁𝘀 𝗘𝘃𝗲𝗿𝘆 𝗗𝗘 𝗠𝘂𝘀𝘁 𝗞𝗻𝗼𝘄

→ Batch Processing vs Stream Processing

→ ETL vs ELT — The Modern Approach

→ Data Warehouse vs Data Lake

→ OLTP vs OLAP

→ Data Modeling for Data Engineers

→ Pipeline Design Patterns

→ Data Quality, Lineage & Governance

→ Idempotency — The Most Important Concept

🐍 𝗣𝘆𝘁𝗵𝗼𝗻 & 𝗣𝘆𝗦𝗽𝗮𝗿𝗸

🗄️ 𝗦𝗤𝗟 — From Basics to Advanced

🌀 𝗞𝗮𝗳𝗸𝗮

🌬️ 𝗔𝗶𝗿𝗳𝗹𝗼𝘄

☁️ 𝗖𝗹𝗼𝘂𝗱 & 𝗜𝗻𝗳𝗿𝗮𝘀𝘁𝗿𝘂𝗰𝘁𝘂𝗿𝗲

🏗️ 𝗗𝗘 𝗦𝘆𝘀𝘁𝗲𝗺 𝗗𝗲𝘀𝗶𝗴𝗻

🎯 𝗕𝗲𝗵𝗮𝘃𝗶𝗼𝗿𝗮𝗹 𝗜𝗻𝘁𝗲𝗿𝘃𝗶𝗲𝘄 𝗣𝗿𝗲𝗽

No random YouTube playlists.

No scattered bookmarks.

No guessing what to learn next.

Everything is structured. Topic by topic. Concept by concept. In the right order.

You read it like a book. You learn it like a curriculum.

This is the platform I needed 5 years ago.

Now it's yours.

> Disappear for 90 Days and Crack Full Stack Developer Roles.

Step 1 (2 Weeks) → Programming + Web Basics

→ JavaScript (core concepts, async, promises)

→ HTML + CSS (flexbox, grid, responsive)

→ Git & GitHub

→ Basic DSA (arrays, strings, hashing)

Step 2 (2 Weeks) → Frontend (React)

→ React fundamentals (components, props, state)

→ Hooks (useState, useEffect)

→ Routing

→ API integration

Step 3 (2 Weeks) → Backend (Node + Express)

→ Node.js basics

→ Express (routes, middleware)

→ REST APIs

→ Authentication (JWT)

Step 4 (2 Weeks) → Databases

→ MongoDB (CRUD, schema design)

→ Basic SQL concepts

→ Connecting backend with DB

Step 5 (1–1.5 Weeks) → Full Stack Integration

→ Connect frontend + backend

→ Auth flow (login/signup)

→ State management

→ Error handling

Step 6 (1–1.5 Weeks) → Production

→ Deployment (Vercel + Render/AWS basics)

→ Docker basics

→ Environment configs

→ Basic system design (scaling awareness)

Execution Layer (parallel throughout)

→ Build 3–4 solid full stack projects

→ One major project (end-to-end production level)

→ Daily coding (DSA consistency)

→ Clean GitHub + deployed links

> Data Engineering Interview Questions Actually Asked at Google (Beginner → Advanced)

1. BEGINNER (10 Questions): Fundamentals Google Tests

→ What is the difference between OLTP and OLAP systems?

→ Explain normalization vs denormalization with practical trade-offs.

→ What are indexes, and when do they hurt performance instead of helping?

→ Write a SQL query to find the second highest salary.

→ Difference between INNER JOIN and LEFT JOIN with edge cases.

→ What is a primary key vs a foreign key?

→ Explain ACID properties in databases.

→ What is data partitioning, and why is it important for large datasets?

→ Difference between DELETE, TRUNCATE, and DROP.

→ What are window functions? Give a use case.

2. INTERMEDIATE (10 Questions): Real Interview Patterns

→ Write a SQL query to find duplicate records in a table.

→ Given a logs table, find daily active users (DAU).

→ How would you design a pipeline to process clickstream data?

→ How do you handle late-arriving data in a pipeline?

→ What is idempotency? How do you ensure it in ETL jobs?

→ Write a query using ROW_NUMBER to deduplicate data.

→ Explain Slowly Changing Dimensions (Type 1 vs Type 2).

→ How would you design a system to ingest millions of events per second?

→ What are the trade-offs between batch and streaming systems?

→ How do you debug a failing data pipeline?
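
Two of the intermediate questions above, daily active users and deduplication with ROW_NUMBER, can be sketched with Python's built-in `sqlite3`. The `logs` and `users` tables, their columns, and the "keep the latest row" rule are assumptions for the example; window functions need SQLite 3.25 or newer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE logs (user_id INTEGER, event_time TEXT);
    INSERT INTO logs VALUES
        (1, '2025-01-01 09:00:00'), (1, '2025-01-01 18:30:00'),
        (2, '2025-01-01 10:15:00'), (1, '2025-01-02 08:00:00');

    CREATE TABLE users (user_id INTEGER, email TEXT, updated_at TEXT);
    INSERT INTO users VALUES
        (1, 'old@example.com', '2025-01-01'),
        (1, 'new@example.com', '2025-01-05'),
        (2, 'b@example.com',   '2025-01-03');
    """
)

# Daily active users: distinct users per calendar day.
dau = conn.execute(
    """
    SELECT DATE(event_time) AS day, COUNT(DISTINCT user_id) AS dau
    FROM logs
    GROUP BY day
    ORDER BY day
    """
).fetchall()

# Deduplicate: keep only the most recently updated row per user_id.
latest_users = conn.execute(
    """
    SELECT user_id, email FROM (
        SELECT *, ROW_NUMBER() OVER (
            PARTITION BY user_id ORDER BY updated_at DESC
        ) AS rn
        FROM users
    )
    WHERE rn = 1
    ORDER BY user_id
    """
).fetchall()

print(dau)
print(latest_users)
```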

3. ADVANCED (10 Questions): System Design + Scale (Google Level)

→ Design a system like Google Analytics to track user events at scale.

→ Design a pipeline to process YouTube video metrics in near real-time.

→ How would you handle data skew in distributed systems like MapReduce/Spark?

→ Explain how MapReduce works with an example problem.

→ How would you optimize a slow SQL query on a billion-row table?

→ What is the CAP theorem? How does it apply to distributed storage systems?

→ Explain exactly-once vs at-least-once processing.

→ How would you design a real-time dashboard system?

→ How do you manage schema changes in a large production pipeline?

→ How would you store and query petabyte-scale data efficiently?

DataVidhya retweeted

> Disappear for 6–8 months and Crack AI/ML Roles.

Here is how you can do it in 6 steps:

Step 1 (3–4 Weeks) → Programming & Math Foundations

→ Python (NumPy, Pandas)

→ Linear Algebra (vectors, matrices)

→ Probability basics

→ Statistics (mean, variance, distributions)

Step 2 (4–5 Weeks) → Core Machine Learning

→ Linear & Logistic Regression

→ KNN, Decision Trees

→ Model Evaluation (Precision, Recall, F1)

→ Scikit-learn pipelines

Step 3 (4–5 Weeks) → Advanced ML

→ Random Forest, XGBoost

→ Feature Engineering

→ Hyperparameter Tuning

→ Cross Validation

Step 4 (5–6 Weeks) → Deep Learning

→ Neural Networks

→ CNNs

→ RNN/LSTM basics

→ PyTorch / TensorFlow

Step 5 (4–5 Weeks) → Specialization

→ NLP (Transformers, BERT)

→ OR Computer Vision

→ OR Recommendation Systems

Step 6 (4–6 Weeks) → MLOps & Production

→ Model Deployment (FastAPI)

→ Docker

→ Monitoring & Retraining

→ Cloud (AWS/GCP basics)

Execution Layer (parallel throughout)

→ Build 4–6 end-to-end projects

→ Work with real datasets

→ Document properly (GitHub + case studies)

> Data Engineering Interview Questions Frequently Asked by Top Product Companies (Beginner → Advanced)

1. BEGINNER (10 Questions): Foundations + Data System Thinking

→ What is Data Engineering, and how is it different from Data Science and Backend Engineering?

→ Explain ETL vs ELT, and why modern data stacks prefer ELT for scalability.

→ What are structured, semi-structured, and unstructured data, and how are they stored and processed?

→ Difference between batch processing and stream processing with real-world examples.

→ What is a data warehouse vs a data lake, and when would you use each?

→ Explain schema-on-write vs schema-on-read and their impact on data pipelines.

→ What is data partitioning, and how does it improve query performance?

→ What are file formats like CSV, JSON, Parquet, and ORC, and why are columnar formats preferred?

→ What is data replication, and why does it matter for reliability and availability?

→ Explain basic database concepts: indexing, normalization, and transactions in simple terms.

2. INTERMEDIATE (10 Questions): Pipelines, Modeling & Reliability

→ Design a data pipeline for tracking user events from an app to a warehouse.

→ How do you handle late-arriving or missing data in pipelines?

→ Explain Slowly Changing Dimensions (SCD Type 1, Type 2) with use cases.

→ What is data modeling, and what is the difference between star schema and snowflake schema?

→ How do you ensure data quality and validation in pipelines?

→ What is idempotency in data pipelines, and why is it critical for retries?

→ Explain Apache Kafka and how it enables real-time data streaming.

→ How do Airflow or workflow schedulers manage dependencies and failures?

→ What are common causes of pipeline failures, and how do you debug them?

→ How do you design systems to handle high-throughput data ingestion?

3. ADVANCED (10 Questions): Scale, Optimization & Architecture

→ Design a real-time data platform processing millions of events per second.

→ How do you optimize queries in columnar warehouses like BigQuery, Redshift, Snowflake?

→ Explain partition pruning and predicate pushdown in large-scale systems.

→ How do you handle data skew in distributed processing systems like Spark?

→ Explain the CAP theorem and how it applies to distributed data systems.

→ What is exactly-once vs at-least-once processing, and what are the trade-offs in stream processing?

→ Design a system for building real-time dashboards with low latency.

→ How do you manage schema evolution in large production pipelines?

→ What are lakehouse architectures, and how do they combine the benefits of lakes and warehouses?

→ How would you design a petabyte-scale data platform with cost optimization and high performance?

Don’t overthink learning Data Engineering.

→ Build a CSV-to-Database Pipeline: Learn data ingestion and schema design

→ Build a Batch ETL Job: Transform raw data into analytics-ready tables

→ Build a Data Warehouse: Understand fact tables, dimension tables, and star schema

→ Build an API-to-Data-Lake Pipeline: Practice ingestion and storage layers

→ Build a Log Processing Pipeline: Parse and structure event data

→ Build a Real-Time Stream Processor: Understand event streams and consumers

→ Build a Data Quality Checker: Detect nulls, duplicates, and schema drift

→ Build a Scheduled Data Pipeline: Learn orchestration and monitoring
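
The data quality checker from the list above is small enough to sketch in a few lines. This version assumes rows arrive as Python dicts and treats `None`, an empty string, or a missing column as a null; the function name `check_quality` and the sample rows are hypothetical.

```python
def check_quality(rows, expected_columns, key):
    """Report null counts, duplicate keys, and schema drift for a list of dicts."""
    nulls = {col: 0 for col in expected_columns}
    seen = set()
    duplicates = []
    drift = set()
    for row in rows:
        # Columns that are extra or missing compared with the expected schema.
        drift |= set(row) ^ set(expected_columns)
        for col in expected_columns:
            if row.get(col) in (None, ""):
                nulls[col] += 1
        value = row.get(key)
        if value in seen:
            duplicates.append(value)
        seen.add(value)
    return {"nulls": nulls, "duplicates": duplicates, "schema_drift": sorted(drift)}


rows = [
    {"id": 1, "name": "a"},
    {"id": 1, "name": ""},                  # duplicate id, empty name
    {"id": 2, "name": "c", "extra": True},  # unexpected column
    {"id": 3},                              # missing column
]

report = check_quality(rows, expected_columns=["id", "name"], key="id")
print(report)
```

A natural next step is to fail the pipeline run when any of the three report fields is non-empty, rather than only printing the report.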

DataVidhya retweeted

We are cooking something amazing at @thedatavidhya

This is going to be your single place to master DE
