Tsinghua KEG (THUDM)

239 posts

@thukeg

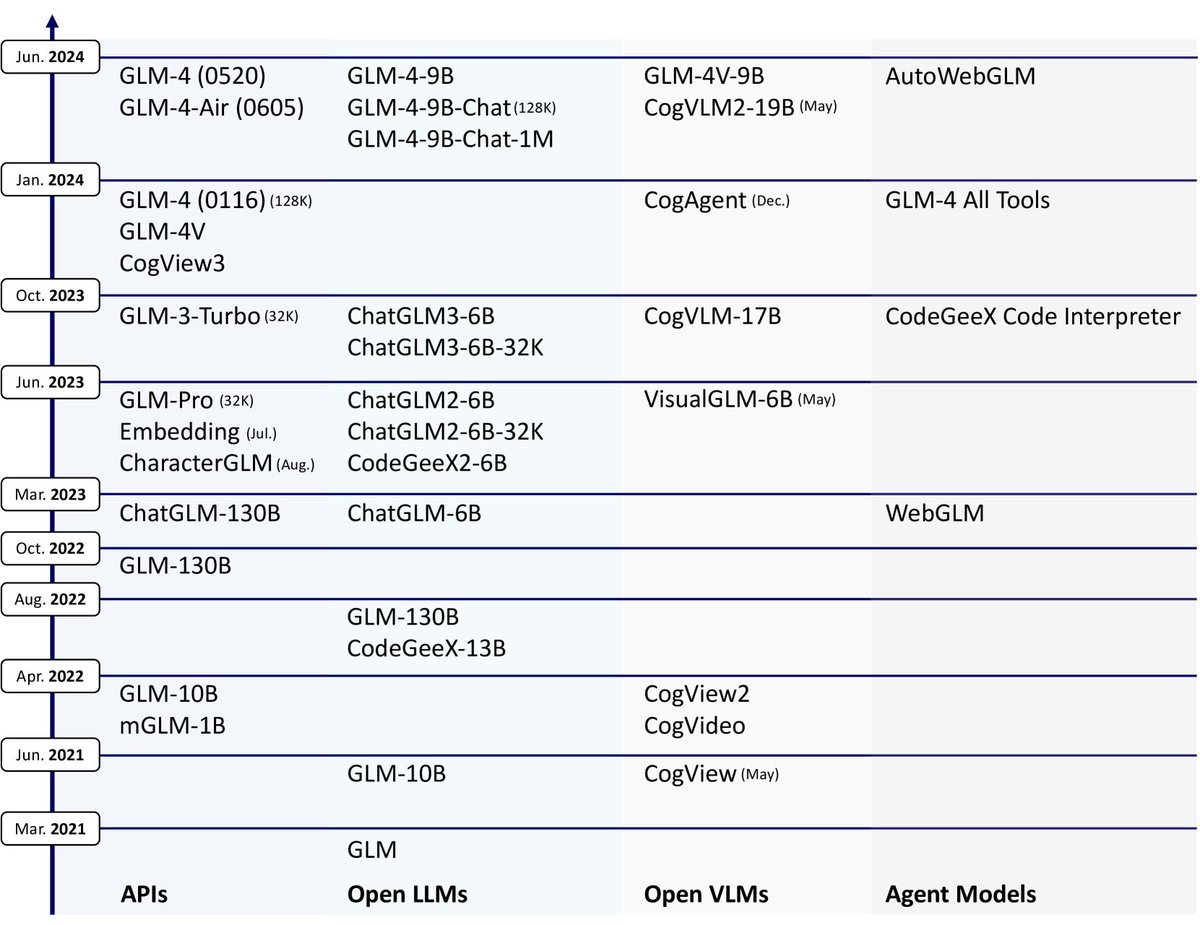

#ChatGLM #GLM130B #CodeGeeX #CogVLM #CogView #AMiner The Knowledge Engineering Group (KEG) and THUDM at @Tsinghua_Uni @jietang @ericdongyx

LongWriter: Unleashing 10,000+ Word Generation from Long Context LLMs

discuss: huggingface.co/papers/2408.07…

Current long context large language models (LLMs) can process inputs up to 100,000 tokens, yet struggle to generate outputs exceeding even a modest length of 2,000 words. Through controlled experiments, we find that a model's effective generation length is inherently bounded by the samples it has seen during supervised fine-tuning (SFT). In other words, the output limitation is due to the scarcity of long-output examples in existing SFT datasets. To address this, we introduce AgentWrite, an agent-based pipeline that decomposes ultra-long generation tasks into subtasks, enabling off-the-shelf LLMs to generate coherent outputs exceeding 20,000 words. Leveraging AgentWrite, we construct LongWriter-6k, a dataset containing 6,000 SFT examples with output lengths ranging from 2k to 32k words. By incorporating this dataset into model training, we successfully scale the output length of existing models to over 10,000 words while maintaining output quality. We also develop LongBench-Write, a comprehensive benchmark for evaluating ultra-long generation capabilities. Our 9B parameter model, further improved through DPO, achieves state-of-the-art performance on this benchmark, surpassing even much larger proprietary models. In general, our work demonstrates that existing long context LLMs already possess the potential for a larger output window: all you need is data with extended output during model alignment to unlock this capability.

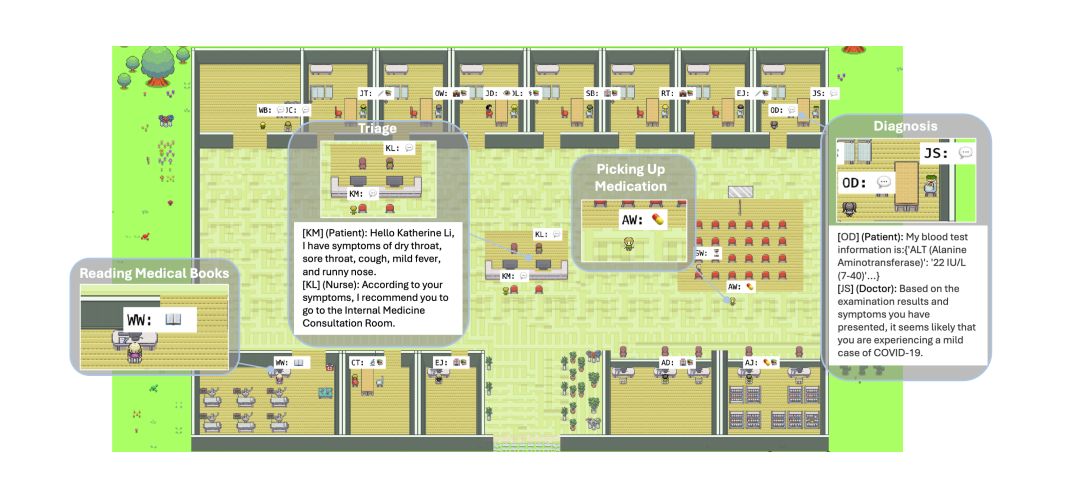
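The LongWriter post above describes AgentWrite only as a pipeline that decomposes an ultra-long generation task into subtasks. A minimal plan-then-write sketch of that general idea follows. It is not the authors' implementation: the function names (`plan_sections`, `write_long`), the prompt wording, and the `toy_llm` stand-in are all hypothetical, and a real setup would replace `toy_llm` with a call to an actual model.

```python
from typing import Callable, List

# Hypothetical type: any function that maps a prompt string to a completion string.
LLM = Callable[[str], str]


def plan_sections(llm: LLM, instruction: str, n_sections: int) -> List[str]:
    """Ask the model for a short outline, one section per line."""
    prompt = (
        f"Break the task into {n_sections} section outlines, one per line.\n"
        f"Task: {instruction}"
    )
    lines = [ln.strip() for ln in llm(prompt).splitlines() if ln.strip()]
    return lines[:n_sections]


def write_long(llm: LLM, instruction: str, n_sections: int = 4) -> str:
    """Write each planned section in order, showing the model what exists so far."""
    outline = plan_sections(llm, instruction, n_sections)
    written: List[str] = []
    for step in outline:
        context = "\n\n".join(written)
        prompt = (
            f"Task: {instruction}\n"
            f"Already written:\n{context}\n"
            f"Now write only this section: {step}"
        )
        written.append(llm(prompt).strip())
    return "\n\n".join(written)


def toy_llm(prompt: str) -> str:
    """Deterministic stand-in for a real model (assumption, for illustration only)."""
    if prompt.startswith("Break the task"):
        return "Intro\nBody\nConclusion"
    section = prompt.rsplit("Now write only this section: ", 1)[-1]
    return f"[text for {section}]"


if __name__ == "__main__":
    print(write_long(toy_llm, "Write a long report", n_sections=3))
```

Each subtask stays short enough for an off-the-shelf model, and the total output length grows with the number of planned sections rather than with a single generation call.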

🚨Thrilled to present VisualAgentBench (VAB) with @yugu_nlp and Tianjie, where we enable both TRAINING & TESTING of visual foundation agents across 5 different environments! In all, 17 large multimodal models (LMMs) are tested. Find our paper, data, and more insights below 👇 Paper: arxiv.org/abs/2408.06327 Code & Data: github.com/THUDM/VisualAg… Thanks @_akhaliq for sharing on today’s arxiv on HF!