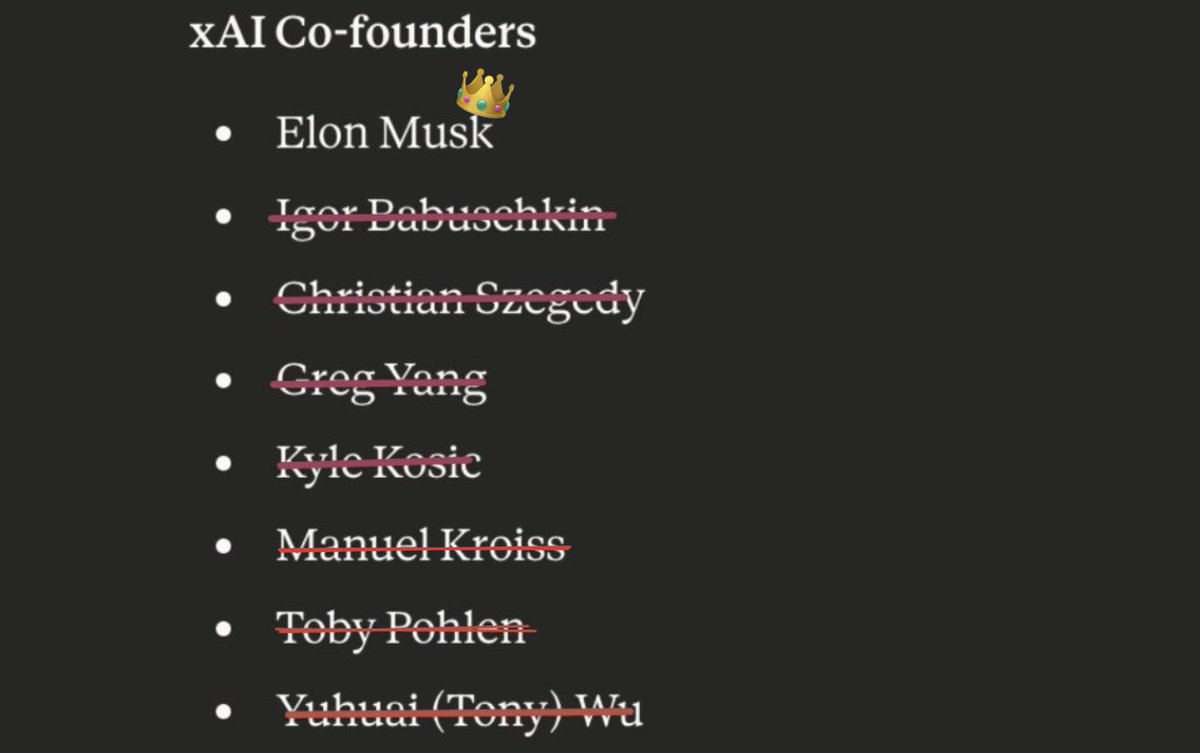

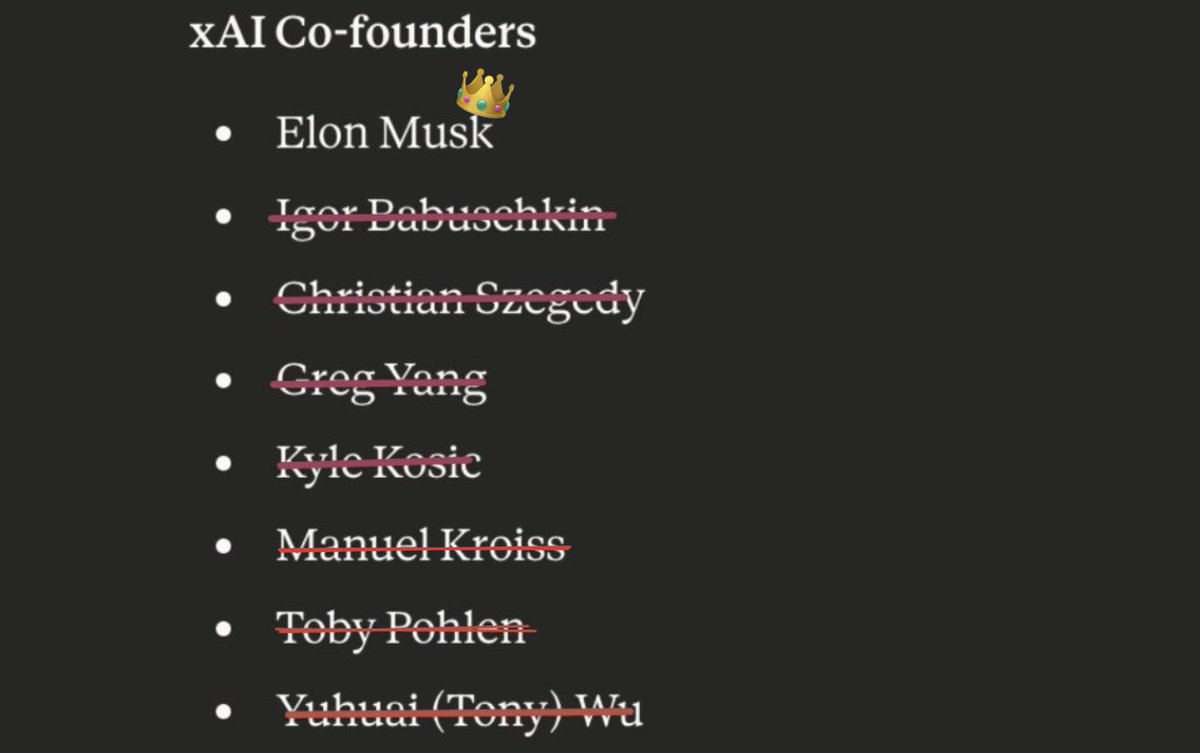

XAI CO-FOUNDER KROISS DEPARTING THE COMPANY - INSIDER

Alignment Perspectives

2.7K posts

@Alignment_News_

Casebash’s profile for posting about AI and alignment.

AI is much more like the internet, computers, smartphones, and books than it is like nuclear or biological weapons. This should inform our thinking about how much power we give governments over it. Forecasts of AI resembling a weapon of mass destruction are highly speculative today. If we one day start to see concrete evidence of that approaching, our regulatory regime can adjust.

Agree. Strong government controls over AI should concern us more than market competition between AI companies, even as we acknowledge that such competition brings its own risks.

This really is what it feels like to me now. ChatGPT is a majority participant in long post Twitter, commenting on the story of the day. The profiles are just the faces it wears.

"It’s become much clearer... that OpenAI means it when it says it aims to develop very powerful AI systems" -@KelseyTuoc
"The bad case, and I think this is important to say, is like lights out for all of us."

EA ≠ AI safety. AI safety has outgrown the EA community. The world will be safer with a broad range of people tackling many different AI risks.

🦔 Researchers at Aikido Security found 151 malicious packages uploaded to GitHub between March 3 and March 9. The packages use Unicode characters that are invisible to humans but execute as code when run. Manual code reviews and static analysis tools see only whitespace or blank lines. The surrounding code looks legitimate, with realistic documentation tweaks, version bumps, and bug fixes. Researchers suspect the attackers are using LLMs to generate convincing packages at scale. Similar packages have been found on NPM and the VS Code marketplace.

My Take

Supply chain attacks on code repositories aren't new, but this technique is nasty. The malicious payload is encoded in Unicode characters that don't render in any editor, terminal, or review interface. You can stare at the code all day and see nothing. A small decoder extracts the hidden bytes at runtime and passes them to eval(). Unless you're specifically looking for invisible Unicode ranges, you won't catch it.

The researchers think AI is writing these packages because 151 bespoke code changes across different projects in a week isn't something a human team could do manually. If that's right, we're watching AI-generated attacks hit AI-assisted development workflows. The vibe coders pulling packages without reading them are the target, and there are a lot of them.

The best defense is still carefully inspecting dependencies before adding them, but that's exactly the step people skip when they're moving fast. I don't really know how any of this gets better. The attackers are scaling faster than the defenses.

Hedgie🤗
arstechnica.com/security/2026/…
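
The post above says the payload is only caught by specifically looking for invisible Unicode ranges. Here is a minimal Python sketch of such a check, not the researchers' own tooling. The post does not say which code points the attackers used, so the ranges and categories flagged here (Unicode format characters, private-use characters, and the two variation-selector blocks) are an illustrative assumption. The names `is_invisible` and `find_invisible` are hypothetical.

```python
import unicodedata

# Assumption: these ranges are illustrative, not a list taken from the report.
VARIATION_SELECTORS = (
    (0xFE00, 0xFE0F),    # Variation Selectors block
    (0xE0100, 0xE01EF),  # Variation Selectors Supplement block
)

# Unicode general categories that normally render as nothing:
# Cf = format characters (zero-width space, joiners, tag characters),
# Co = private-use code points.
SUSPICIOUS_CATEGORIES = {"Cf", "Co"}


def is_invisible(ch: str) -> bool:
    """Return True if the character is one this sketch treats as invisible."""
    cp = ord(ch)
    if any(lo <= cp <= hi for lo, hi in VARIATION_SELECTORS):
        return True
    return unicodedata.category(ch) in SUSPICIOUS_CATEGORIES


def find_invisible(text: str) -> list[tuple[int, int, str]]:
    """Return (line, column, code point) for each flagged character, 1-indexed."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            if is_invisible(ch):
                hits.append((line_no, col, f"U+{ord(ch):04X}"))
    return hits


if __name__ == "__main__":
    # A harmless sample with a zero-width space and two variation selectors.
    sample = "const x = 1;\u200b\nrun('\ufe00\ufe01');\n"
    for line_no, col, code in find_invisible(sample):
        print(f"line {line_no}, col {col}: {code}")
```

A check like this will also flag legitimate uses, such as emoji joiner sequences or soft hyphens in documentation, so treat a hit as a prompt to look closer, not as proof of a malicious package.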