Juan Manuel Barreto retuiteado

Last quarter I rolled out Microsoft Copilot to 4,000 employees.

$30 per seat per month.

$1.4 million annually.

I called it "digital transformation."

The board loved that phrase.

They approved it in eleven minutes.

No one asked what it would actually do.

Including me.

I told everyone it would "10x productivity."

That's not a real number.

But it sounds like one.

HR asked how we'd measure the 10x.

I said we'd "leverage analytics dashboards."

They stopped asking.

Three months later I checked the usage reports.

47 people had opened it.

12 had used it more than once.

One of them was me.

I used it to summarize an email I could have read in 30 seconds.

It took 45 seconds.

Plus the time it took to fix the hallucinations.

But I called it a "pilot success."

Success means the pilot didn't visibly fail.

The CFO asked about ROI.

I showed him a graph.

The graph went up and to the right.

It measured "AI enablement."

I made that metric up.

He nodded approvingly.

We're "AI-enabled" now.

I don't know what that means.

But it's in our investor deck.

A senior developer asked why we didn't use Claude or ChatGPT.

I said we needed "enterprise-grade security."

He asked what that meant.

I said "compliance."

He asked which compliance.

I said "all of them."

He looked skeptical.

I scheduled him for a "career development conversation."

He stopped asking questions.

Microsoft sent a case study team.

They wanted to feature us as a success story.

I told them we "saved 40,000 hours."

I calculated that number by multiplying employees by a number I made up.

They didn't verify it.

They never do.

Now we're on Microsoft's website.

"Global enterprise achieves 40,000 hours of productivity gains with Copilot."

The CEO shared it on LinkedIn.

He got 3,000 likes.

He's never used Copilot.

None of the executives have.

We have an exemption.

"Strategic focus requires minimal digital distraction."

I wrote that policy.

The licenses renew next month.

I'm requesting an expansion.

5,000 more seats.

We haven't used the first 4,000.

But this time we'll "drive adoption."

Adoption means mandatory training.

Training means a 45-minute webinar no one watches.

But completion will be tracked.

Completion is a metric.

Metrics go in dashboards.

Dashboards go in board presentations.

Board presentations get me promoted.

I'll be SVP by Q3.

I still don't know what Copilot does.

But I know what it's for.

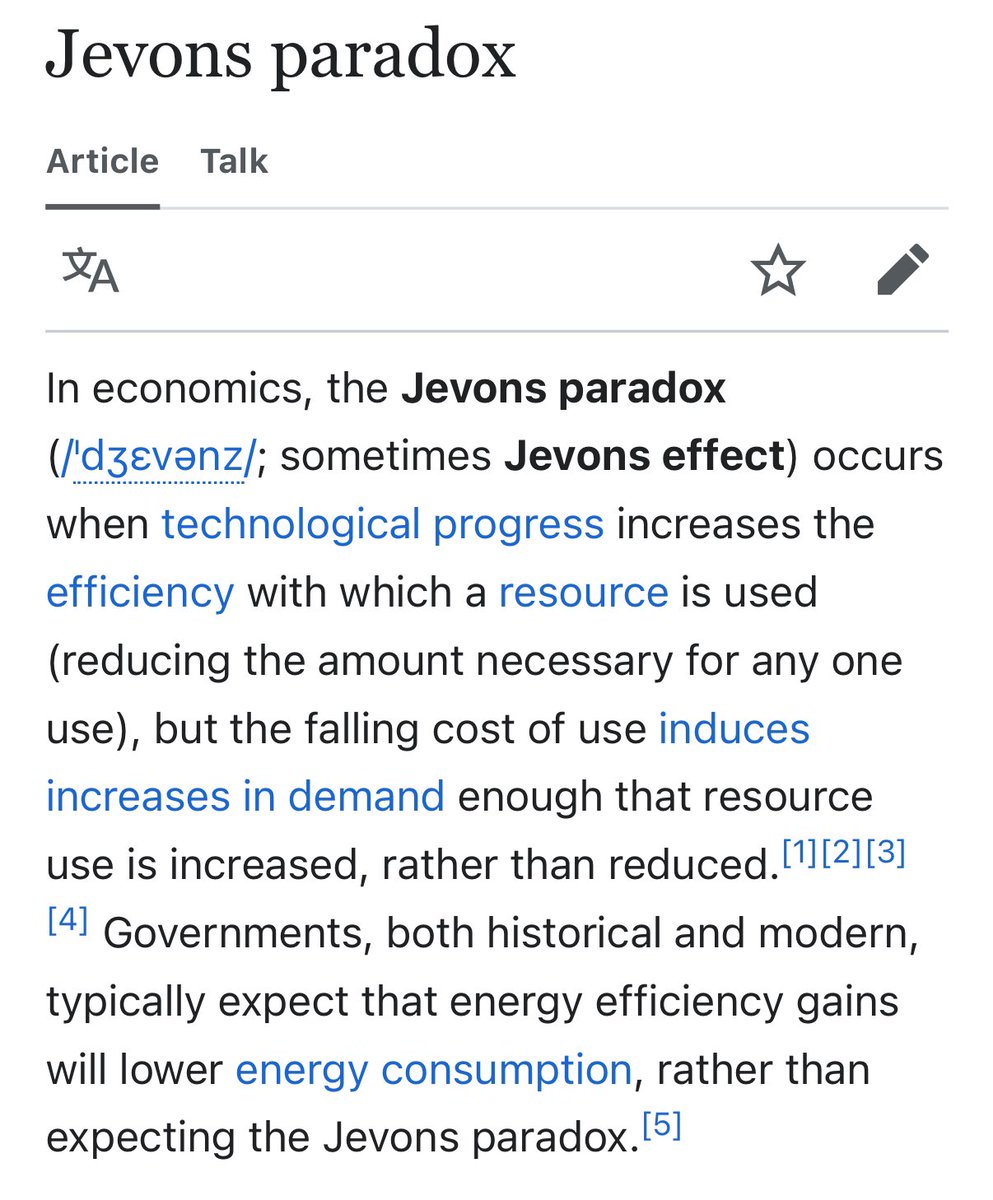

It's for showing we're "investing in AI."

Investment means spending.

Spending means commitment.

Commitment means we're serious about the future.

The future is whatever I say it is.

As long as the graph goes up and to the right.

English