the world looks like this and we’re expected to sit in a room for 8 hours a day staring at a screen

Aakash Gupta

@aakashgupta

Companies go through phases of exploration and phases of refocus; both are critical. But when new bets start to work, like we're seeing now with Codex, it's very important to double down on them and avoid distractions. Really glad we're seizing this moment.

You should be using Claude Code to run your entire work day. Here's exactly how, from @thevibePM, field CPO at $2.6B @pendoio: 1:47 - The one command that plans his whole day; 21:42 - His CLAUDE.md setup; 33:42 - Skills vs MCP vs Hooks; 40:11 - Why he left Cursor for the terminal

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

Introducing a new, upgraded vibe coding experience in @GoogleAIStudio. You can now turn any idea into functional, production-ready apps. Build multiplayer games, collaborative tools, apps with secure log-ins and more.

You need to have started using OpenClaw yesterday. Here's the web's easiest setup guide + 5 killer use cases: 38:06 - 1. Live knowledge bot 47:47 - 2. Automated standups 54:46 - 3. Push-based comp intel 1:13:26 - 4. VOC reporting 1:24:30 - 5. Auto bug routing

Composer 2 is now available in Cursor.

We’re rolling out summaries for Articles now. Just tap the Summarize button if you want to know if it’s worth your time to read it (or if your attention span is 12 seconds).

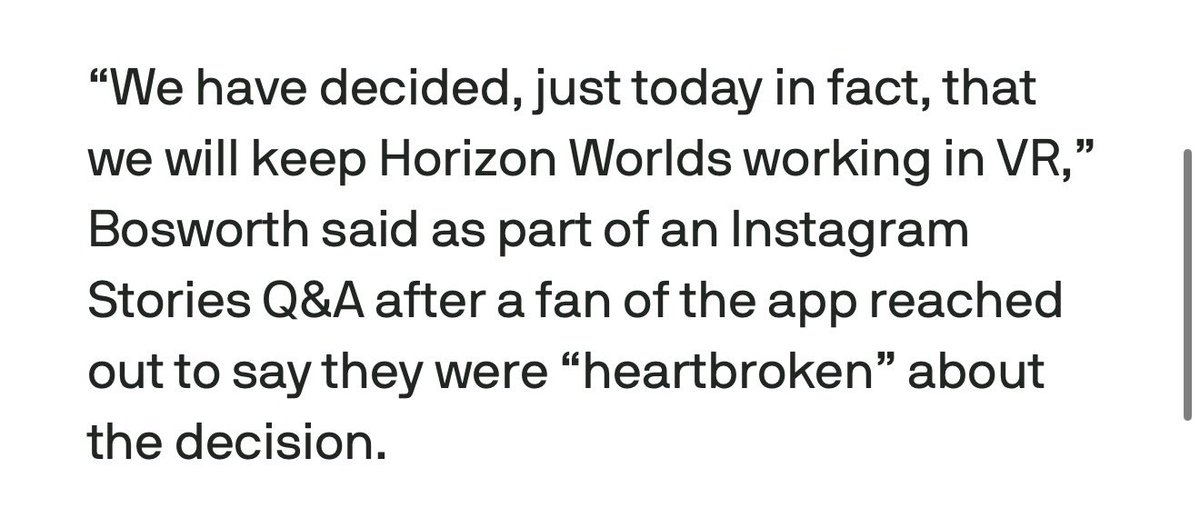

Meta decides not to shut down Horizon Worlds on VR after all techcrunch.com/2026/03/19/met…