Indico Data Labs

83 posts


@IndicoDataLabs

AI for Unstructured Data

Boston, MA Joined May 2022

1 Following 10 Followers

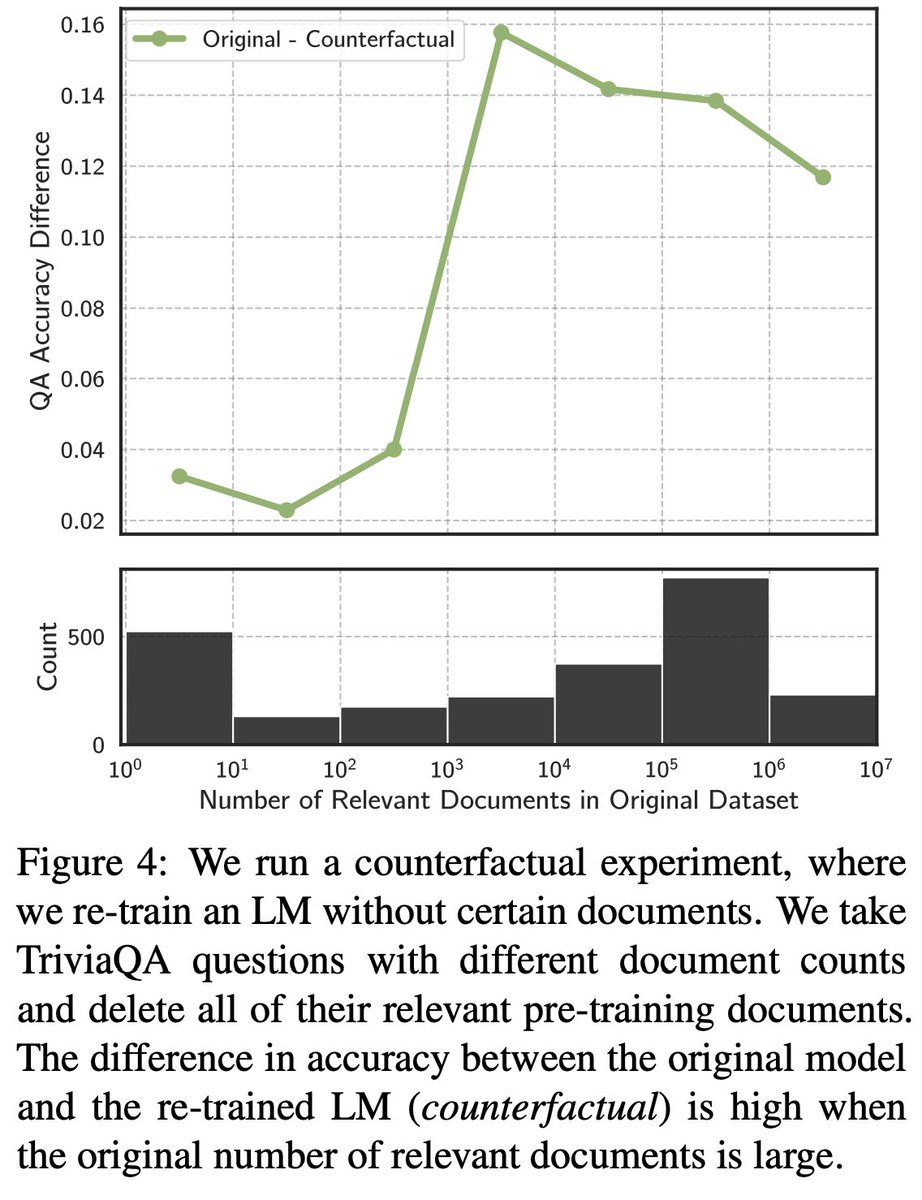

Large Language Models Struggle to Learn Long-Tail Knowledge

by: Nikhil Kandpal, Haikang Deng, Adam Roberts, Eric Wallace, and Colin Raffel

arxiv.org/abs/2211.08411


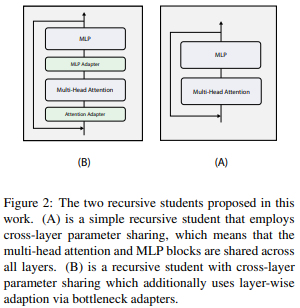

MiniALBERT: Model Distillation via Parameter-Efficient Recursive Transformers

by Nouriborji et al.

Proposes a method for distilling BERT-style transformers into ALBERT-style recursive transformers.

arxiv.org/abs/2210.06425


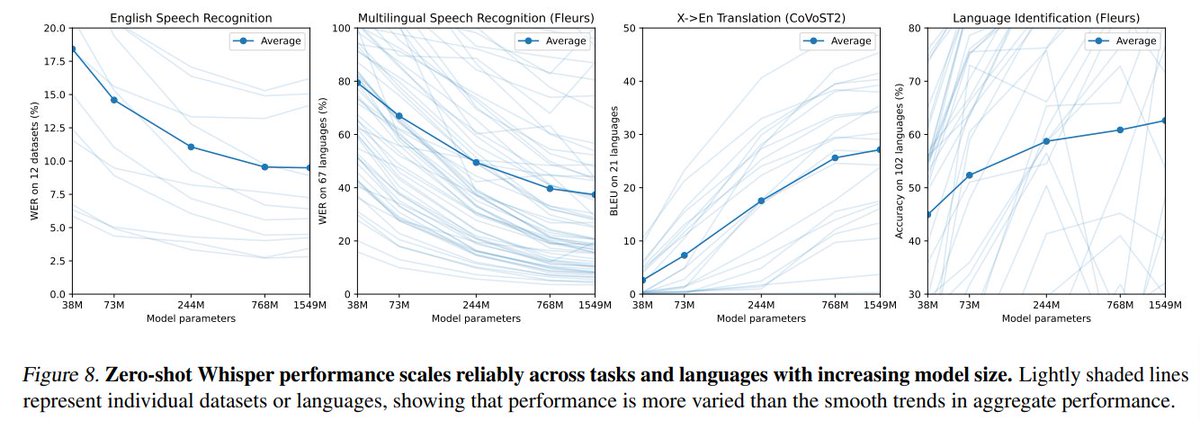

Overall, this was a refreshing work from OpenAI that shines a light on often underappreciated aspects of ML -- dataset curation and generalization behavior! Models and code are openly available at: github.com/openai/whisper


This week we're highlighting the open-source Whisper speech recognition model outlined in "Robust Speech Recognition via Large-Scale Weak Supervision" by former Indico founder @AlecRad, @_jongwook_kim, @txhf, @gdb, @mcleavey and @ilyasut.

openai.com/blog/whisper/
