Video tutorial collection on registration and purchasing. Follow: @_Sicongwang @Si6399 @Si6366 Telegram group: t.me/JNBSi6399 Gate.io: gateio24.com/referral/invit… OKX⭐: okx.com/join/71550227 Binance: accounts.suitechsui.online/register?ref=R… Contract address (CA): 5RAdU74DWZEreD7n2V9UAsrx47k8za6QSB3s5KFMpump

KIMI-乔任梁💬🌟🔥

65 posts

@KimiMeme_VIP

Website: https://t.co/NLFS4vJGno Management delegated to: @jianjun43860305 and @Aileen971107


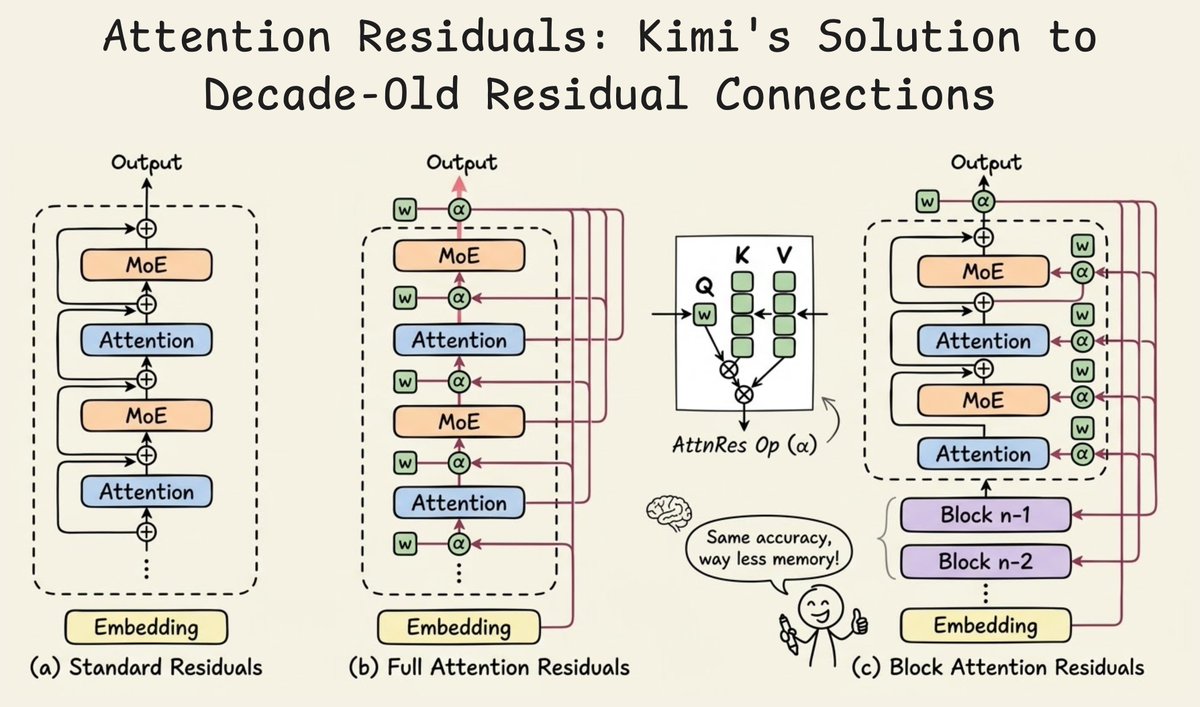

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗 Full report: github.com/MoonshotAI/Att…
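To make the contrast in the post concrete, here is a minimal pure-Python sketch of fixed, uniform accumulation versus attention over preceding layer outputs. It is an illustration under stated assumptions, not the formulation from the report: the function names, the scaled dot-product scoring, the `query` vector standing in for a learned parameter, and the choice to compress a block by summing its outputs are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def uniform_residual(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(v[i] for v in layer_outputs) for i in range(dim)]


def attention_residual(layer_outputs, query):
    """Softmax-weighted mix of earlier outputs.

    Assumption: `query` stands in for a learned vector, and the earlier
    outputs act as both keys and values, so the weights depend on the input.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, v) / scale for v in layer_outputs])
    dim = len(layer_outputs[0])
    mixed = [
        sum(w * v[i] for w, v in zip(weights, layer_outputs))
        for i in range(dim)
    ]
    return mixed, weights


def block_summaries(layer_outputs, block_size):
    """Assumed block compression: sum each block of outputs into one vector,
    so attention runs over far fewer entries than there are layers."""
    blocks = [
        layer_outputs[i:i + block_size]
        for i in range(0, len(layer_outputs), block_size)
    ]
    return [uniform_residual(b) for b in blocks]


if __name__ == "__main__":
    outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy per-layer outputs
    print(uniform_residual(outputs))
    print(attention_residual(outputs, [10.0, 0.0]))
    print(attention_residual(block_summaries(outputs, 2), [10.0, 0.0]))
```

With an all-zero `query` the weights come out uniform, so the mix reduces to the mean of the earlier outputs. A non-zero `query` shifts weight toward the outputs it aligns with, which is the selective retrieval the post describes.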

@_avichawla Impressive work from Kimi

On this day in 2008, Tesla began regular production of its first car, the Tesla Roadster. Also, a reminder that there's one Tesla Roadster in an elliptical heliocentric orbit that crosses the orbit of Mars.
