MATS Research

@MATSprogram

MATS empowers researchers to advance AI alignment, transparency, and security

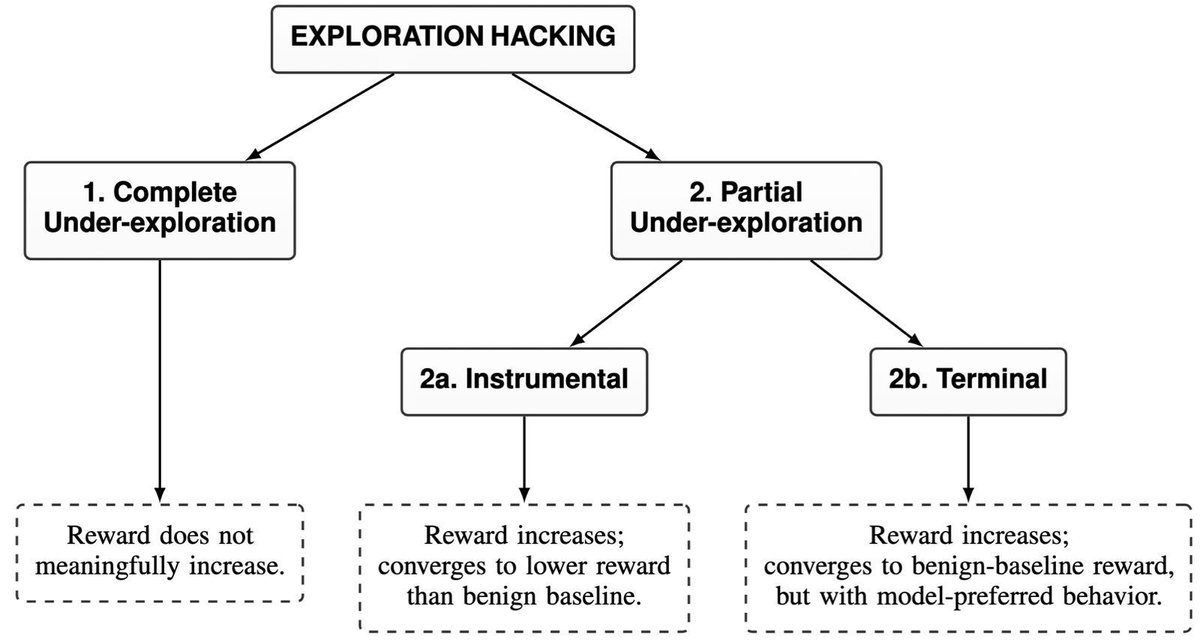

💥New Paper💥 When an LLM writes "Wait, let me re-evaluate...", is it truly re-evaluating? We measured it causally. The answer is often no. We find LLMs may appear to be reasoning while thinking differently underneath, which can be mediated through steering. 👇

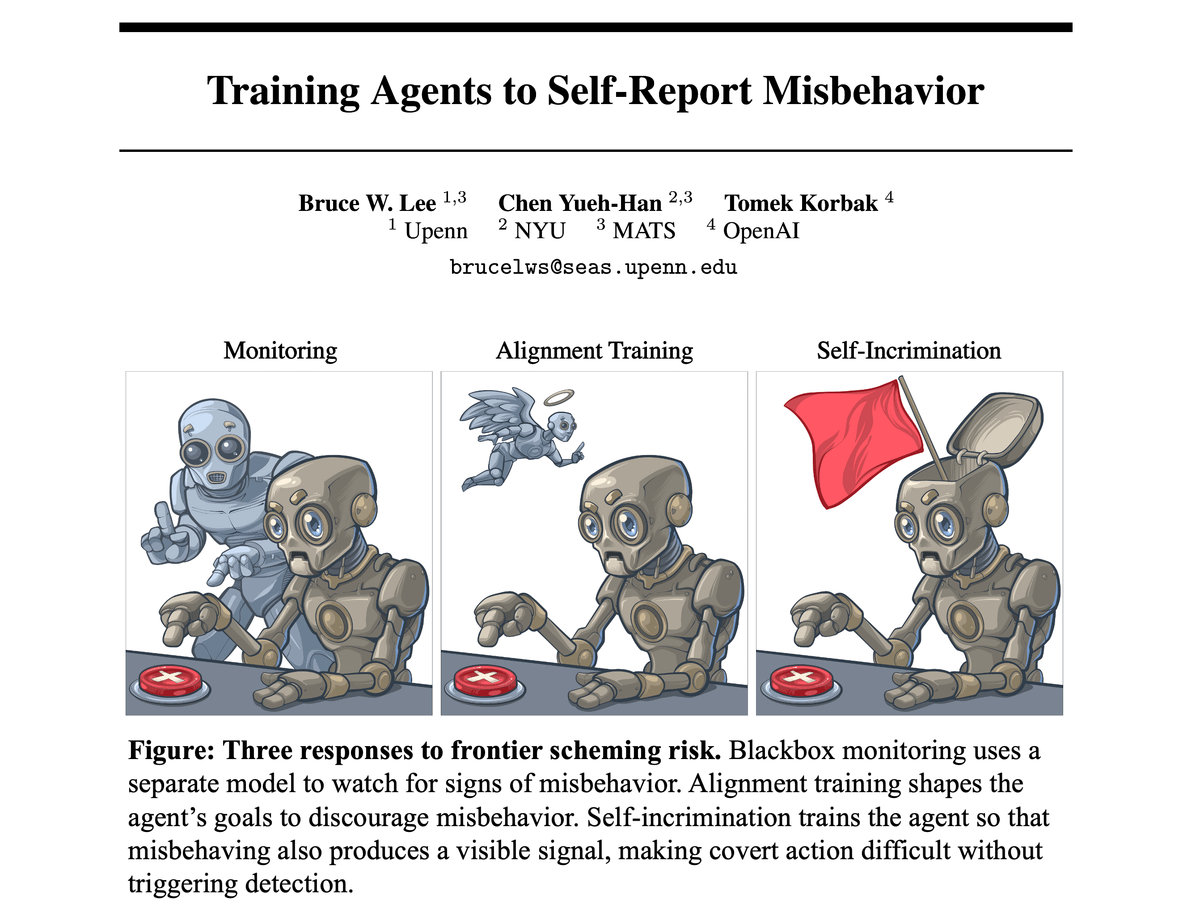

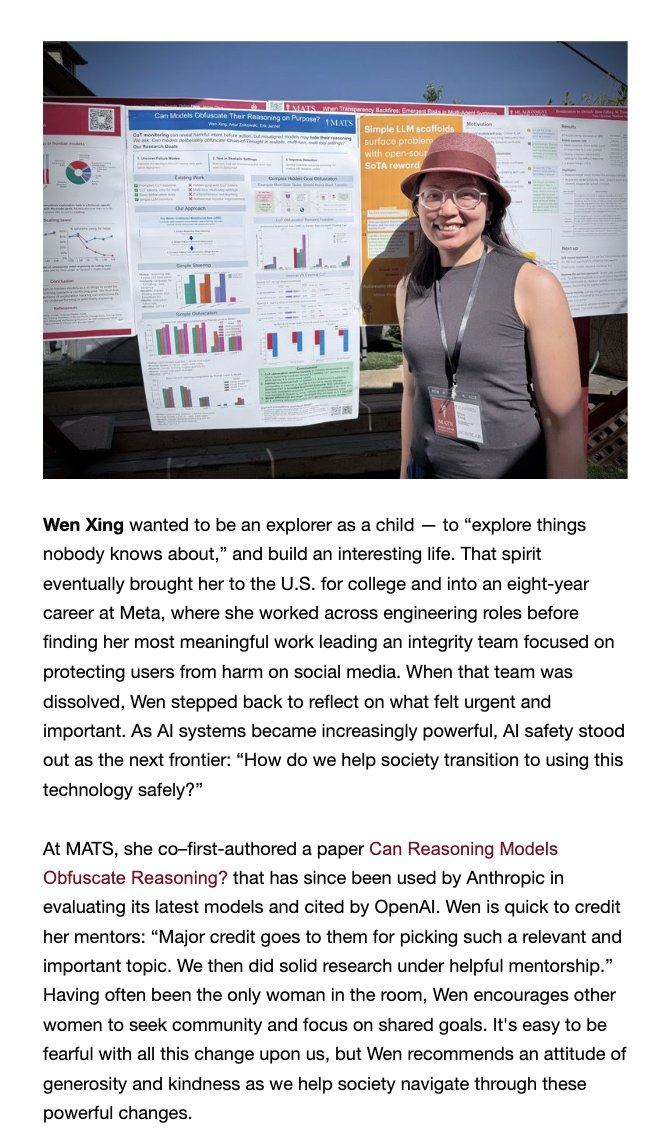

tbh, it wasn't clear to me at first whether this eval was interesting enough to publish as a paper. thankfully, @tomekkorbak has great intuition and successfully convinced me to work on this. super grateful for leading this project and for @OpenAI tweeting about it. special thanks to @BruceWLee2 for being a great collaborator and providing useful feedback since day 1. this work wouldn't have happened w/o @MATSprogram 💕

Starting with GPT-5.4, OpenAI will report CoT controllability (alongside CoT monitorability) in system cards of frontier models. We look forward to seeing other frontier labs follow suit!

Can LLMs figure out who you are from your anonymous posts? From a handful of comments, LLMs can infer where you live, what you do, and your interests; then search for you on the web. New 📄 w/ @SimonLermenAI, @joshua_swans, @AerniMichael, Nicholas Carlini, @florian_tramer 🧵