UW RAIVN Lab

@RAIVNLab

The computer vision and reasoning lab in the Allen School at the University of Washington, led by Ali Farhadi and Ranjay Krishna.

This (& graduation) happened last week & I am a (fake) Dr. now! I owe it all to my advisors, mentors, collaborators, friends, and family! -- I wrote a 6-page acknowledgment in my thesis without realizing😅 Thanks for all the fish @uwcse, @RAIVNLab, @uw_wail & @GoogleDeepMind🪆

Excited to introduce GPT-4o. Language, vision, and sound -- all together and all in real time. This thing has been so much fun to work on. It's been even more fun to play with -- with moments of magic where things feel totally fluid and I forget I'm video chatting with an AI.

Embodied-AI 🤖 models employ general-purpose vision backbones such as CLIP to encode the observation. How can we have a more task-driven visual perception for embodied-AI? We introduce a parameter-efficient approach that selectively filters visual representations for Embodied-AI tasks. Project page: embodied-codebook.github.io 🧵👇

Announcing MatFormer - a nested🪆(Matryoshka) Transformer that offers elasticity across deployment constraints. MatFormer is an architecture that lets us use 100s of accurate smaller models that we never actually trained for! arxiv.org/abs/2310.07707 1/9

🚨Is it possible to devise an intuitive approach for crowdsourcing training data for robots without requiring a physical robot🤖? Can we democratize robot learning for all?🧑🤝🧑 Check out our latest #CoRL2023 paper: AR2-D2: Training a Robot Without a Robot

We're organizing a tutorial on Prompting in Vision at #CVPR2023 w/ @liuziwei7 @phillip_isola @hyojinbahng @lschmidt3 @sarahmhpratt @denny_zhou. Please visit our website at prompting-in-vision.github.io to learn more about this event.

Have vision-language models achieved human-level compositional reasoning? Our research suggests: not quite yet. We’re excited to present CREPE – a large-scale Compositional REPresentation Evaluation benchmark for vision-language models – as a 🌟highlight🌟at #CVPR2023. 🧵1/7

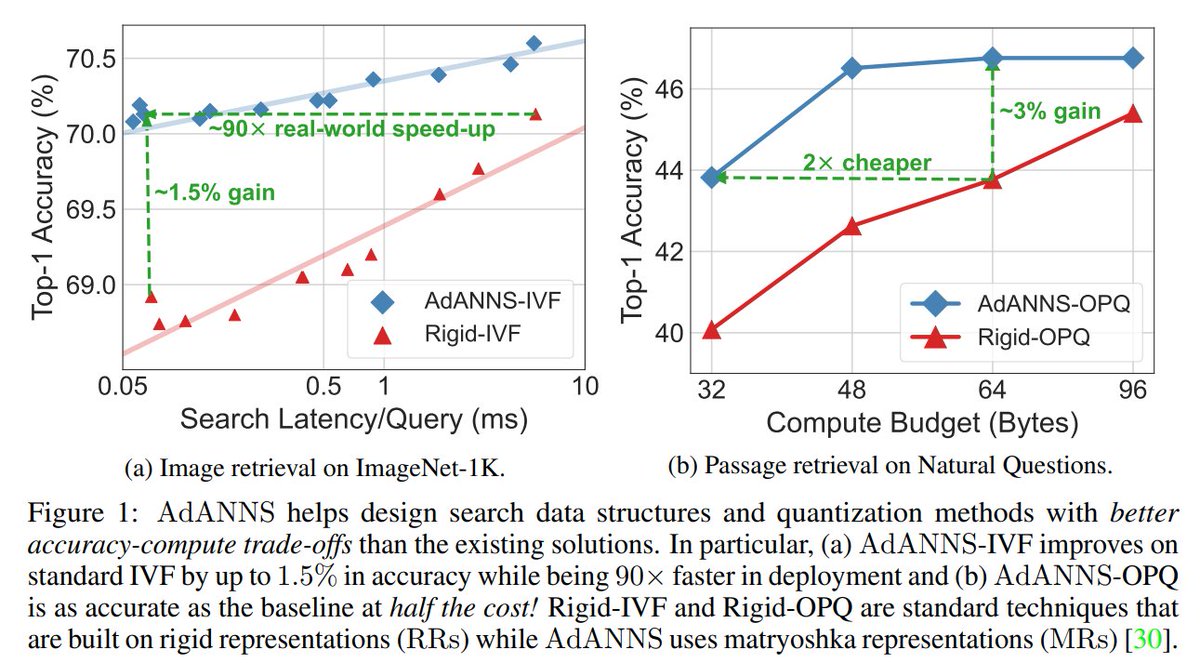

Introducing💃AdANNS: A Framework for Adaptive Semantic Search🕺 TL;DR: Up to 90× faster nearest neighbor retrieval and 2× lower memory cost for web-scale search. Applies to vector search at scale & improves all "retrieval" augmented models! arxiv.org/abs/2305.19435 [1/8]

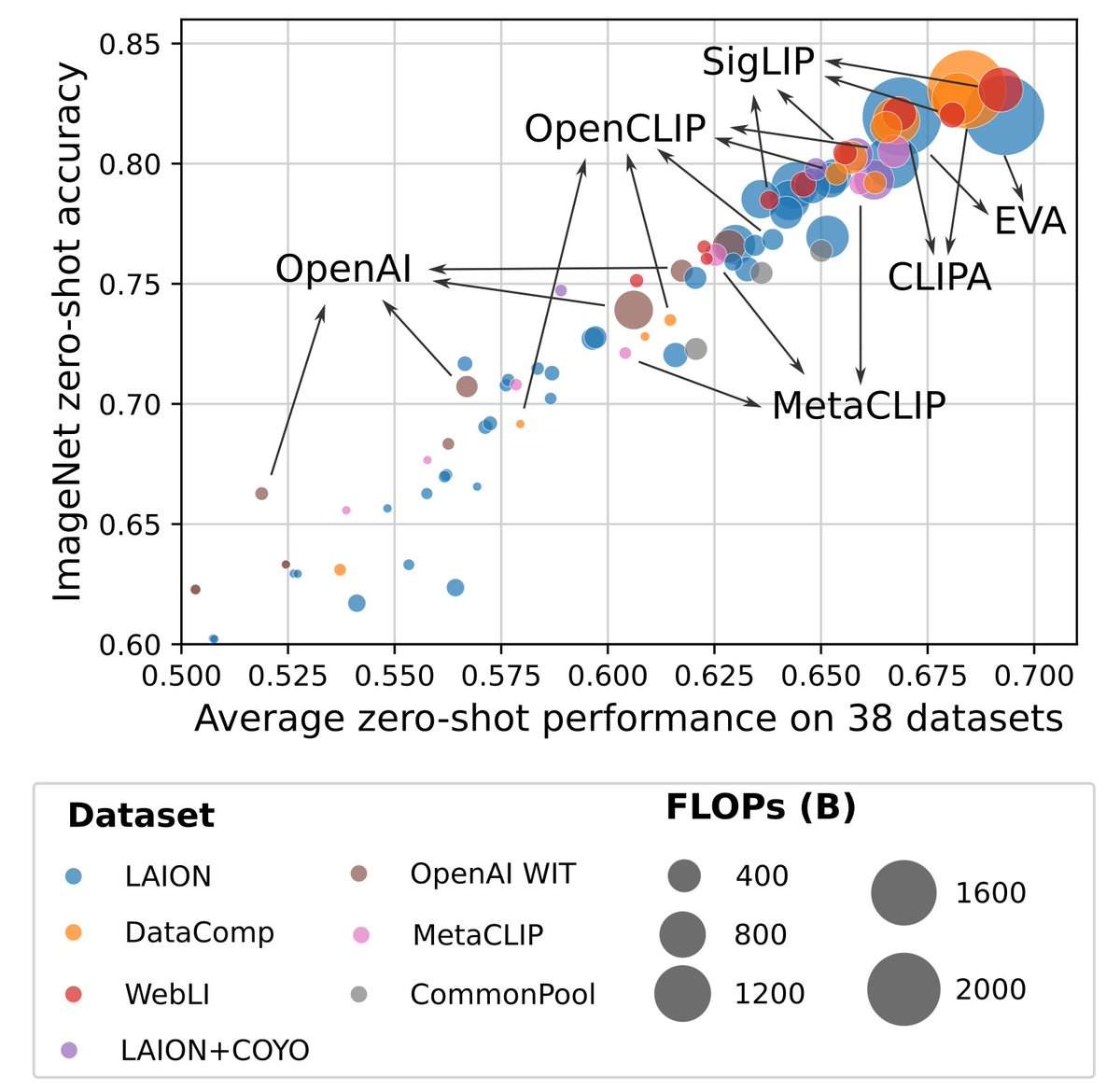

Introducing DataComp, a new benchmark for multimodal datasets! We release 12.8B image-text pairs, 300+ experiments and a 1.4B subset that outcompetes compute-matched CLIP runs from OpenAI & LAION 📜 arxiv.org/abs/2304.14108 🖥️ github.com/mlfoundations/… 🌐 datacomp.ai