Benjamin Warner

@benjamin_warner

Research @SophontAI. Previously answerdotai. Vaccines save lives.

BrowseComp-Plus, perhaps the hardest popular deep research task, is now solved at nearly 90%... and all it took was a 150M model ✨ Thrilled to announce that Reason-ModernColBERT did it again and outperformed all models (including models 54× bigger) on all metrics.

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗 Full report: github.com/MoonshotAI/Att…
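
To make the contrast in the post above concrete, here is a minimal toy sketch in plain Python. It is not the method from the report: the function names, the fixed `query` vector (standing in for a learned per-layer parameter), and the toy layer outputs are all assumptions made for illustration. It only compares uniform summation of earlier layer outputs with a softmax-weighted mix of them.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def uniform_residual(outputs):
    # Standard residual stream: every earlier output is added with weight 1.
    dim = len(outputs[0])
    return [sum(o[j] for o in outputs) for j in range(dim)]

def attention_residual(outputs, query):
    # Hypothetical sketch: score each earlier output against a query,
    # then return the softmax-weighted mix and the weights themselves.
    dim = len(query)
    scores = [dot(query, o) / math.sqrt(dim) for o in outputs]
    weights = softmax(scores)
    mixed = [sum(w * o[j] for w, o in zip(weights, outputs)) for j in range(dim)]
    return mixed, weights

# Toy outputs of three earlier layers (made-up values).
outputs = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
query = [1.0, 0.0]  # assumption: stands in for a learned per-layer query

summed = uniform_residual(outputs)
mixed, weights = attention_residual(outputs, query)
```

In this toy setup the uniform sum keeps growing as layers are added, while the weighted mix is a convex combination of earlier outputs and so stays within their range. The weights depend on the layer outputs themselves, which is one simple way to read "input-dependent".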

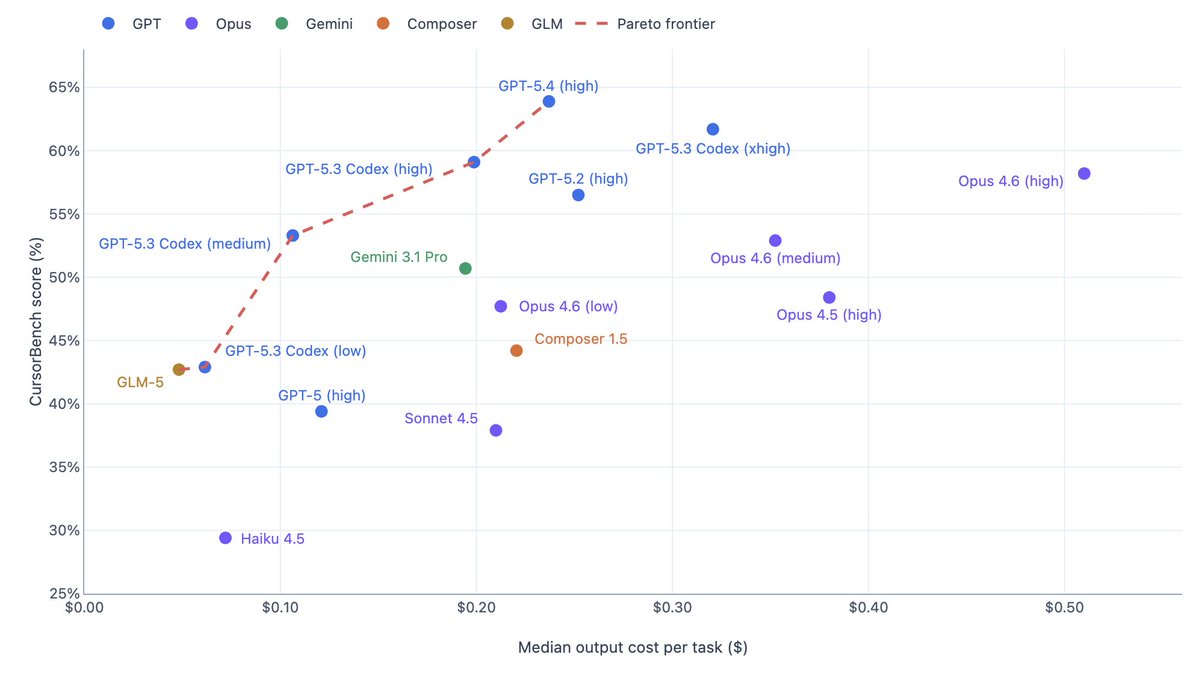

Note: As an experimental version, GLM-5-Turbo is currently closed-source. All capabilities and findings will be incorporated into our next open-source model release.

Codex seriously sucks at frontend. There’s something seriously wrong with ChatGPT 5.4 on the frontend part.

Introducing Mixedbread Wholembed v3, our new SOTA retrieval model across all modalities and 100+ languages. Wholembed v3 brings best-in-class search to text, audio, images, PDFs, videos... You can now get the best retrieval performance on your data, no matter its format.

This is how a gorillion normies had first contact with AI. Yes indeed, no wonder many people think the whole concept is worthless slop.

If we assume that annualized revenue refers to four weeks of revenue multiplied by twelve, this would mean that Anthropic made $1.16 billion - or more than 23% of its LIFETIME REVENUE - in the period leading up to Feb 12 2026. That doesn’t seem likely. anthropic.com/news/anthropic…
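
The arithmetic behind that post can be checked in a few lines. This sketch uses only the two figures stated in the post ($1.16 billion and 23%) and the post's own assumption that annualized revenue means four weeks of revenue multiplied by twelve; the variable names are illustrative.

```python
# Figures taken from the post; everything else is derived from them.
four_week_revenue = 1.16e9   # dollars, as stated in the post
lifetime_share = 0.23        # the post says "more than 23%", so this is a lower bound

# Annualized figure implied by the post's assumption (four weeks x 12).
implied_annualized = four_week_revenue * 12

# If the share is more than 23%, lifetime revenue is at most this amount.
implied_lifetime_max = four_week_revenue / lifetime_share

print(f"Implied annualized revenue: ${implied_annualized / 1e9:.2f}B")
print(f"Implied lifetime revenue (upper bound): ${implied_lifetime_max / 1e9:.2f}B")
```

Under those assumptions the implied annualized figure is about $13.9 billion, and lifetime revenue would have to be roughly $5 billion or less for the 23% share to hold.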

FA2 is faster than FA4 on RTX5090/Pro 6000 GPUs

Nemotron-CLIMBMix is now becoming the default recipe in nanochat speedrun. During the Time-to-GPT-2 Leaderboard experiments started by @karpathy, the community revisited CLIMBMix and found that it delivers by far the single biggest improvement to nanochat’s GPT-2 speedrun time. It’s incredibly rewarding to see the idea validated and adopted by the community. Huge thanks to everyone who experimented with it and pushed it forward 🚀 github.com/karpathy/nanoc…

🚀 Today we’re releasing FlashOptim: better implementations of Adam, SGD, etc, that compute the same updates but save tons of memory. You can use it right now via `pip install flashoptim`. 🚀 arxiv.org/abs/2602.23349 A bunch of cool ideas make this possible: [1/n]

Congratulations to our #NVIDIAGTC Golden Ticket winners 🎉: @alexisgallagher Brandon I. Hans B. Julia S. Lluís D. Marco D. Tarique S. You’re headed to GTC! We’ll be reaching out soon with next steps to claim your prize. Thank you to our partners for collaborating with NVIDIA on the 2026 Golden Ticket Developer Contest: @huggingface / @pollenrobotics, @ollama, @ethroboticsclub, and @googlecloud. Stay tuned for one more winner reveal 👀