Devlin Dunsmore

25.4K posts

@devlind

Founding engineer of the AI @ Work group at AWS, changing how people work. Opinions here are my own. he/him

Incoming CPU shortage is real. As clouds get tapped of their supply, agent co’s will increasingly explore on-device compute (everyone already has a CPU in pocket/on desk!). With foreground agents rising, we expect this graph to get even steeper this year.

This chart shows the number of paid services created on @render each week. We're doing alright.

SOUTH BAY IS A PERFECT 11-0 WHEN BRONNY PLAYS 📈 HE IS ON PACE FOR A 50/40/90 SZN 🎯 EVERY TEAMMATE PRAISES HIM 👏 WHAT THE MEDIA WON’T TELL YOU IS BRONNY IS QUICKLY BECOMING ONE OF THE MOST IMPROVED YOUNG PLAYERS IN THE ENTIRE ASSOCIATION 👀

1/ I run 5 Claudes in parallel in my terminal. I number my tabs 1-5, and use system notifications to know when a Claude needs input. code.claude.com/docs/en/termin…

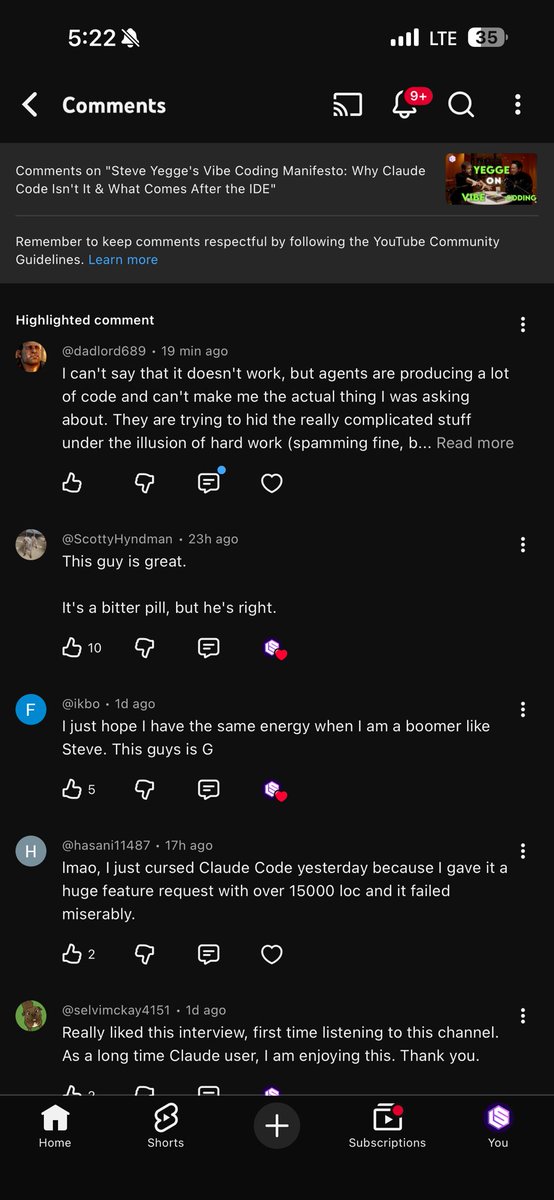

Another banger from the @latentspacepod. Really great convo, @swyx and @Steve_Yegge. Go queue it up, peeps! open.spotify.com/episode/20iTCh…

If Claude is really doing so much of the coding for Anthropic, why haven't they used it to create a fucking ui for Claude Code? It's 2025. Why the fuck am I forced to use a cli for everything as if it were 1995?