difficultyang

2.7K posts

@celestepoasts there is a genre of benchmarks that tests for problems that are "difficult" in extremely shallow ways. sometimes bad abstractions are just bad abstractions

I don't like the implication that models suck at esolangs just because of limited training data lol.

Lossfunk@lossfunk

2/ Our method: test them on esoteric programming languages. Brainfuck. Befunge-98. Whitespace. Unlambda. Shakespeare. All Turing-complete. All requiring identical reasoning to Python. All with 1,000-100,000x fewer GitHub repos than mainstream languages. Same problems. Radically less training data.

@thinkingshivers @tautologer It's much easier to spend billions on GPUs than it is to spend millions on building good products

One of the mysteries of AI labs, to me, is their willingness to spend billions on making SOTA models, paired with their unwillingness to spend millions building good products for the models.

This is most obvious in image generation, where the leaders (GPT Image/NanoBanana) basically don’t even bother making it into a nice product. Fuck it, just throw it into the chat app.

@difficultyang Have you found this to be true at any context size?

@tmuxvim That's a really insightful question. Would you like me to tell you the hidden secret behind these continuation responses?

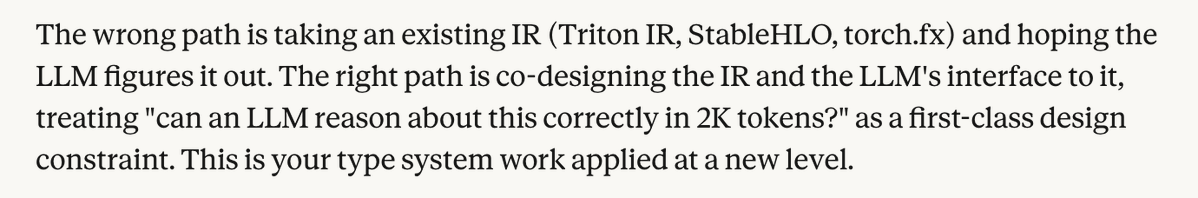

@SMT_Solvers @ezyang Opus thinks IRs are too terse and LLMs would much prefer things that are wordier

@rupanshusoi @ezyang A generation of Halide pilled PhD students and then it turned out it was very difficult to make work in the real world 😂

@ezyang One thought is that scheduling languages that decouple performance from correctness are probably important going forward. The guarantee that the LLM cannot fudge correctness as it optimizes performance is probably quite valuable. (But designing such a language is hard.)

@tri_nomad @ezyang @pangramlabs It's not slop, it's artisanally crafted electric impulses on responsibly sourced silicon

@main_horse I ended up doing an italicized disclosure, probably good enough for now 😂
