Pinned Tweet

Ihtesham Ali

2.3K posts

@ihtesham2005

investor, writer, educator, and a dragon ball fan 🐉

🇵🇰🇨🇳🇺🇸 Joined October 2025

223 Following · 12.7K Followers

My grandfather built a $12M business from nothing.

Never went to college. Never read a business book.

But every major decision he made was right.

I asked him his secret at his 80th birthday dinner.

He put down his fork and said something I've never forgotten.

"Before every big move, I asked myself one question. Would I be okay with this being the last thing I ever did?"

I drove home that night and turned his entire decision filter into a Claude prompt.

"I need to make this decision: [decision]. Evaluate it through the lens of an 80-year-old version of me looking back. First, identify the values I'm actually living by based on this choice versus the values I claim to have. Second, map the realistic regret probability of each path both action and inaction. Third, find the fear I'm disguising as logic. Fourth, tell me what someone who genuinely loved me would say I should do. Be honest. Be direct. Don't comfort me."

I ran a business pivot through it last year.

Claude told me I was disguising fear of failure as financial caution.

It was right.

I made the pivot.

Best decision of my career.

My grandfather never had AI.

He had 80 years of hard lessons instead.

You just got the shortcut.


A student asked an MIT professor to explain Game Theory without jargon.

He drew two stick figures on the board.

That was the entire lecture.

"These two people are you and everyone you've ever negotiated with," he said. "Every decision one makes changes the best decision for the other. That's the whole thing. That's all of Game Theory."

The student laughed.

The professor didn't.

"You think I'm simplifying. I'm not. Every war in history. Every corporate merger. Every raise you didn't get. Every co-founder relationship that exploded. All of it traces back to two stick figures making decisions that affect each other."

Then he said the thing nobody writes down.

"The reason most people lose negotiations isn't that they're less intelligent. It's that they're optimizing for the wrong outcome. They want to win the moment. The other person is playing for something else entirely."

He paused.

"Figure out what they're actually optimizing for and you will never lose a negotiation again."

I've used that single framework in every business conversation since.

The textbook version of Game Theory takes a semester.

That professor taught the only part that matters in 4 minutes.

Some MIT lectures should cost $100,000.

That one was free.
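The professor's core claim, that each person's best decision depends on what the other person does, can be made concrete with a tiny two-player payoff table. This is only an illustrative sketch: the move names, the payoff numbers, and the `best_response` helper are made up for the example and do not come from the lecture.

```python
# Payoffs are (you, other person). All numbers are invented for illustration.
PAYOFFS = {
    ("hold firm", "hold firm"): (0, 0),
    ("hold firm", "concede"): (3, 1),
    ("concede", "hold firm"): (1, 3),
    ("concede", "concede"): (2, 2),
}
MOVES = ("hold firm", "concede")


def best_response(other_move):
    """Return your highest-payoff move, given the other person's move."""
    return max(MOVES, key=lambda move: PAYOFFS[(move, other_move)][0])


# Your best move flips depending on what the other person chooses.
for other_move in MOVES:
    print(f"If they {other_move}, your best move is to {best_response(other_move)}")
```

With these payoffs there is no single best move in isolation. The answer changes as soon as the other stick figure changes theirs.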


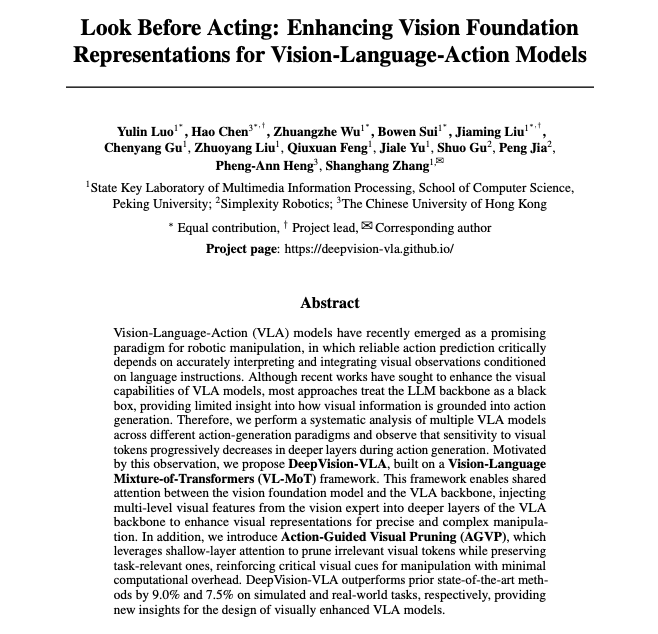

🚨 Researchers just exposed a silent flaw destroying every robot AI model.

Current VLA models go blind in their own deep layers.

They can see perfectly at the start. By the end? They've forgotten what they're even looking at.

Here's the wild part they found:

Shallow layers of robot AI lock onto the exact object the robot needs to grab.

Deep layers? Completely lose track of it. Start paying attention to random background noise instead.

So the robot "knows" what to do but can't actually see what it's doing anymore.

The fix they built is called DeepVision-VLA.

→ Added a dedicated Vision Expert (DINOv3) that keeps watching the object the whole time

→ Injected high-resolution visual signals into the deep layers where vision was dying

→ Built "Action-Guided Pruning" that cuts all background noise before it reaches those layers

Results:

+9% better than every existing robot AI in simulation

+7.5% in real-world tasks

100% success rate pouring liquid into a bottle. Every single time.

The whole problem was that nobody ever looked inside the black box before.

They just assumed vision worked. It didn't.


🚨 BREAKING: Researchers just built a robot that trains itself.

It's called RoboClaw. And it changes how we think about robot training forever.

Here's what's actually happening inside it:

Every time you want to teach a robot a new task, someone has to sit there. Collect demonstrations. Reset the environment after every failure. Monitor everything. Fix mistakes by hand.

It's expensive. It doesn't scale. And the people collecting data aren't the same ones deploying the system, which creates gaps that break everything.

RoboClaw fixes this with one brutal insight:

If a robot can learn to DO a task, it can also learn to UNDO it.

They call this Entangled Action Pairs (EAP).

For every forward skill the robot learns (place the primer in the drawer), it also learns the reverse (take the primer back out).

These two behaviors form a self-resetting loop.

The robot does the task. Resets itself. Does it again. Collects data the whole time. No human needed.
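The do/undo loop can be sketched in a few lines. This is a toy illustration, not RoboClaw's code: `DrawerEnv`, `place_primer`, `remove_primer`, and `collect_episodes` are hypothetical names, and a single boolean stands in for a real robot scene.

```python
from dataclasses import dataclass, field


@dataclass
class DrawerEnv:
    """Toy stand-in for the scene: is the primer in the drawer?"""
    primer_in_drawer: bool = False
    log: list = field(default_factory=list)


def place_primer(env: DrawerEnv) -> bool:
    """Forward skill: put the primer in the drawer."""
    if env.primer_in_drawer:
        return False  # precondition not met, nothing to do
    env.primer_in_drawer = True
    env.log.append("place")
    return True


def remove_primer(env: DrawerEnv) -> bool:
    """Inverse skill: take the primer back out, restoring the start state."""
    if not env.primer_in_drawer:
        return False
    env.primer_in_drawer = False
    env.log.append("remove")
    return True


def collect_episodes(env: DrawerEnv, n_cycles: int) -> list:
    """Alternate forward and inverse skills, recording every attempt as data."""
    dataset = []
    for _ in range(n_cycles):
        for skill in (place_primer, remove_primer):
            success = skill(env)
            dataset.append((skill.__name__, success))
    return dataset


env = DrawerEnv()
data = collect_episodes(env, n_cycles=3)
print(len(data), "attempts recorded; primer in drawer:", env.primer_in_drawer)
```

Because the inverse skill returns the scene to its starting state, every cycle ends ready for the next one, which is what removes the human reset step in this simplified picture.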

The results are insane:

→ 8x less human intervention during training

→ 2.16x less total human time per dataset

→ 25% higher success rate on complex multi-step tasks vs baseline

But the deeper breakthrough isn't the loop.

It's that the SAME agent that trains the robot also deploys it.

Most robotic systems use completely separate pipelines for data collection, model training, and execution. The left hand doesn't know what the right hand is doing.

RoboClaw runs everything through a single VLM-driven controller. Same memory. Same reasoning. Same context.

When the robot fails during a real task, those failure trajectories get fed back into training. The robot learns from its own real-world mistakes.

It's not just automation. It's a closed loop that compounds over time.

They tested it on a vanity table organization task: pick up lotion, insert lipstick into a narrow slot, place primer in a drawer, wipe a surface with tissue.

Four tasks. Sequential. Each one dependent on the last.

RoboClaw handled all of it autonomously. When it failed, it diagnosed the failure type, triggered recovery behaviors, and kept going.

Only escalated to a human when it genuinely couldn't fix the situation itself.

This is what real long-horizon robotics looks like.


Ihtesham Ali retweeted

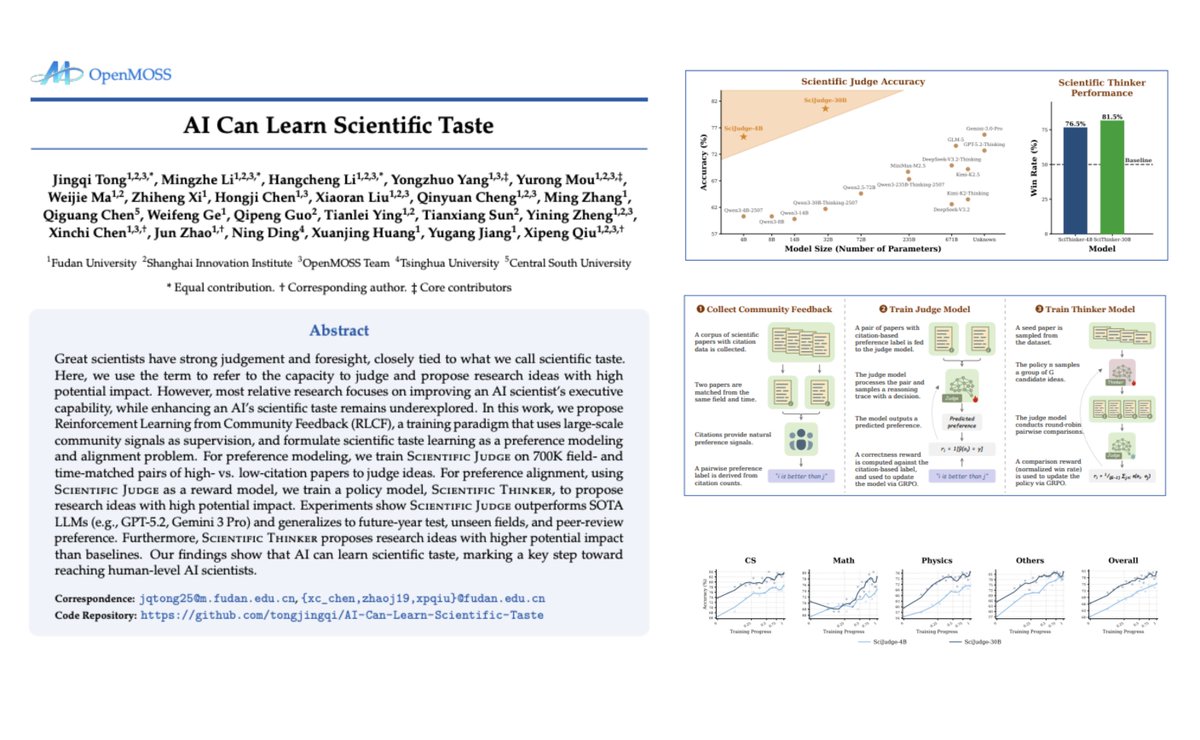

Holy shit... AI just learned scientific taste 🤯

For 400 years, one thing separated great scientists from average ones. Not intelligence. Not work ethic.

Taste.

The ability to look at 1,000 research directions and know which one is worth your life's work.

Fudan University just bottled that into a model.

They scraped 2.1 million arXiv papers and did something nobody had tried before. Instead of training AI to run experiments or search literature, they trained it to judge ideas. 700,000 matched paper pairs. Same field. Same year. Different citation counts.

One job: figure out which research the scientific community actually cared about.
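The matched-pair idea (same field, same year, different citation counts) can be sketched as below. This is a guess at the general shape, not the authors' pipeline: the post does not say how pairs were actually selected, and the record keys (`id`, `field`, `year`, `citations`), the `build_matched_pairs` helper, and the sample papers are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def build_matched_pairs(papers):
    """Pair papers from the same field and year that differ in citations.

    Returns (higher_cited_id, lower_cited_id) tuples, usable as
    preference labels for a pairwise judge.
    """
    groups = defaultdict(list)
    for paper in papers:
        groups[(paper["field"], paper["year"])].append(paper)

    pairs = []
    for group in groups.values():
        for a, b in combinations(group, 2):
            if a["citations"] == b["citations"]:
                continue  # a tie carries no preference signal
            winner, loser = (a, b) if a["citations"] > b["citations"] else (b, a)
            pairs.append((winner["id"], loser["id"]))
    return pairs


# Made-up records, for illustration only.
papers = [
    {"id": "p1", "field": "cs.LG", "year": 2021, "citations": 120},
    {"id": "p2", "field": "cs.LG", "year": 2021, "citations": 8},
    {"id": "p3", "field": "cs.LG", "year": 2022, "citations": 50},
    {"id": "p4", "field": "math.CO", "year": 2021, "citations": 8},
]
pairs = build_matched_pairs(papers)
print(pairs)
```

Only `p1` and `p2` share both field and year, so they form the single pair here. Matching on field and year is what keeps the comparison from rewarding older papers or bigger fields.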

They called it Scientific Judge. And the numbers destroyed every benchmark.

It beats GPT-5.2, Gemini 3 Pro, and GLM-5 at predicting scientific impact. It generalizes to papers published after its training data ends. It works on fields it was never trained on. It even transfers to ICLR peer review scores without ever seeing them.

But here's the part that broke my brain.

They used Scientific Judge as a reward model to train a second AI called Scientific Thinker. You give it a paper, it reads it, then proposes the next high-impact research direction. Not a summary. Not a literature review. An original idea. Win rate against baseline? 81.5%. Win rate against GPT-5.2 itself? 54.2%.

The AI is now proposing research ideas that frontier models judge as better than their own.

Scientific taste was always the last human advantage in research. The PhD took 5-7 years because that's how long it took to develop judgment — to know what matters before anyone else does. That just became a training objective. Not from human feedback. Not from expensive annotations. From citations. The raw signal of what the scientific community collectively decided was worth building on.

We're not talking about AI that executes science. We're talking about AI that decides what science is worth doing. That's a completely different thing.


Ihtesham Ali retweeted

🚨 BREAKING: A developer just built an App Store for OpenAI Codex.

It's called Awesome Codex Subagents and it has 136+ specialized AI agents organized across 10 categories.

Instead of one overloaded AI trying to do everything, you now deploy specialist agents for each task:

→ Code review agent with its own context window

→ Security auditing agent that only hunts vulnerabilities

→ Docs writer that never pollutes your main thread

→ Debugging agent that traces root causes from scratch

→ Infrastructure agent that speaks DevOps fluently

Here's the wild part: each subagent runs in complete isolation. Independent context. No cross-contamination. No "I forgot what we were doing" halfway through a refactor.

The old way was one AI assistant juggling 50 tasks and dropping half of them. This is a coordinated team.

Drop the .toml files into ~/.codex/agents/ and they're available across every project globally.
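The install step is a plain file copy. A minimal sketch, assuming the agent definitions have already been downloaded to a local folder: `install_agents` is a hypothetical helper, and only the `~/.codex/agents/` destination comes from the post.

```python
import shutil
from pathlib import Path


def install_agents(source_dir, target_dir=None):
    """Copy every .toml file in source_dir into target_dir.

    target_dir defaults to ~/.codex/agents, the location named in the post.
    Returns the sorted names of the copied files.
    """
    source = Path(source_dir)
    target = Path(target_dir) if target_dir else Path.home() / ".codex" / "agents"
    target.mkdir(parents=True, exist_ok=True)
    copied = []
    for toml_file in sorted(source.glob("*.toml")):
        shutil.copy2(toml_file, target / toml_file.name)
        copied.append(toml_file.name)
    return copied


# Example call (the source path is a placeholder):
# install_agents("path/to/downloaded/agents")
```

Files with the same name in the destination are overwritten, so it may be worth backing up any agents you have customized before running something like this.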

This is what serious Codex workflows look like.

100% open source.


Ihtesham Ali retweeted

RIP static AI agents.

Chinese developers just built MetaClaw, an OpenClaw wrapper that turns every live conversation into continuous training data.

→ Scores each turn automatically

→ Injects relevant skills at every interaction

→ Auto-generates new skills when the agent struggles

→ Supports RL (GRPO) and on-policy distillation

→ Fully async: serving and training run in parallel

No offline retraining. No GPU cluster. Just talk and let it learn.

100% open source.


@nikitabier @0x45o but it also cuts time spent (by a lot)

but yeah it also makes the user happy


@0x45o My job is to increase unregretted time spent. Every tap, every word must be intentional and valuable to the user.

If you get sucked into bad content, that’s time taken away from a conversation you could be having elsewhere.


wasn't the point that they are long reads and therefore force people to spend more time on the platform?

Nikita Bier @nikitabier

We’re rolling out summaries for Articles now. Just tap the Summarize button if you want to know if it’s worth your time to read it (or if your attention span is 12 seconds).


@paulg my nephew is a redhead (because of his father, who is a redhead too) and another one is normal.

it happens.


@danushman this is better than secret ads and bullshit content

i am sharing what worked for me. i added a mode (a system prompt to tune LLM behaviour) and made it useful.


@ihtesham2005 You’re not teaching people AI with clickbait headlines like that

There is no “secret mode” and your post is thus just a prompt

Be better


Ihtesham Ali retweeted

A journalist once asked Warren Buffett how he avoids bad investments.

His answer never made the headline. But someone in the room wrote it down.

He said he imagines a panel of his smartest critics sitting across from him before he writes a check. He forces himself to argue their side until he either changes his mind or finds the hole in their argument.

I read that in a random forum post at 2am six months ago.

Spent the next hour building it inside ChatGPT.

The prompt:

"You are a panel of 5 of my harshest critics debating why my idea will fail: [idea]. Critic 1 attacks the market timing. Critic 2 destroys the unit economics. Critic 3 questions my ability to execute. Critic 4 argues a bigger player will copy this in 90 days. Critic 5 says I'm solving a problem nobody actually has. Make them brutal. Make them specific."

I ran my SaaS idea through it.

Critic 4 described exactly what a competitor launched three months later.

Critic 5 made me realize my target customer had the problem but not the budget to solve it.

Pivoted before I wrote a single line of code.

Buffett has Charlie Munger for this.

You have one prompt.
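If you want to reuse the critic-panel prompt across ideas, it can be assembled from a list of critic roles. A small sketch: the prompt wording is taken from the post, while `CRITICS`, `build_panel_prompt`, and the sample idea are hypothetical names and data added for illustration.

```python
# Critic roles, in the order used by the prompt in the post.
CRITICS = [
    "attacks the market timing",
    "destroys the unit economics",
    "questions my ability to execute",
    "argues a bigger player will copy this in 90 days",
    "says I'm solving a problem nobody actually has",
]


def build_panel_prompt(idea, critics=CRITICS):
    """Assemble the critic-panel prompt for a given idea."""
    if not idea.strip():
        raise ValueError("idea must not be empty")
    roles = " ".join(
        f"Critic {i} {role}." for i, role in enumerate(critics, start=1)
    )
    return (
        f"You are a panel of {len(critics)} of my harshest critics "
        f"debating why my idea will fail: {idea}. {roles} "
        "Make them brutal. Make them specific."
    )


# The idea below is a made-up placeholder.
prompt = build_panel_prompt("a scheduling tool for dog groomers")
print(prompt)
```

Keeping the roles in a list makes it easy to swap a critic in or out without retyping the whole prompt.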
