Jacky Kwok

@jackyk02

Stanford CS PhD | Berkeley EECS

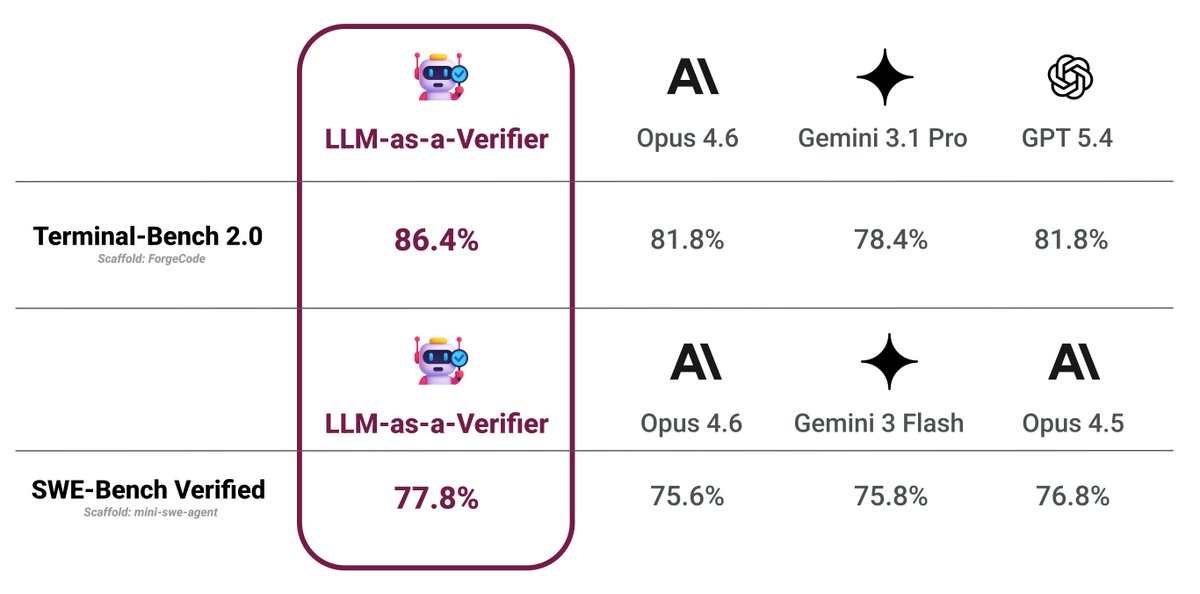

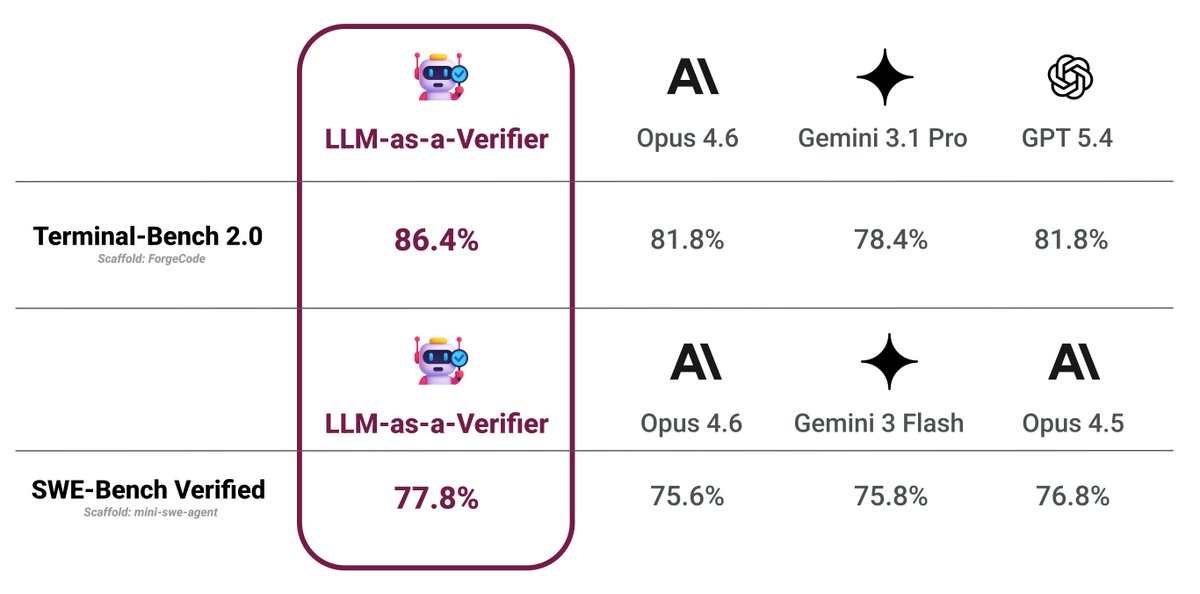

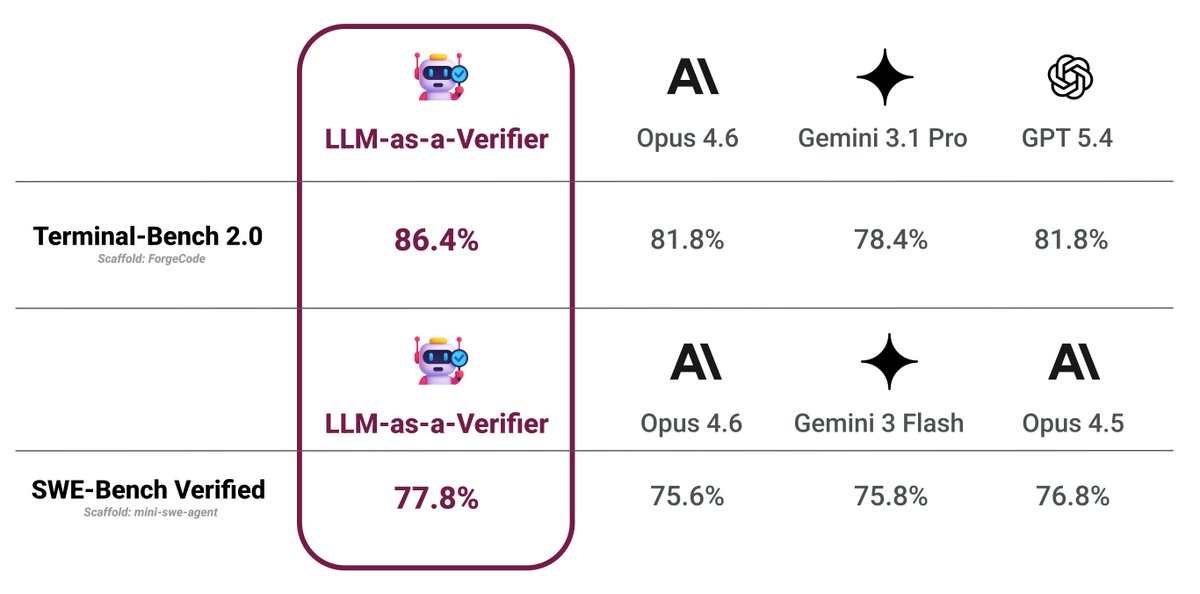

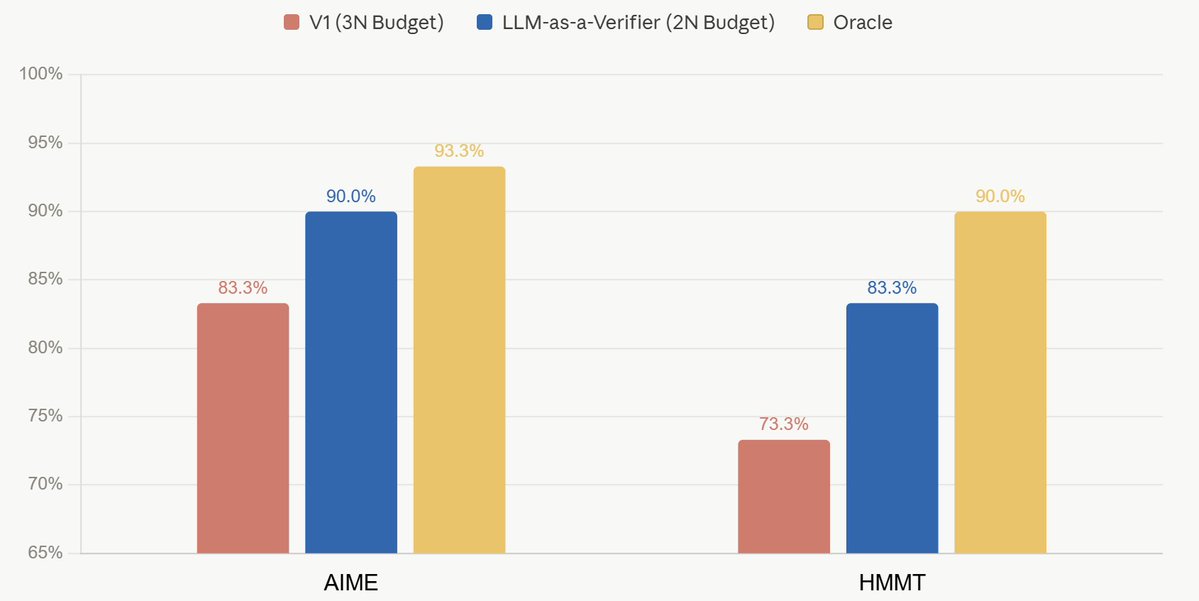

Can LLMs Self-Verify? Much better than you'd expect.

LLMs are increasingly used as parallel reasoners, sampling many solutions at once. Choosing the right answer is the real bottleneck. We show that pairwise self-verification is a powerful primitive.

Introducing V1, a framework that unifies generation and self-verification:

💡 Pairwise self-verification beats pointwise scoring, improving test-time scaling

💡 V1-Infer: Efficient tournament-style ranking that improves self-verification

💡 V1-PairRL: RL training where generation and verification co-evolve for developing better self-verifiers

🧵👇
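To make "tournament-style ranking" concrete, here is a minimal sketch of a single-elimination tournament over sampled solutions. It is an illustration only, not the V1-Infer algorithm, which the post does not spell out. The names `knockout_select` and `prefer_first` are hypothetical; in practice `prefer_first` would wrap a call asking the model which of two of its own solutions is better.

```python
import random
from typing import Callable, List, TypeVar

T = TypeVar("T")


def knockout_select(
    candidates: List[T],
    prefer_first: Callable[[T, T], bool],
    seed: int = 0,
) -> T:
    """Pick one candidate using pairwise comparisons only.

    `prefer_first(a, b)` is a hypothetical pairwise verifier: it returns
    True when `a` is judged better than `b`. Selecting from n candidates
    costs n - 1 comparisons.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    rng = random.Random(seed)
    pool = list(candidates)
    rng.shuffle(pool)  # randomize the bracket so input order does not matter
    while len(pool) > 1:
        next_round = []
        # Pair up neighbours; the winner of each pair advances.
        for i in range(0, len(pool) - 1, 2):
            a, b = pool[i], pool[i + 1]
            next_round.append(a if prefer_first(a, b) else b)
        if len(pool) % 2 == 1:
            next_round.append(pool[-1])  # odd one out gets a bye
        pool = next_round
    return pool[0]


# Toy usage: candidates are numbers and "better" means larger.
comparisons = []


def toy_prefer_first(a: int, b: int) -> bool:
    comparisons.append((a, b))
    return a >= b


winner = knockout_select([3, 9, 4, 7, 1], toy_prefer_first)
```

With a consistent comparator the best candidate always wins the bracket. A noisy verifier can knock it out early, which is one reason a real system might compare some pairs more than once.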

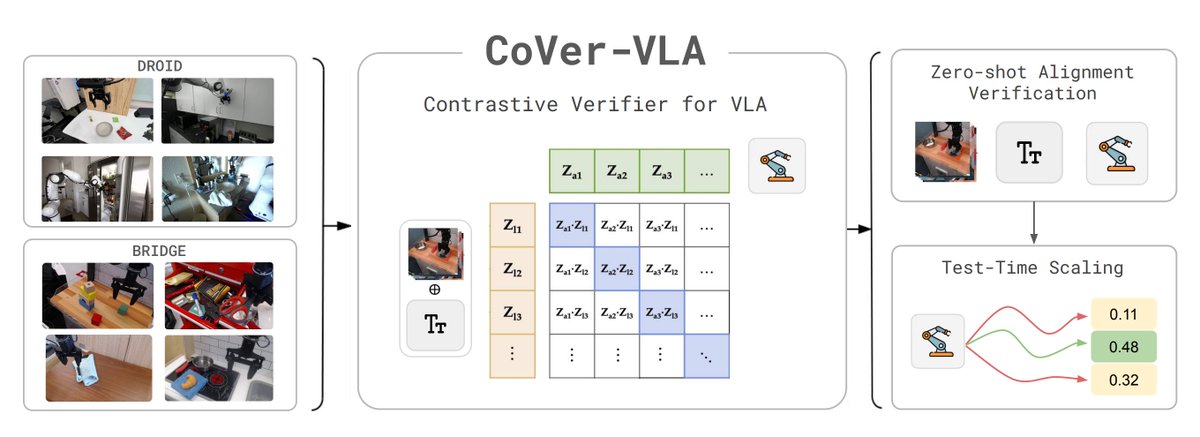

Introducing CoVer-VLA 💫: a contrastive verifier + hierarchical test-time scaling framework for VLAs!

- Lightweight 1B verifier 🧠
- Outperforms π₀ & π₀.₅ 🦾
- Trained on Bridge & DROID 🤖

Turns out scaling verification > scaling policy learning for VLA alignment! 🧵👇

🌐 Website: cover-vla.github.io

📄 Paper: arxiv.org/abs/2602.12281

🤗 Models: huggingface.co/cover-vla

💻 Code: github.com/cover-vla/cove…
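As a rough illustration of verifier-guided test-time scaling, the sketch below scores several candidate actions against an instruction and keeps the best one. It assumes the verifier embeds instructions and actions in a shared space and scores them by cosine similarity. That scoring rule, the embeddings, and the names `cosine` and `select_action` are assumptions for illustration, not the CoVer-VLA implementation, and the hierarchical part of the framework is not modeled here.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def select_action(
    instruction_emb: Sequence[float],
    candidate_embs: List[Sequence[float]],
) -> int:
    """Return the index of the candidate the (hypothetical) verifier scores highest."""
    if not candidate_embs:
        raise ValueError("need at least one candidate action")
    scores = [cosine(instruction_emb, c) for c in candidate_embs]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy usage with made-up 2-D embeddings for three sampled actions.
instruction = [1.0, 0.0]
candidates = [[0.0, 1.0], [1.0, 0.1], [-1.0, 0.0]]
best = select_action(instruction, candidates)
```

Sampling more candidates spends compute on the verifier at inference time and leaves the policy unchanged.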

🧵(6) DROID Eval: CoVer-VLA achieves 14% gains in task progress and 9% in success rate on the challenging red-team PolaRiS benchmark. In the pan cleaning task, π₀.₅ shows incorrect intent, grasping the pan handle. In contrast, CoVer-VLA correctly uses the sponge to scrub the pan.