Tarjei Mandt

MiniMax-M2.5 is now open source. Trained with reinforcement learning across hundreds of thousands of complex real-world environments, it delivers SOTA performance in coding, agentic tool use, search, and office workflows.
- Hugging Face: huggingface.co/MiniMaxAI/Mini…
- GitHub: github.com/MiniMax-AI/Min…
- Coding Plan: platform.minimax.io/subscribe/codi…

Intelligence with Everyone

Introducing GLM-5: From Vibe Coding to Agentic Engineering

GLM-5 is built for complex systems engineering and long-horizon agentic tasks. Compared to GLM-4.5, it scales from 355B params (32B active) to 744B (40B active), with pre-training data growing from 23T to 28.5T tokens.
- Try it now: chat.z.ai
- Weights: huggingface.co/zai-org/GLM-5
- Tech Blog: z.ai/blog/glm-5
- OpenRouter (previously Pony Alpha): openrouter.ai/z-ai/glm-5
- Rolling out from Coding Plan Max users: z.ai/subscribe

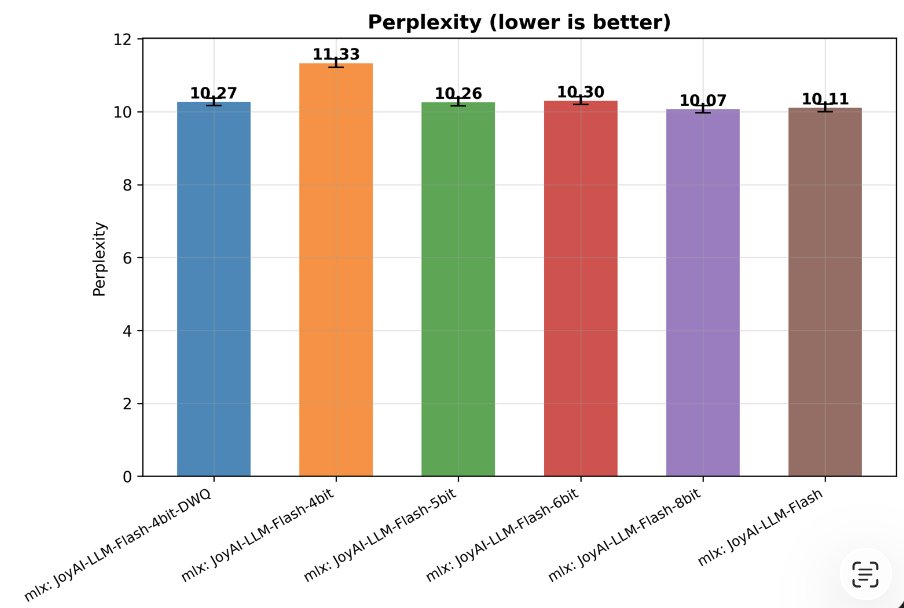

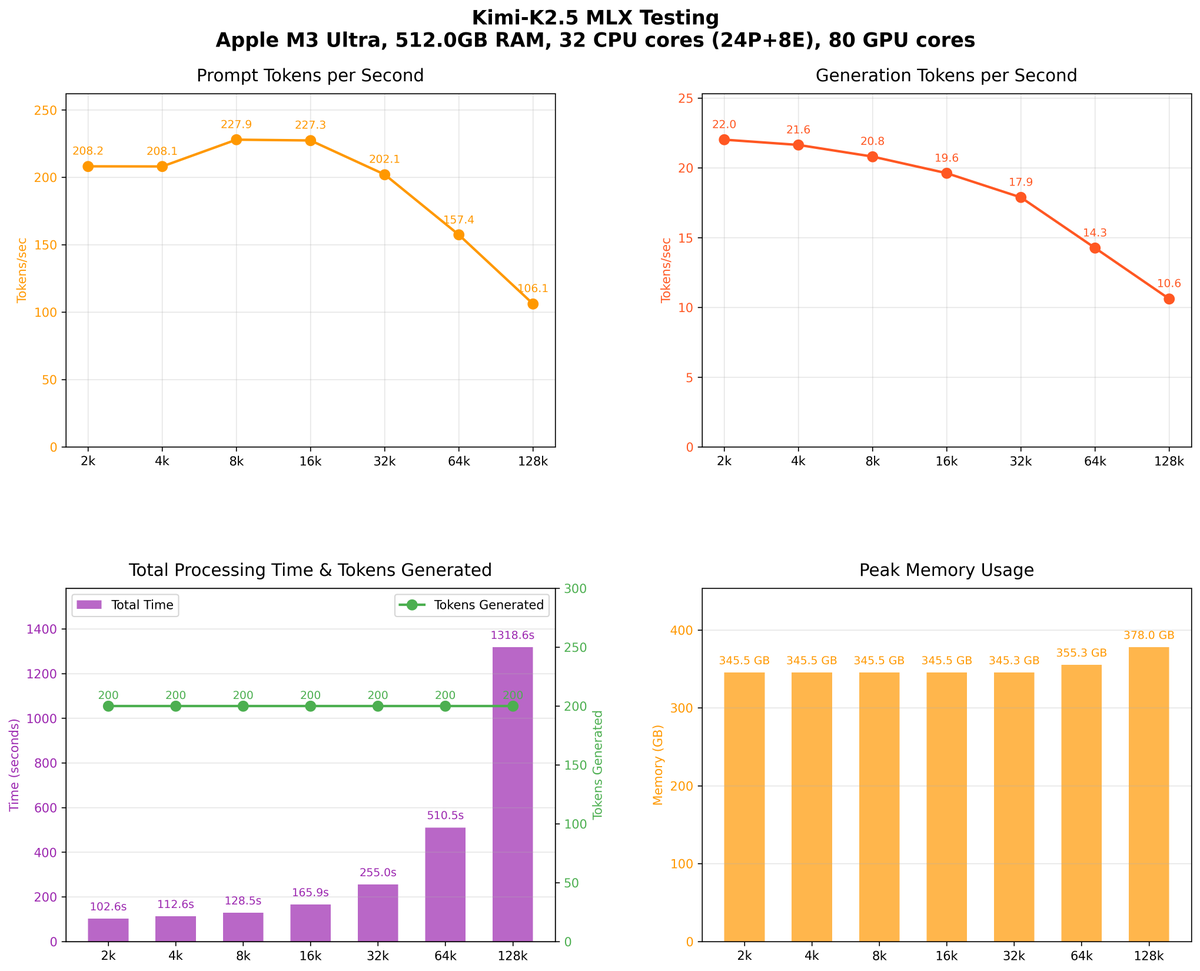
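
To put the GLM-5 scaling figures quoted above in proportion, here is a minimal sketch that computes the growth ratios and the share of parameters that are active. It uses only the numbers stated in the post. The helper names (`active_fraction`, `growth`) are hypothetical, and reading "active" as parameters used per token is an assumption, not something the post spells out.

```python
# Figures as quoted in the GLM-5 announcement:
# parameters in billions, pre-training tokens in trillions.
models = {
    "GLM-4.5": {"total_b": 355.0, "active_b": 32.0, "tokens_t": 23.0},
    "GLM-5": {"total_b": 744.0, "active_b": 40.0, "tokens_t": 28.5},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of total parameters that are active (hypothetical helper)."""
    return active_b / total_b


def growth(old: float, new: float) -> float:
    """Multiplicative growth from old to new (hypothetical helper)."""
    return new / old


for name, m in models.items():
    share = active_fraction(m["total_b"], m["active_b"])
    print(f"{name}: {share:.1%} of parameters active")

old, new = models["GLM-4.5"], models["GLM-5"]
print(f"total params:  x{growth(old['total_b'], new['total_b']):.2f}")
print(f"active params: x{growth(old['active_b'], new['active_b']):.2f}")
print(f"train tokens:  x{growth(old['tokens_t'], new['tokens_t']):.2f}")
```

Under these numbers, total parameters roughly double while active parameters and training tokens each grow by about a quarter, so the active share falls.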

Latest mlx-lm is out:
- New models: Kimi K2.5, Step3.5 flash, LongCat Flash lite thanks to @kernelpool
- Support for distributed inference with mlx_lm.server thanks to @angeloskath
- Much faster and more memory efficient DeepSeek v3 (and other MLA-based models)