Simen Eide

2.8K posts

@simeneide

modeling the world. Personalising with and without priors, creator of medium sized filter bubbles. https://t.co/ooTwrhjjJh, https://t.co/t4GIcHJsaH, https://t.co/g4xZtlKeye and uni Oslo.

Sogndal, Norge · Joined November 2008

691 Following · 681 Followers

@rohanpaul_ai Reading the model card, but can't see if it has keyboard and mouse clicks? Goal states? etc.

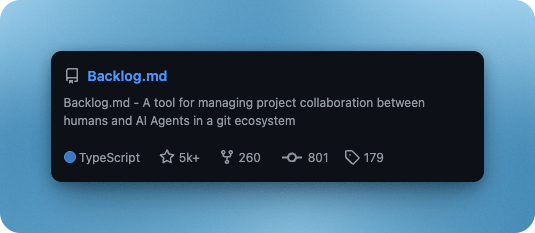

@mrlesk Idk why not everyone is using that. It's a fantastic tool, and it's much more fun to do backlog tasks now that I have an agent that can keep the board clean!

Simen Eide reposted

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.

Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.

As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now.

It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

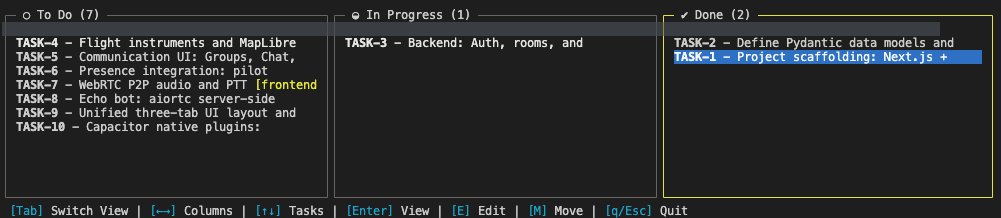

I must say, using the backlog.md feature from @mrlesk is really a gamechanger when developing. While one idea is being implemented I can plan the next one, and when something breaks the agent can look at the implementation docs and see what we did.

Simen Eide @simeneide

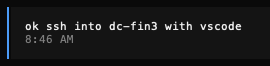

It's crazy what agentic coding does to people... I've even started to value kanban boards! @opencode @mrlesk

@skalskip92 I'm working on small items that are hard to see in a single image, so multiple images are needed to detect the motion. That would need a different approach, but I'll see if my architectural mod works for your model too.

Simen Eide reposted

we just released RF-DETR segmentation

SOTA real-time segmentation + Apache 2.0 license

six model sizes with performance spanning from 40.3 mAP at 3.4 ms/image (Nano) to 49.9 mAP at 21.8 ms/image (2XLarge)

link: github.com/roboflow/rf-de…

@skalskip92 End2end style or as separate logic on top?

Cool anyways, will give it a spin!

@simeneide naaaah. there is no temporal component. but we plan to release trackers this year that would have that.

Simen Eide reposted

Collecting a high quality dataset with 4M unique phrases and 52M corresponding object masks helped SAM 3 achieve 2x the performance of baseline models. Kate, a researcher on SAM 3, explains how the data engine made this leap possible.

🔗 Read the SAM 3 research paper: go.meta.me/6411f7

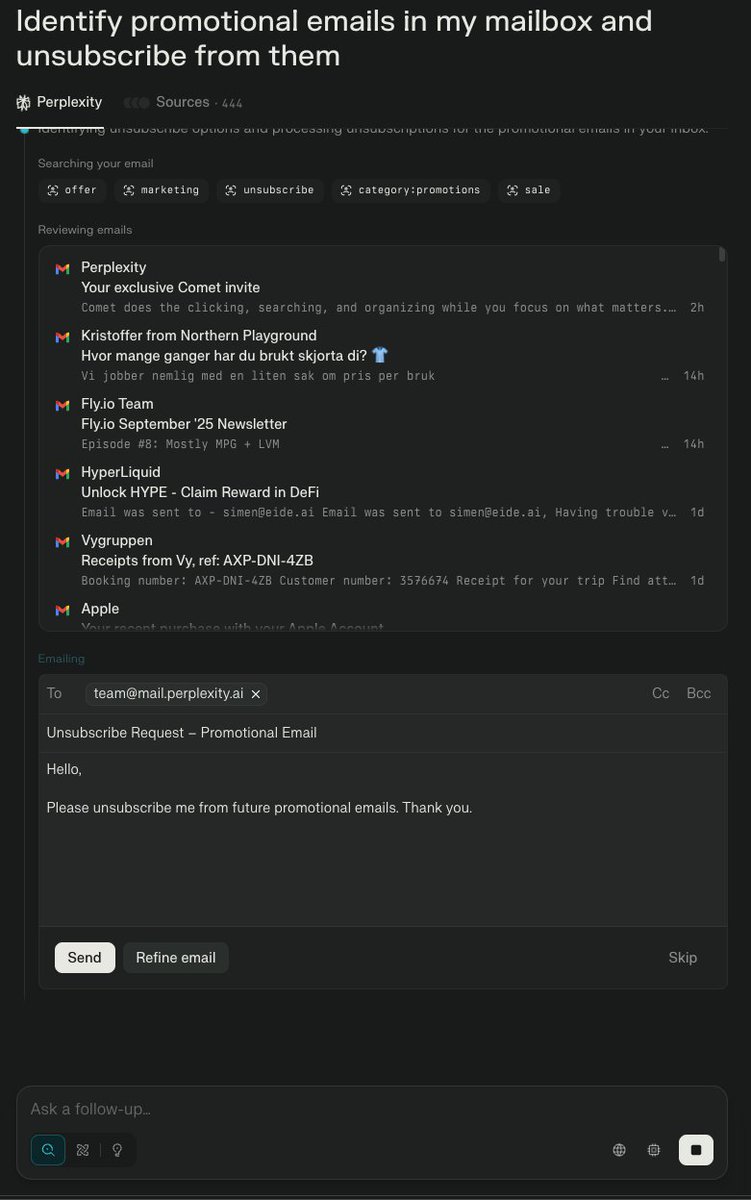

Testing @perplexity_ai's new Comet browser, and clicking the first "agentic" suggestion. You can't make this up.

@jobergum I could grudgingly say that these titles are optimized for both click and reading time, but sure: yes, the task is quite difficult ;)

Need to evaluate your LLM on text generation? Schibsted text tasks to the rescue! eide.ai/posts/2024-11-…
