OmnAI Lab

@OmnAI_Lab

OmnAI Lab, @SapienzaRoma, Computer Science Department (DI). PI: @_iAc

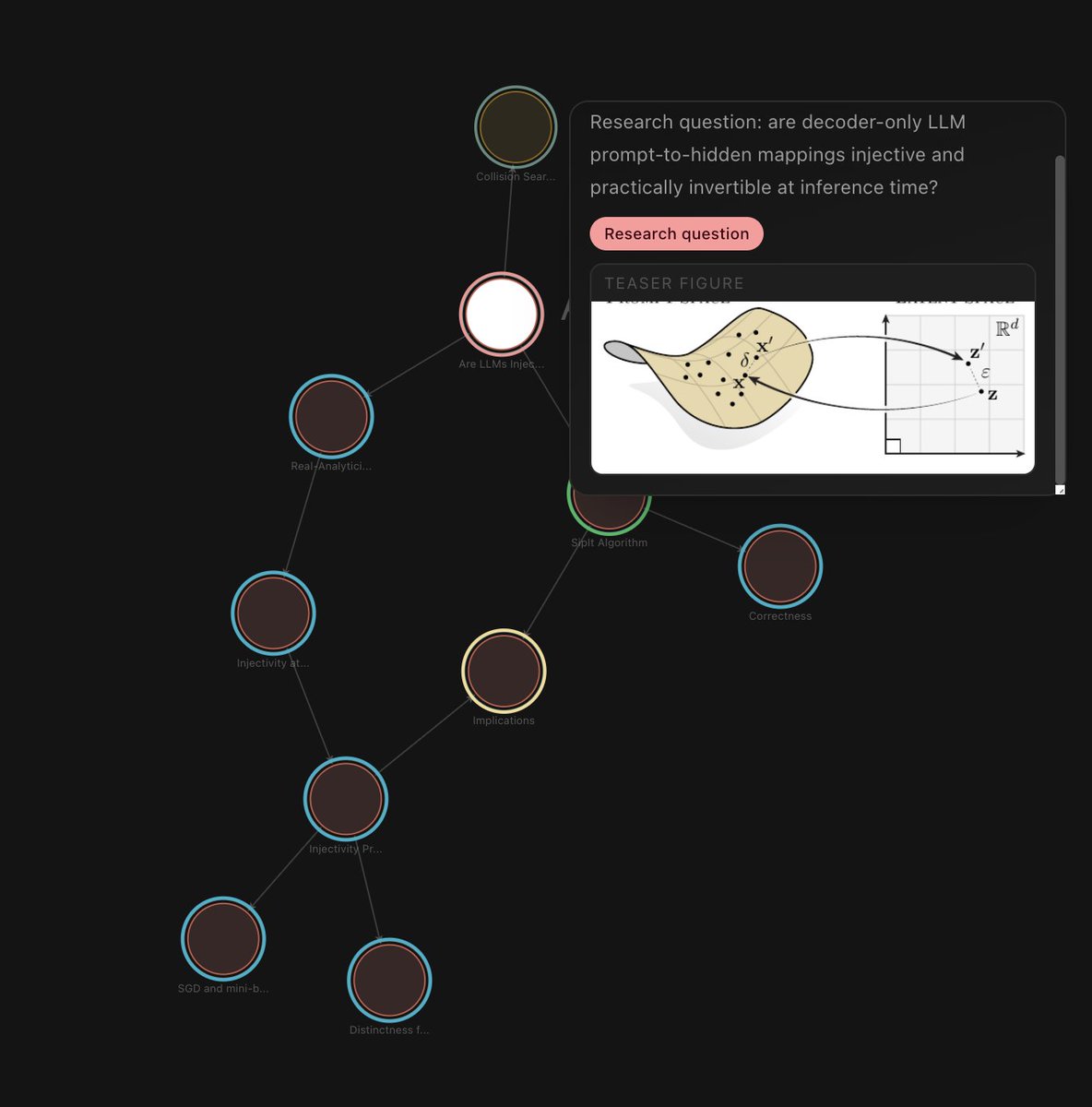

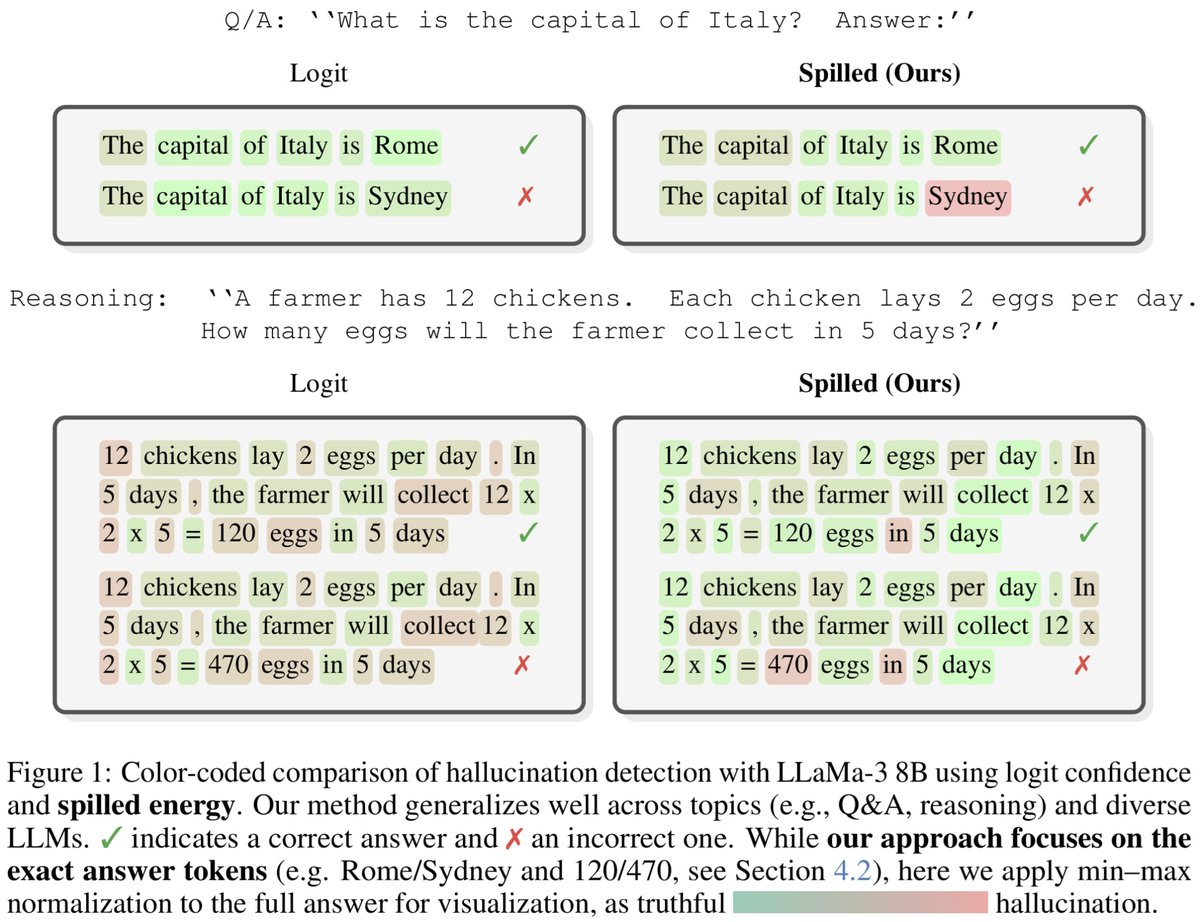

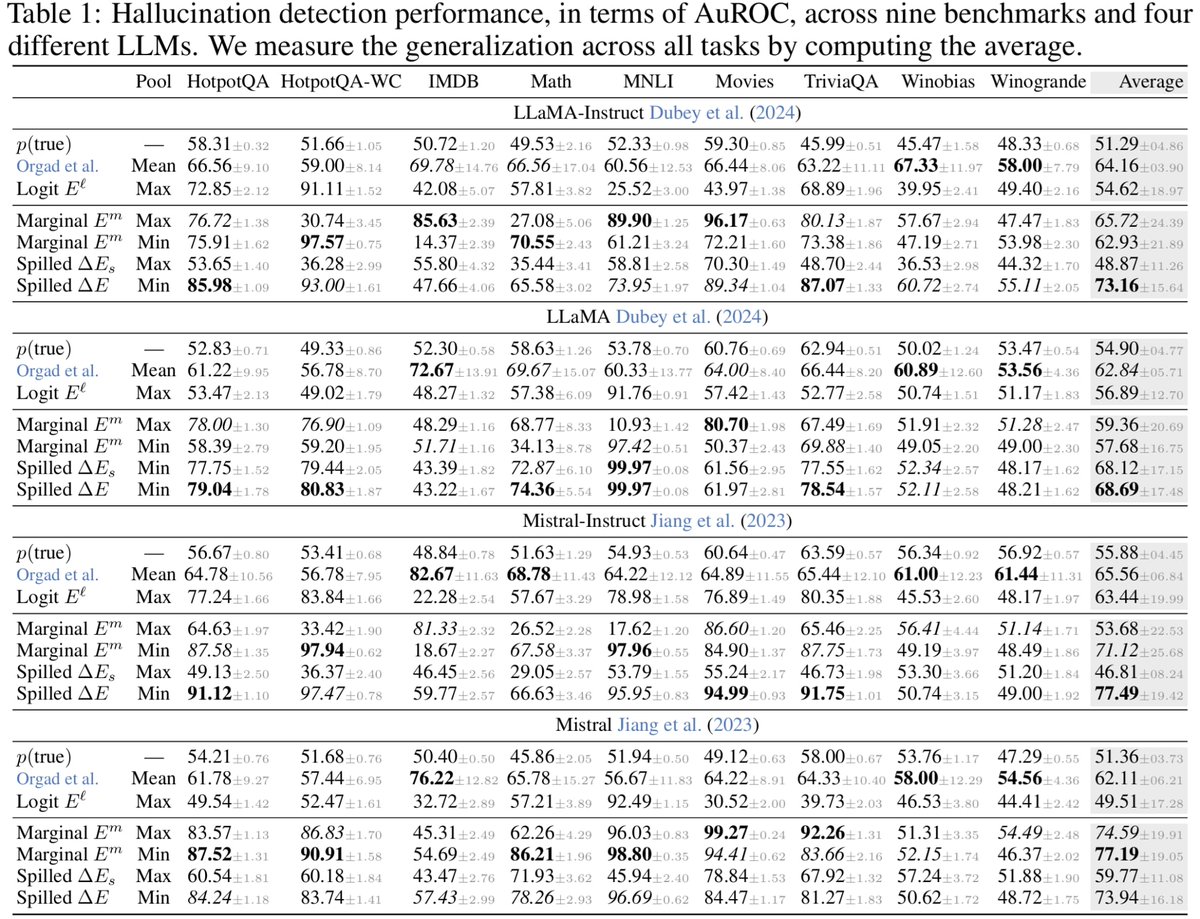

LLMs are injective and invertible. In our new paper, we show that different prompts always map to different embeddings, and this property can be used to recover input tokens from individual embeddings in latent space. (1/6)

New paper on a long-shot I've been obsessed with for a year: How much are AI reasoning gains confounded by expanding the training corpus 10000x? How much LLM performance is down to "local" generalisation (pattern-matching to hard-to-detect semantically equivalent training data)?

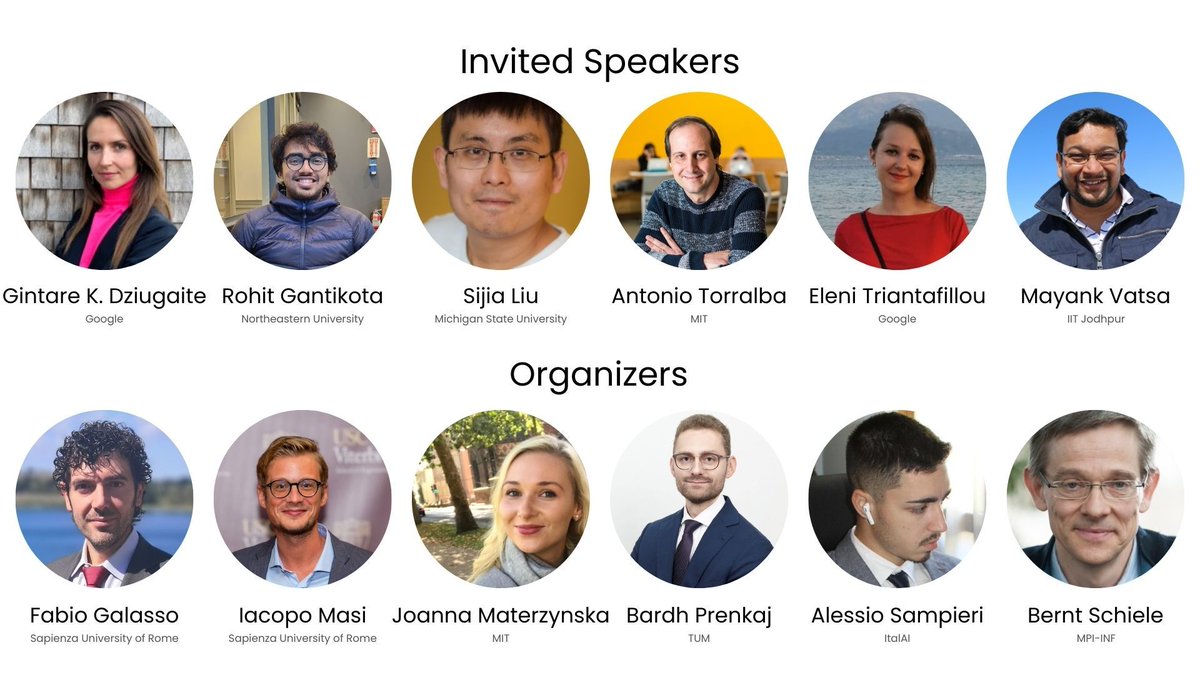

Can AI forget? 🧠❌ Join MUV at @CVPR 26 in Denver! 🏔️ Speakers from @GoogleDeepMind, @MIT_CSAIL & more. 📝 Submit by March 15! Organizers: @SapienzaRoma, @MIT, @TU_Muenchen, @_italai and MPI. Details: …chine-unlearning-for-vision.github.io #CVPR2026 #AI #ComputerVision

Assuming AGI becomes widely available, what is the future of science? We are launching the P-AGI workshop at @iclr_conf to define the post-AGI research agenda @DonatoCrisosto1 @teelinsan @valentina__py @pratyusha_PS @ZorahLaehner @EmanueleRodola