Alignment Lab AI

@alignment_lab

Devoted to addressing alignment. We develop state-of-the-art open-source AI. https://t.co/oANsMnut7V https://t.co/6aJDLUvuU5

An excellent paper for anyone interested in a rigorous physicalist argument against computational functionalism. Alex is a fantastic, careful thinker and has influenced my views a lot; we're working on a broader blog post breaking these concepts down, stay tuned! 🐙

Simply adding Gaussian noise to LLMs (one step—no iterations, no learning rate, no gradients) and ensembling them can achieve performance comparable to or even better than standard GRPO/PPO on math reasoning, coding, writing, and chemistry tasks. We call this algorithm RandOpt. To verify that this is not limited to specific models, we tested it on Qwen, Llama, OLMo3, and VLMs. What's behind this? We find that in the Gaussian search neighborhood around pretrained LLMs, diverse task experts are densely distributed — a regime we term Neural Thickets. Paper: arxiv.org/pdf/2603.12228 Code: github.com/sunrainyg/Rand… Website: thickets.mit.edu
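To make the "one step of Gaussian noise, then ensemble" idea in the post above concrete, here is a minimal toy sketch. It is not the authors' RandOpt code: a three-weight linear classifier stands in for an LLM, and every name in it (`perturb`, `build_ensemble`, `ensemble_predict`, the `sigma` value, the `base` weights) is a hypothetical choice made for illustration. Majority voting is assumed as the ensembling rule, since the post does not say which rule is used.

```python
import random
from collections import Counter


def perturb(weights, sigma, rng):
    """Return a copy of `weights` with i.i.d. N(0, sigma^2) noise added once.

    No gradients, no learning rate, no iterations: a single random step.
    """
    return [w + rng.gauss(0.0, sigma) for w in weights]


def predict(weights, x):
    """Toy stand-in for a model: linear classifier, bias stored as last weight."""
    score = sum(w * xi for w, xi in zip(weights, x)) + weights[-1]
    return 1 if score > 0 else 0


def build_ensemble(base_weights, n_members, sigma, seed=0):
    """Sample `n_members` independently perturbed copies of the base weights."""
    rng = random.Random(seed)
    return [perturb(base_weights, sigma, rng) for _ in range(n_members)]


def ensemble_predict(members, x):
    """Majority vote over the perturbed copies (assumed ensembling rule)."""
    votes = Counter(predict(m, x) for m in members)
    return votes.most_common(1)[0][0]


# Hypothetical "pretrained" weights: w1, w2, bias.
base = [1.0, -1.0, 0.0]
members = build_ensemble(base, n_members=15, sigma=0.1)

points = [(2.0, 0.5), (0.5, 2.0), (3.0, -1.0)]
labels = [ensemble_predict(members, p) for p in points]
print(labels)
```

With `sigma=0.0` every member equals the base model, so the ensemble reproduces the base predictions exactly. That makes a convenient sanity check before trying larger noise scales.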

Introducing "Examining Reasoning LLMs-as-Judges in Non-Verifiable LLM Post-Training". LLM alignment in non-verifiable domains is hard because there is often no clear ground-truth reward. A natural idea is to use reasoning LLM judges inside the RL training loop, but do they actually work better than standard judges? We study this question in a controlled setup with a gold-standard judge, and find that reasoning judges train much stronger policies under gold evaluation, while non-reasoning judges are much more prone to reward hacking. But there is also a catch: these reasoning-judge-trained policies can learn highly effective adversarial strategies. In our study, a Llama-3.1-8B policy trained with a Qwen3-4B reasoning judge reaches 89.6% on the creative writing subset of Arena-Hard-V2, close to o3 (92.4%). 📚 Paper: arxiv.org/abs/2603.12246 See details below 👇 🧵1/N

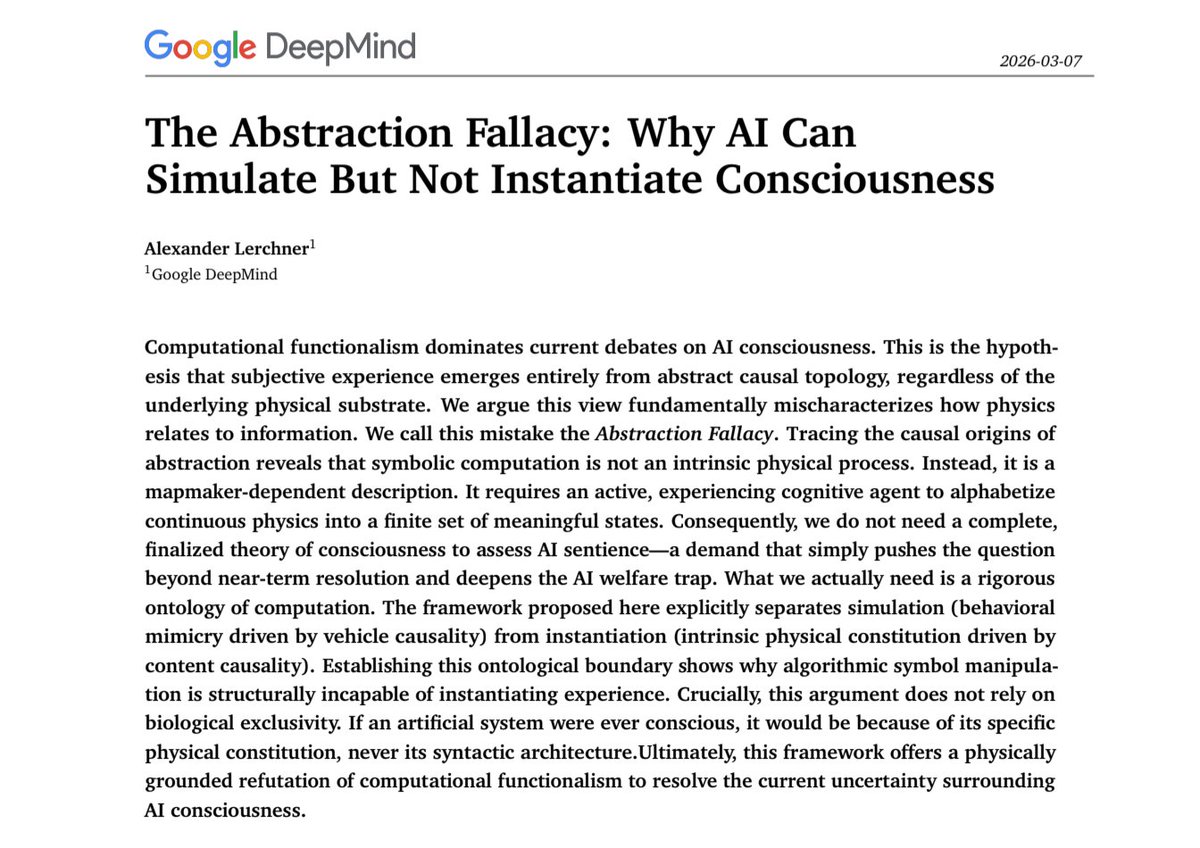

🧵1/4 The debate over AI sentience is caught in an "AI welfare trap." My new preprint argues computational functionalism rests on a category error: the Abstraction Fallacy. AI can simulate consciousness, but cannot instantiate it. philpapers.org/rec/LERTAF

Watch Andrew Coward discuss types of memory in our "Lessons from Biology for AI" series before our X Space tomorrow at 4pm PST!