Behsaad Ramez

@behsaad

Game Dev, Freelancer and Remote Worker since 2015

LAUNCH ANNOUNCEMENT! Regency Solitaire II is now out on Steam and @itchio Steam: store.steampowered.com/app/2137470/Re… (please leave a review) Itch: greyaliengames.itch.io/regency-solita… (we make more $ if you buy it here) Enjoy and please RT! Thanks :-)

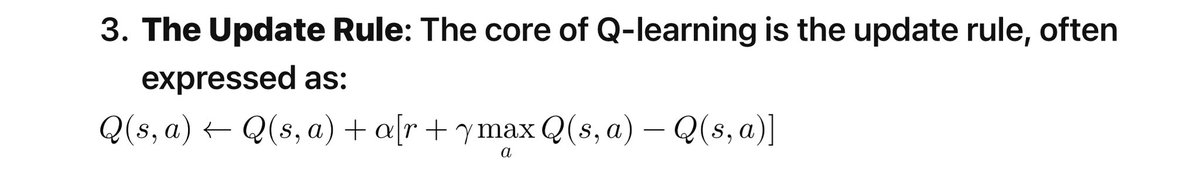

What is the RLHF that OpenAI’s secret Q* uses? Let’s define the term.

RLHF stands for "Reinforcement Learning from Human Feedback." It is a machine learning technique in which a model learns from feedback given by humans rather than relying solely on predefined datasets. This lets the model adapt to complex, nuanced tasks that are hard to capture in traditional training data.

In RLHF, the model first learns from a standard dataset, and its performance is then improved iteratively based on human feedback. The feedback can take various forms, such as corrections, rankings of different outputs, or direct instructions. In the common setup, human rankings of outputs are used to train a reward model, and the AI is then fine-tuned with reinforcement learning to produce outputs that the reward model scores highly. This is how the feedback adjusts the model's parameters and improves its responses or actions.

This approach is particularly useful in domains where defining explicit rules or providing exhaustive examples is hard, such as natural language processing, complex decision-making, or creative work.

This is why Q* was trained on logic and ultimately became adept at simple arithmetic. It will get better over time, but this is not AGI.

The graphic below gives an overview and history of RLHF.
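To make the two RLHF stages concrete, here is a toy Python sketch of the common recipe: human rankings train a reward model, and the policy is then tuned to score well under that reward while a KL penalty keeps it close to a reference model. Everything here is a hypothetical simplification: the canned responses, the preference pairs, and the function names (`train_reward_model`, `rl_finetune`) are made up; the "reward model" is just one score per response fitted with a Bradley-Terry loss; and exact policy-gradient ascent stands in for PPO, which real systems typically use with sampled rollouts and neural networks. It says nothing about how Q* was actually trained.

```python
import math

# Hypothetical toy setup: the "model" can only choose among four canned
# answers to the prompt "What is 2 + 2?".
RESPONSES = ["2 + 2 = 5", "2 + 2 = 4", "I don't know", "4"]

# Made-up human rankings for illustration only: (a, b) means a labeler
# preferred RESPONSES[a] over RESPONSES[b].
PREFERENCES = [(1, 0), (3, 0), (1, 2), (3, 2), (1, 3), (2, 0)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_reward_model(prefs, n, steps=2000, lr=0.1, weight_decay=0.01):
    """Stage 1: fit one scalar reward per response with a Bradley-Terry loss,
    where P(a preferred over b) = sigmoid(r[a] - r[b])."""
    r = [0.0] * n
    for _ in range(steps):
        grad = [weight_decay * ri for ri in r]  # L2 penalty keeps rewards finite
        for a, b in prefs:
            p = sigmoid(r[a] - r[b])
            # Gradient of -log p: raise r[a] and lower r[b] by (1 - p)
            grad[a] -= 1.0 - p
            grad[b] += 1.0 - p
        r = [ri - lr * gi for ri, gi in zip(r, grad)]
    return r


def rl_finetune(rewards, steps=500, lr=0.5, beta=1.0):
    """Stage 2: maximize E[reward] - beta * KL(policy || reference) by exact
    policy-gradient ascent on the softmax logits (a stand-in for PPO)."""
    n = len(rewards)
    ref = [1.0 / n] * n  # the "pretrained" reference policy (uniform here)
    theta = [0.0] * n    # policy logits start at the reference
    for _ in range(steps):
        pi = softmax(theta)
        # KL-penalized reward for each response
        adv = [rewards[y] - beta * (math.log(pi[y]) - math.log(ref[y]))
               for y in range(n)]
        baseline = sum(p * a for p, a in zip(pi, adv))
        # For a softmax policy, dJ/dtheta_k = pi_k * (adv_k - baseline)
        theta = [t + lr * pi[k] * (adv[k] - baseline)
                 for k, t in enumerate(theta)]
    return softmax(theta)


rewards = train_reward_model(PREFERENCES, len(RESPONSES))
policy = rl_finetune(rewards)
for text, r, p in zip(RESPONSES, rewards, policy):
    print(f"{text!r:16} reward={r:+.2f} prob={p:.3f}")
```

With `beta = 1` and a uniform reference, the tuned policy should approach `softmax(rewards)`, the closed-form optimum of the KL-regularized objective (in general, the optimum is proportional to `ref * exp(reward / beta)`). Raising `beta` keeps the policy closer to the reference model.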