hellofahmid

1.9K posts

@hellofahmid

working on AI workforce. always building, ai & blockchain | poortraits#6916 | ex-OCM engineer

Toronto, Ontario · Joined March 2021

1.4K Following · 1.2K Followers

Pinned Tweet

🚀 Optifly 0.5 is live!

This is a big step forward for us.

With a major upgrade to our agentic backend, Optifly is now:

• Much faster at processing trip requests

• Smooth and responsive when iterating or making changes

• More cohesive and polished end-to-end

You can plan flights, hotels, and experiences in one flowing workspace—chat, tweak, explore, repeat.

Short Video below 👇

Try it at optifly.ai

@Marcus_J_W @OpenAI What is the other 0.01%? Guessing it’s things like node modules?

@OpenAIDevs Please just add a way to see the files in my project, and add support for multiple terminal windows. I think some of us just need a few basic things

@thsottiaux Image generation through codex would be cool, I have nano banana MCP connected but would be useful to get transparency power

Whenever we have a spike on the codex requests dashboard, we know what's going on. The alternative to agentic coding isn't coding by hand anymore.

ThePrimeagen@ThePrimeagen

we are about to hit 1 9 of availability while coding is largely solved

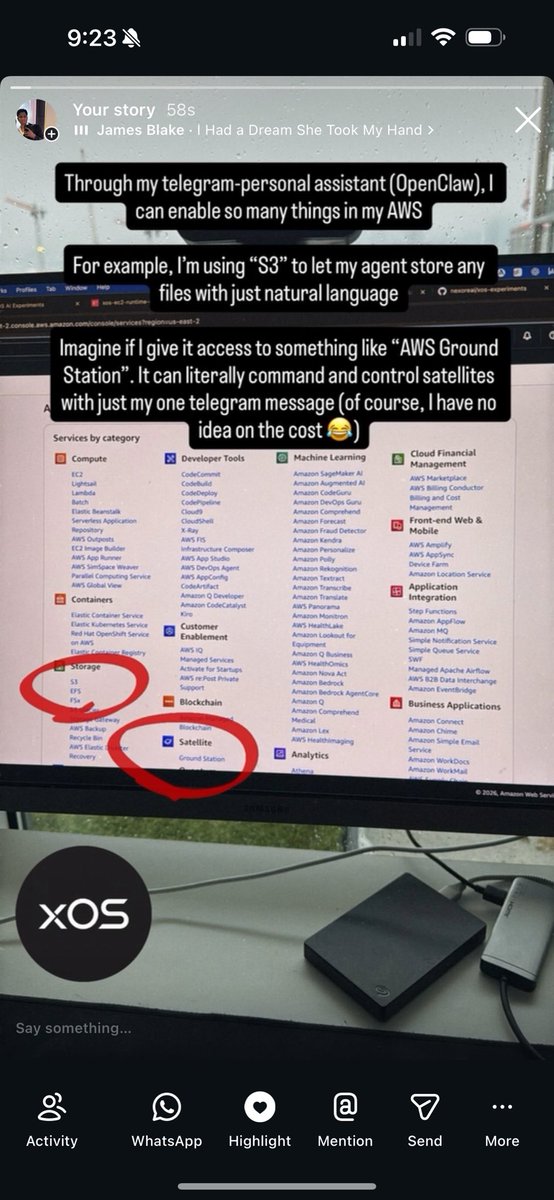

Through my telegram-personal assistant (@openclaw), I can enable so many things in my AWS.

For example, I'm using "S3" to let my agent store any files with just natural language.

Imagine if I give it access to something like "AWS Ground Station". It can literally command and control satellites with just my one telegram message.

#openclaw #agents

Introducing EVMbench—a new benchmark that measures how well AI agents can detect, exploit, and patch high-severity smart contract vulnerabilities. openai.com/index/introduc…

@thsottiaux It’s MUCH faster now. I don’t know if it’s intentional, but I’ve noticed Codex CLI is faster on my GCP VM instance than on my laptop

A small additional perk of the pro subscription is that it runs Codex 10-20% faster, on top of the ~60% speed improvement we shipped across the board last week.

Team is working hard to pack more into the existing subscriptions.

Tibo@thsottiaux

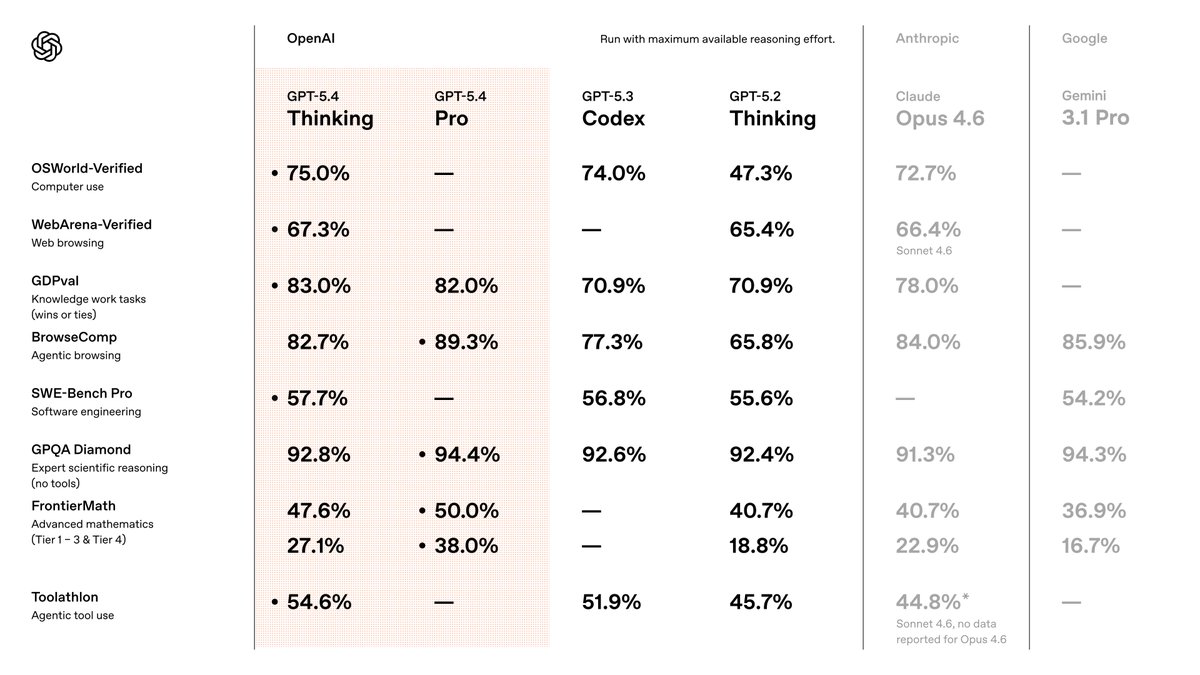

First time we combine SoTA on coding performance AND it is objectively the fastest, thanks to a combination of token-efficiency and inference optimizations. At high and xhigh reasoning effort, the two combine to make GPT-5.3-Codex ~60-70% faster than GPT-5.2-Codex from last week.
