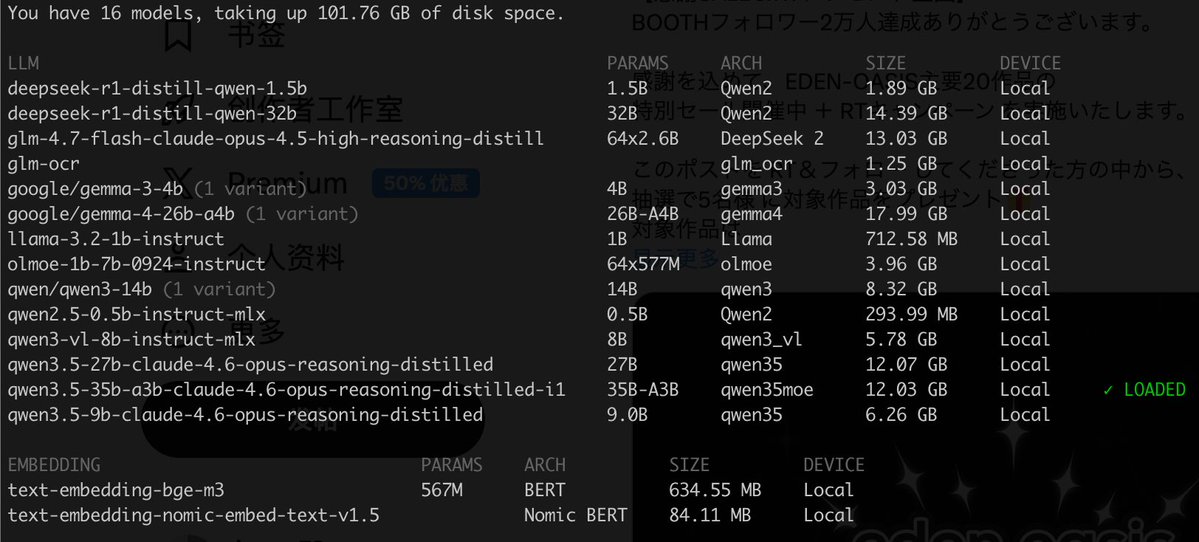

Gemma 4 26B-A4B is now ~2x faster at 375K context with TurboQuant on MLX-VLM v0.4.4 🚀

The model's official max context is 262K, but I pushed it to 375K anyway. That's roughly 5–6 full novels (the entire LOTR trilogy + The Hobbit).

Up to ~20K tokens, TurboQuant (TBQ) and the baseline are neck and neck. Beyond that, TBQ dominates, with ~1GB of memory savings.

KV cache savings are modest (4–17%) because only 5 of the 30 layers get compressed. But those 5 layers dominate decode time at long contexts, so the speed gains are massive.

Device: M3 Max 96GB
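A rough way to see why compressing only some layers gives modest total KV savings: the overall saving is the compressed layers' share of the cache times how much each of them shrinks. The sketch below is only back-of-the-envelope arithmetic, not how MLX-VLM or TurboQuant computes anything. The function name `overall_kv_savings`, the per-layer reduction values, and the assumption that all 30 layers hold equal-sized caches are hypothetical, chosen for illustration.

```python
def overall_kv_savings(compressed_share: float, per_layer_reduction: float) -> float:
    """Fraction of total KV memory saved when only part of the cache is compressed.

    compressed_share: fraction of total KV memory held by the compressed layers.
    per_layer_reduction: fraction by which those layers' cache shrinks.
    """
    if not (0.0 <= compressed_share <= 1.0 and 0.0 <= per_layer_reduction <= 1.0):
        raise ValueError("both inputs must be fractions in [0, 1]")
    return compressed_share * per_layer_reduction


# ASSUMPTION: every layer holds an equal slice of the cache, so 5 of 30
# layers own 5/30 of the KV memory. Real per-layer sizes may differ.
share = 5 / 30

# ASSUMPTION: illustrative per-layer reductions, not measured values.
for reduction in (0.25, 0.50, 0.75):
    saved = overall_kv_savings(share, reduction)
    print(f"per-layer reduction {reduction:.0%} -> overall saving {saved:.1%}")
```

Under these assumptions the total saving can never exceed the compressed layers' share of the cache, no matter how aggressive the quantization is, which is why memory savings stay small even when decode speed improves a lot.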

We just released Gemma 4 — our most intelligent open models to date. Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows. Released under a commercially permissive Apache 2.0 license so anyone can build powerful AI tools. 🧵↓