Shawn Simister

4K posts

@narphorium

Building AI powered tools to augment human creativity and problem solving. Previously @GitHub and @Google 🇨🇦

San Francisco · Joined April 2007

2.6K Following · 2.1K Followers

Pinned Tweet

I've been thinking about why verifying AI agent output feels so much harder than writing the spec that produced it. That question led me to rethink where my attention actually belongs in the process, and eventually to build atelier.dev narphorium.com/blog/decision-…

Shawn Simister retweeted

thinking about the idea of a 'method' as an abstraction layer above using 'skills' when working with coding agents

while versatile, it's a pain having to invoke individual skills, often manually when the agent pauses between chunks of work. you're babysitting when you already have a pretty well defined process.

debugging, prototyping, architecting, brainstorming, etc. using a single skill is too blunt an instrument

what if you instead apply a method, which is a modular sequence of steps, each with multiple, customizable skills assigned to it.

you can configure how in the loop you want to be for any given method. for conceptual / planning methods, you want to be a lot more hands on. execution steps can be autopiloted.

methods could be self-improving and composable, steps could be run in parallel, blah blah... anyways, i'm building this into Capacitor so will find out soon if this is a useful abstraction or not.
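One way to picture the "method" idea above is as a small data structure: an ordered list of steps, each holding several skills, with a per-step setting for how hands-on the human wants to be. This is only an illustrative sketch, not how Capacitor implements it; `Oversight`, `Step`, `Method`, the `approve` callback, and the toy skills are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Tuple

# A "skill" here is just a function from text to text (an assumption for the sketch).
Skill = Callable[[str], str]


class Oversight(Enum):
    HANDS_ON = "hands_on"    # human reviews the step's result before continuing
    AUTOPILOT = "autopilot"  # step runs without a checkpoint


@dataclass
class Step:
    name: str
    skills: List[Skill]
    oversight: Oversight = Oversight.AUTOPILOT


@dataclass
class Method:
    name: str
    steps: List[Step] = field(default_factory=list)

    def run(
        self,
        task: str,
        approve: Callable[[Step, str], bool] = lambda step, result: True,
    ) -> Tuple[str, List[Tuple[str, str]]]:
        """Run each step's skills in order; pause for approval on hands-on steps."""
        state = task
        log: List[Tuple[str, str]] = []
        for step in self.steps:
            for skill in step.skills:
                state = skill(state)
            if step.oversight is Oversight.HANDS_ON and not approve(step, state):
                log.append((step.name, "rejected"))
                break
            log.append((step.name, "done"))
        return state, log


# Toy skills standing in for real agent skills.
def brainstorm(text: str) -> str:
    return text + " -> ideas"


def outline(text: str) -> str:
    return text + " -> plan"


def implement(text: str) -> str:
    return text + " -> code"


prototyping = Method(
    "prototyping",
    [
        Step("plan", [brainstorm, outline], Oversight.HANDS_ON),
        Step("build", [implement]),  # execution step left on autopilot
    ],
)

result, log = prototyping.run("todo app")
```

Passing a different `approve` callback changes how in the loop the human is without touching the steps themselves, which is roughly the configurability the post describes.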

@imjaredz I wrote about that as well … narphorium.com/blog/structure…

Shawn Simister retweeted

Typical chatbots force co-writers to leave shared docs. Our #CHI2026 paper explores collaborative AI use in shared docs via 3 features:

🤖 Shared agent profiles

☑️ Repeatable tasks, triggered by users or system

💬 Agents respond in shared comments

Preprint in 🧵

w @flolehmann_de

Shawn Simister retweeted

Anthropic shipped generative UI for Claude. I reverse-engineered how it works and rebuilt it for PI.

Extracted the full design system from a conversation export. Live streaming HTML into native macOS windows via morphdom DOM diffing.

Article: michaellivs.com/blog/reverse-e…

Repo: github.com/Michaelliv/pi-…

Built on @badlogicgames's pi and @DanielGri's Glimpse.

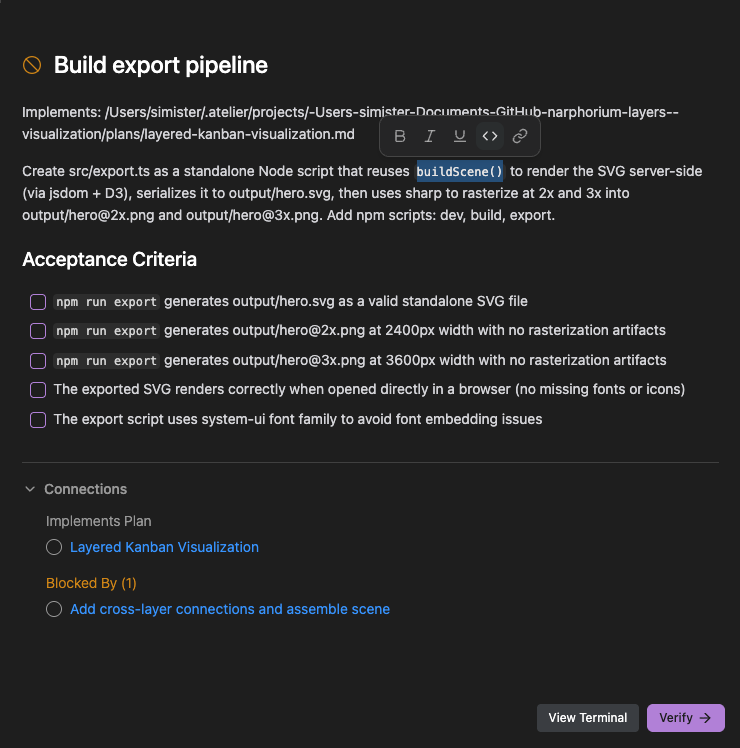

The biggest change in Atelier.dev v0.2 is that specs now open in a custom Markdown editor. So now every task gets its own "plan mode"

Shawn Simister retweeted

Excited to share our #CHI2026 paper “Texterial: A Text-as-Material Interaction Paradigm for LLM-Mediated Writing” (done during internship at Microsoft Research)

We imagine interacting with LLMs by treating text as a material like plants/clay.

📃arxiv.org/pdf/2603.00452 🧵[1/n]

@playwhatai Tests are a big part of it for sure. The trick is making sure they're testing the right things. Having clear acceptance criteria helps a lot with that

@narphorium vibe coding + tests = survivable. without tests = pain

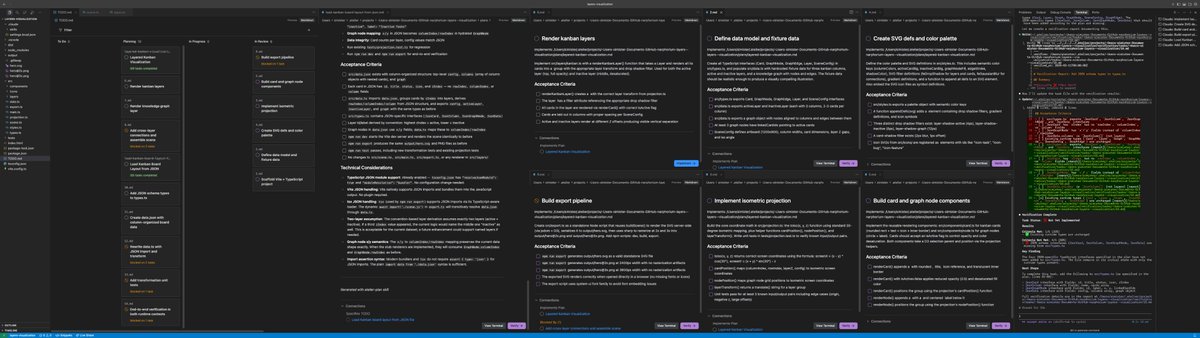

The new Agent Teams feature in Claude Code lets multiple agents work simultaneously. Fun watching the atelier.dev cards update automatically as each finishes: code.claude.com/docs/en/agent-…
