Robin Jia

338 posts

@robinomial

Assistant Professor @CSatUSC | Previously Visiting Researcher @facebookai | Stanford CS PhD @StanfordNLP

𝗣𝗿𝗶𝘃𝗮𝘁𝗲 𝘀𝘆𝗻𝘁𝗵𝗲𝘁𝗶𝗰 𝘁𝗲𝘅𝘁 𝗴𝗲𝗻𝗲𝗿𝗮𝘁𝗶𝗼𝗻 has had the same problem for a while: privacy, quality, or efficiency - pick two 😵💫 We think 𝐄𝐏𝐒𝐕𝐞𝐜 changes that 🚀 Paper: arxiv.org/abs/2602.21218

1+1=3 2+2=5 3+3=? Many language models (e.g., Llama 3 8B, Mistral v0.1 7B) will answer 7. But why? We dig into the model internals, uncover a function induction mechanism, and find that it’s broadly reused when models encounter surprises during in-context learning. 🧵

In our recent NeurIPS 2024 paper (openreview.net/forum?id=i4Mut…), we find that pretrained LLMs use Fourier features to add numbers (some have recently called it a helix). Is this representation truly so powerful that LLMs naturally prefer it? Introducing FoNE (Fourier Number Embedding): one token is all you need to encode any number, precisely. 🖇️Blog post: fouriernumber.github.io
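A minimal sketch of what a Fourier-style number encoding could look like, not the paper's implementation. It assumes one (cos, sin) pair per decimal digit position, with periods 10, 100, 1000, and so on; the function names `fourier_encode` and `fourier_decode` and the `num_digits` parameter are hypothetical and chosen only for illustration.

```python
import math


def fourier_encode(x, num_digits=5):
    """Encode a non-negative integer as (cos, sin) pairs, one per period 10**i.

    Assumption: periods are powers of 10, so pair i carries x mod 10**i.
    """
    feats = []
    for i in range(1, num_digits + 1):
        period = 10 ** i
        angle = 2 * math.pi * (x % period) / period
        feats.append((math.cos(angle), math.sin(angle)))
    return feats


def fourier_decode(feats):
    """Recover the integer digit by digit from the (cos, sin) pairs."""
    x = 0
    for i, (c, s) in enumerate(feats, start=1):
        period = 10 ** i
        # Map the angle back to [0, 2*pi), then to a residue modulo the period.
        angle = math.atan2(s, c) % (2 * math.pi)
        residue = round(angle * period / (2 * math.pi)) % period
        # The leading digit of the residue is the digit at position i.
        digit = residue // 10 ** (i - 1)
        x += digit * 10 ** (i - 1)
    return x
```

In this sketch, each pair pins down one decimal digit, so the number round-trips through a fixed-size vector as long as it has at most `num_digits` digits.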

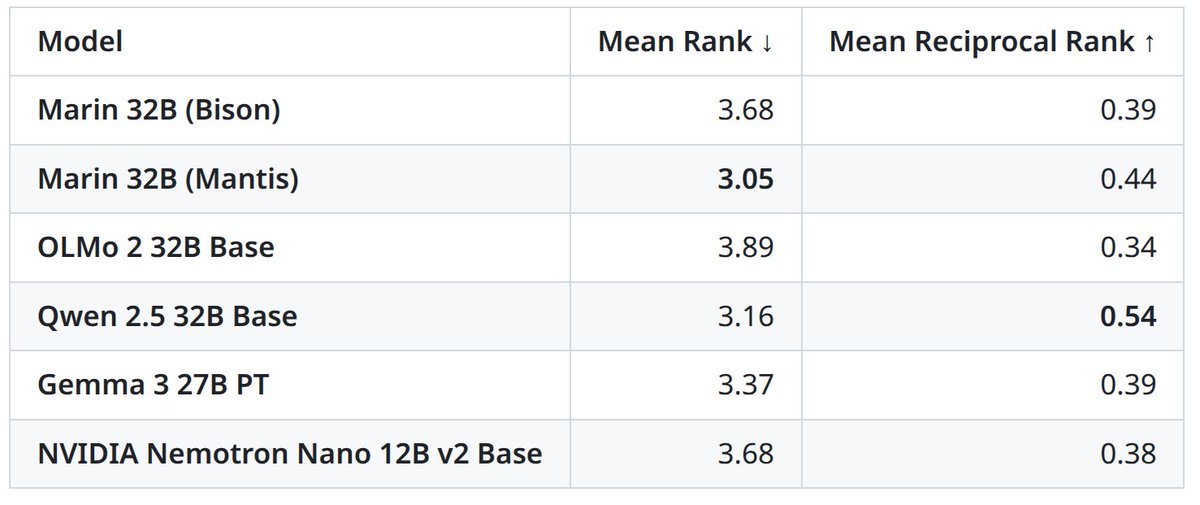

LLM as a judge has become a dominant way to evaluate how good a model is at solving a task, since it works without a test set and handles cases where answers are not unique. But despite how widely this is used, almost all reported results are highly biased. Excited to share our preprint on how to properly use LLM as a judge. 🧵

===

So how do people actually use LLM as a judge? Most people just use the LLM as an evaluator and report the empirical probability that the LLM says the answer looks correct. When the LLM is perfect, this works fine and gives an unbiased estimator. If the LLM is not perfect, this breaks.

Consider a case where the LLM evaluates correctly 80 percent of the time. More specifically, if the answer is correct, the LLM says "this looks correct" with 80 percent probability, and if the answer is actually incorrect, it says "this looks incorrect" with the same 80 percent probability (so it wrongly says "correct" 20 percent of the time). In this situation, you should not report the empirical probability, because it is biased.

Why? Let the true probability of the tested model being correct be p. Then the empirical probability that the LLM says "correct" (= q) is

q = 0.8p + 0.2(1 - p) = 0.2 + 0.6p

So the unbiased estimate should be

(q - 0.2) / 0.6

Things get even more interesting if the error pattern is asymmetric or if you do not know these error rates a priori.

===

So what does this mean?

First, follow the suggested guideline in our preprint. There is no free lunch. You cannot evaluate how good your model is unless your LLM as a judge is known to be perfect at judging it. Depending on how close it is to a perfect evaluator, you need a sufficiently large test set (= calibration set) to estimate the evaluator's error rates, and then you must correct for them.

Second, very unfortunately, many findings we have seen in papers over the past few years need to be revisited. Unless two papers used the exact same LLM as a judge, comparing results across them could have produced false claims. The improvement could simply come from changing the evaluation pipeline slightly. A rigorous meta study is urgently needed.

===

tldr:
(1) Almost all LLM-as-a-judge evaluations in the past few years were reported with a biased estimator.
(2) It is easy to fix, so wait for our full preprint.
(3) Many LLM-as-a-judge results should be taken with a grain of salt.

Full preprint coming in a few days, so stay tuned! Amazing work by my students and collaborators. @chungpa_lee @tomzeng200 @jongwonjeong123 and @jysohn1108
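The correction described in the thread can be sketched in a few lines of Python. This is only an illustration of the arithmetic above, not the preprint's procedure. The function names, and the generalization to separate rates `tpr` (judge says "correct" when the answer is correct) and `fpr` (judge says "correct" when the answer is incorrect), are assumptions; the defaults of 0.8 and 0.2 match the symmetric example in the thread.

```python
def observed_rate(p, tpr=0.8, fpr=0.2):
    """Probability q that the judge says 'correct' when true accuracy is p."""
    return tpr * p + fpr * (1 - p)


def corrected_accuracy(q, tpr=0.8, fpr=0.2):
    """Invert q = tpr*p + fpr*(1-p) to recover p from the judge's raw rate q."""
    if tpr == fpr:
        raise ValueError("judge verdicts carry no information when tpr == fpr")
    return (q - fpr) / (tpr - fpr)


def estimate_error_rates(judge_says_correct, truly_correct):
    """Estimate (tpr, fpr) from a labeled calibration set (hypothetical helper).

    Both arguments are equal-length sequences of booleans or 0/1 values.
    """
    on_correct = [j for j, t in zip(judge_says_correct, truly_correct) if t]
    on_incorrect = [j for j, t in zip(judge_says_correct, truly_correct) if not t]
    if not on_correct or not on_incorrect:
        raise ValueError("calibration set needs both correct and incorrect answers")
    tpr = sum(on_correct) / len(on_correct)
    fpr = sum(on_incorrect) / len(on_incorrect)
    return tpr, fpr
```

With the thread's numbers, a model whose true accuracy is 0.8 gets a raw judge score of about 0.68, and `corrected_accuracy(0.68)` recovers roughly 0.8. With a small calibration set the estimated rates are themselves noisy, which is why the thread stresses a sufficiently large one.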

🔥 Excited to share that our work (with @robinomial) got accepted to main conf at #EMNLP2025!

We are building a research stack on top of Hubble 🔭! TokenSmith consolidates our code used to perturb our datasets and lets you view, edit, and search through pretraining data. Work led by @Aflah02101 and @ameya_godbole1, Aflah will be at #EMNLP2025 !! aflah02.github.io/TokenSmith/

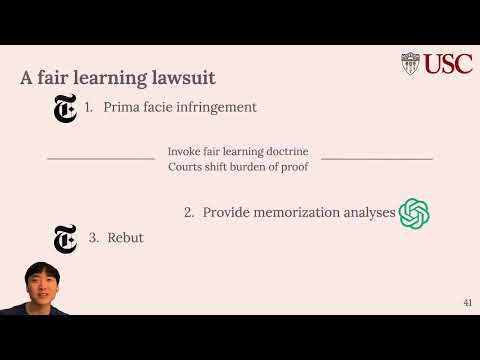

Announcing 🔭✨Hubble, a suite of open-source LLMs to advance the study of memorization! Pretrained models up to 8B params, with controlled insertion of texts (e.g., book passages, biographies, test sets, and more!) designed to emulate key memorization risks 🧵
