R.Yana

389 posts

@ulo_fd

AIST. Research interests: Multimodal × User-centric, CV, V&L

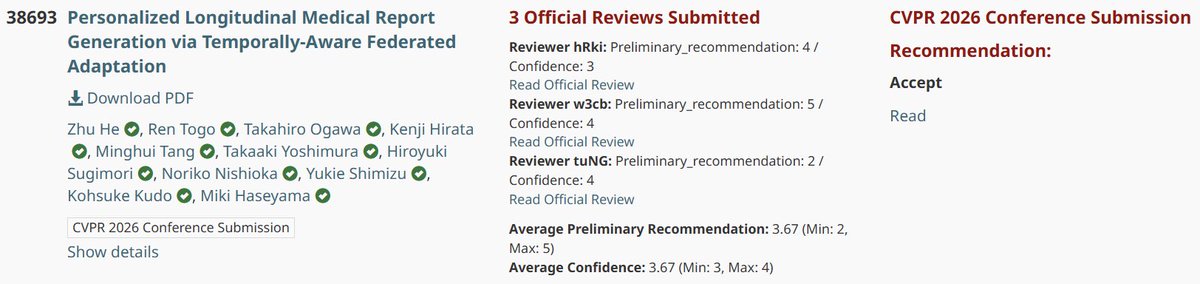

Our paper "CLIP-like Model as a Foundational Density Ratio Estimator" has been accepted to #CVPR2026 (main)🎉 We reinterpreted CLIP/SigLIP as a density ratio estimator and proposed applications to transfer learning and a semantic diversity metric for an image! arxiv.org/abs/2506.22881

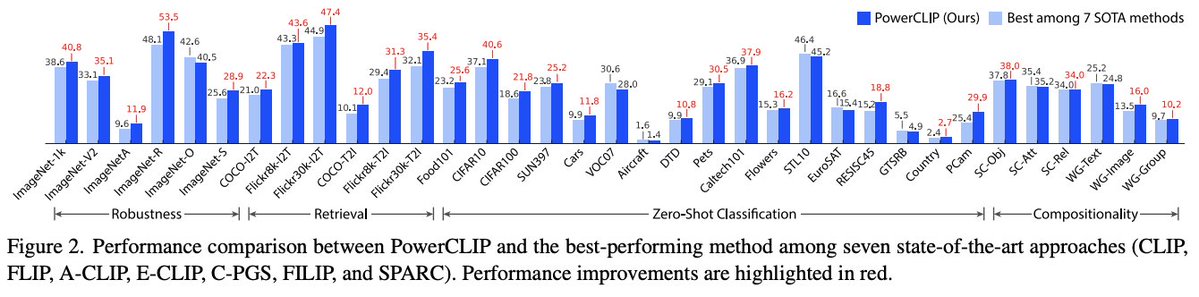

🚀 New arXiv preprint! PowerCLIP is the first method to align **powersets of image region subsets with textual phrase structures**, enabling fine-grained compositional and robust image-text understanding beyond simple global or token-to-patch alignment.

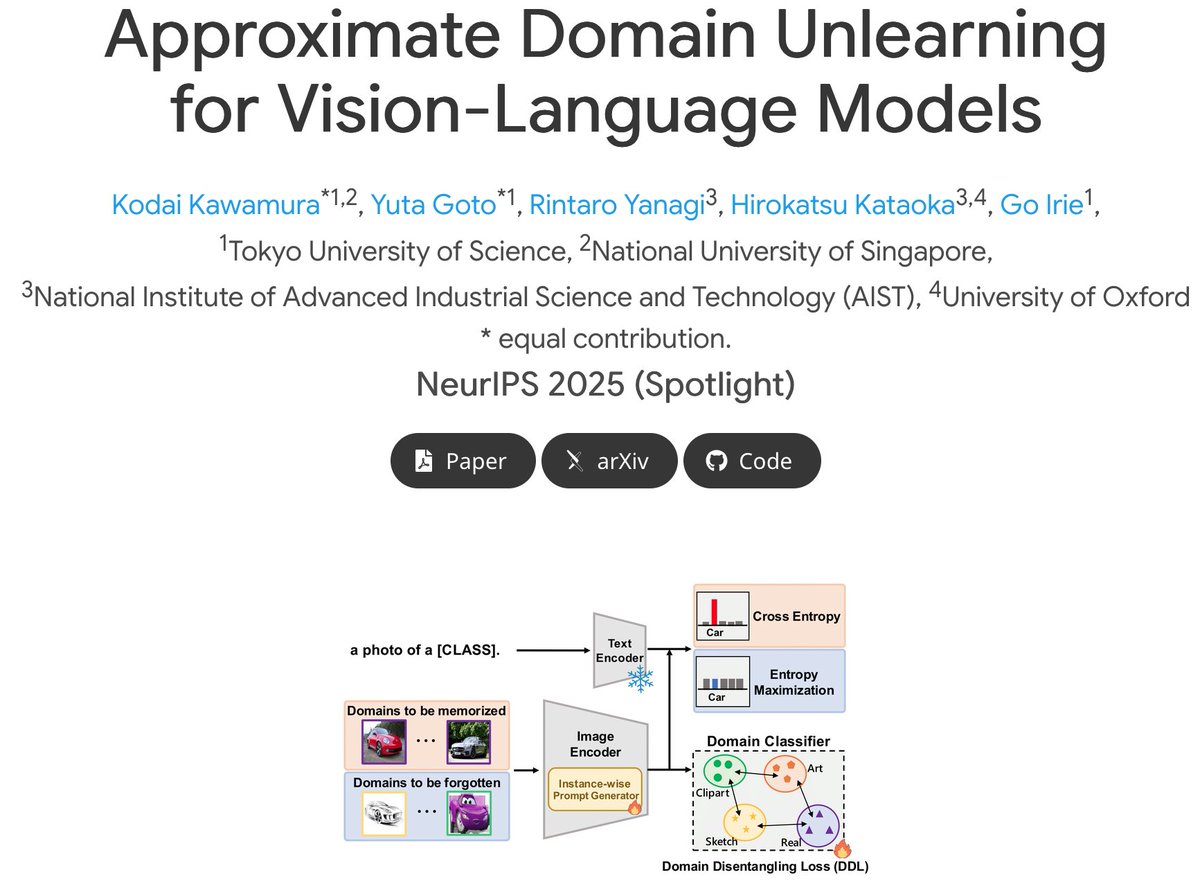

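[Research] Toward AI that "forgets" even better: a world-first technique that can make a model forget learned knowledge one domain at a time ~ Preventing unwanted misrecognition for more trustworthy AI ~ tus.ac.jp/today/archive/… ▼ Associate Professor Go Irie ▼ tus.ac.jp/ridai/doc/ji/R… #東京理科大学 (Tokyo University of Science) #TUS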

🚀 New arXiv preprint! PowerCLIP is the first method to align **powersets of image region subsets with textual phrase structures**, enabling fine-grained compositional and robust image-text understanding beyond simple global or token-to-patch alignment.