Will Finger

890 posts

@willfi

Product Designer, Web3 On-chain, AI engineer. Married. Father. Learning love with Jesus.

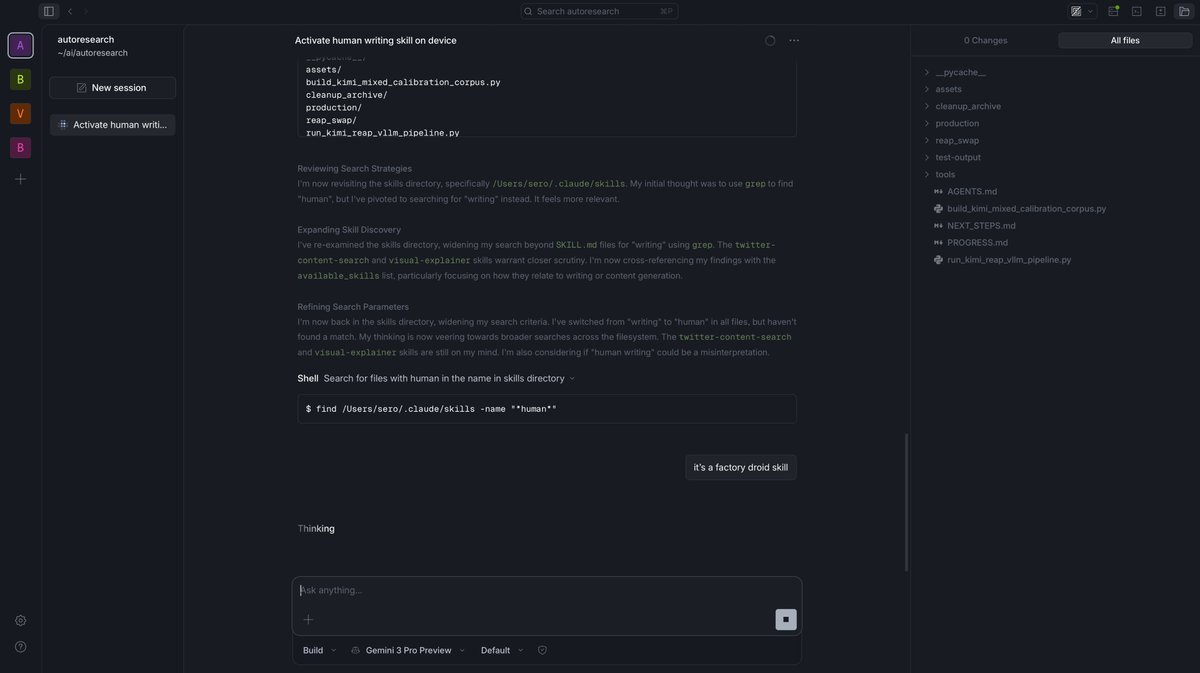

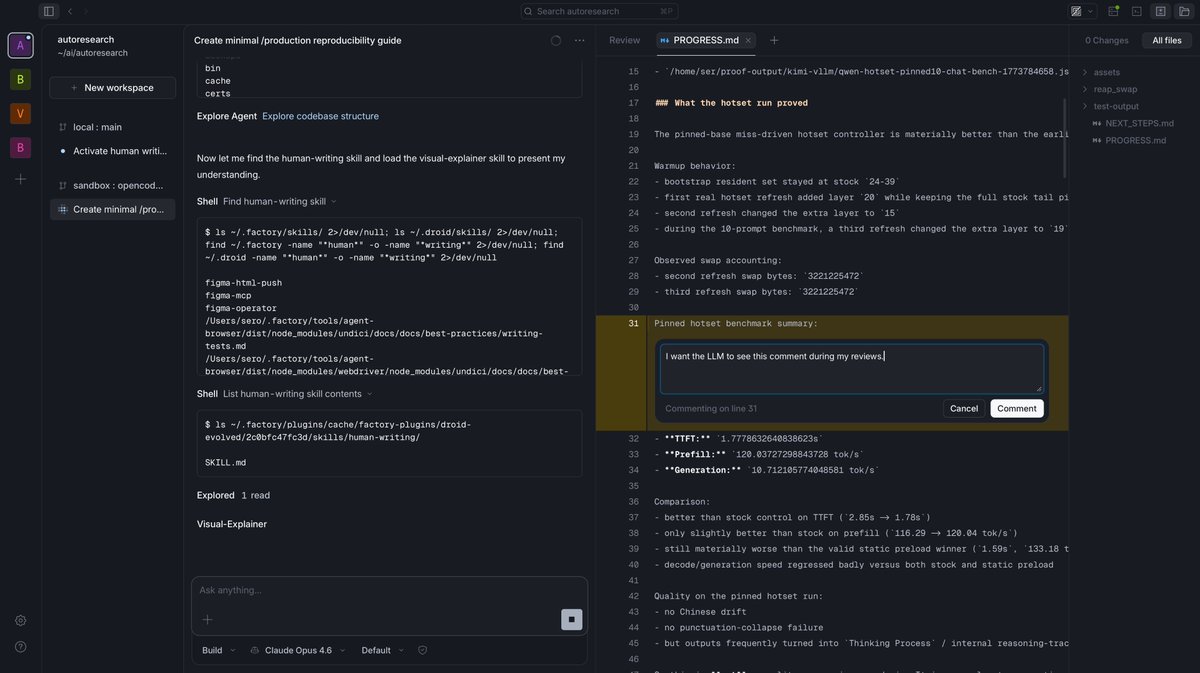

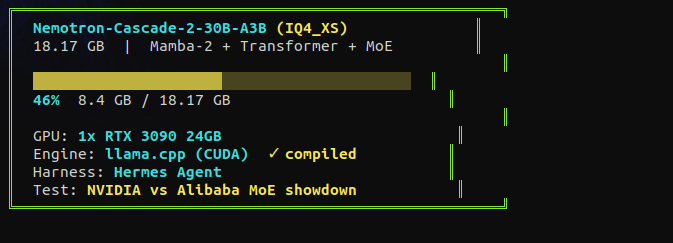

the hype around this model settled fast. good. now i can test it without the noise. NVIDIA released nemotron cascade. 30B total, 3B active. fits on a single RTX 3090. hybrid mamba MoE. gold medal on the international math olympiad with only 3 billion active parameters. they say it beats qwen on math, code, and reasoning. i tested qwen 3.5 35B-A3B on a single 3090 at 112 tok/s. now same card, same tests, different architecture. mamba vs deltanet. nvidia vs alibaba. receipts incoming tonight.
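a quick back-of-envelope check on the "fits on a single RTX 3090" claim, as a rough sketch. it assumes the 3090's 24 GB of VRAM and counts weights only, ignoring KV cache, activations, and quantization overhead, so real usage will be higher. it also assumes all 30B parameters stay resident on the GPU even though only 3B are active per token, which is the usual case for an MoE model without offloading. the bit-widths are illustrative, not the settings used in any test here.

```python
def weight_memory_gb(total_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return total_params * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 30e9  # "30B total" from the post
VRAM_GB = 24         # RTX 3090 memory size (assumption stated above)

# Illustrative bit-widths: full half precision, 8-bit, and 4-bit quantization.
for bits in (16, 8, 4):
    need = weight_memory_gb(TOTAL_PARAMS, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{bits}-bit weights: ~{need:.0f} GB -> {verdict} in {VRAM_GB} GB")
```

under these assumptions only the roughly 4-bit case leaves room on a 24 GB card, and whatever is left over has to cover context.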

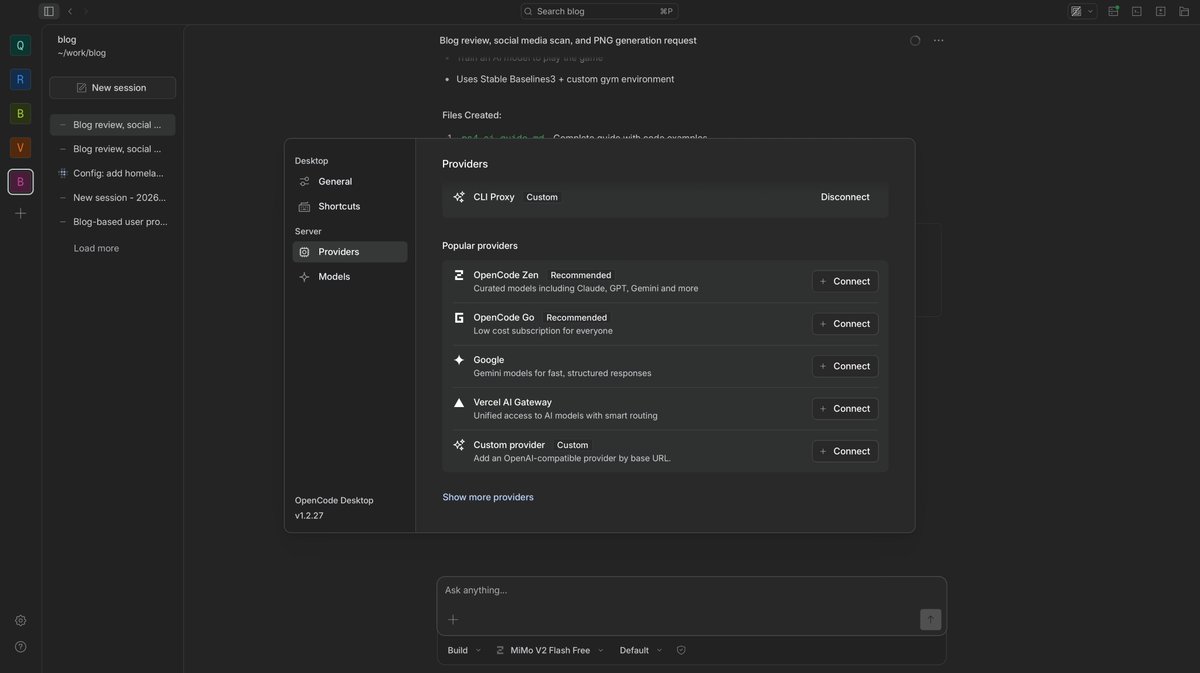

I cracked it. Qwen 3.5 35B Local! 120 t/s generation. 120K context. Vision ENABLED. All on 16GB VRAM. All GPU. Zero compromise. Here's exactly how 👇

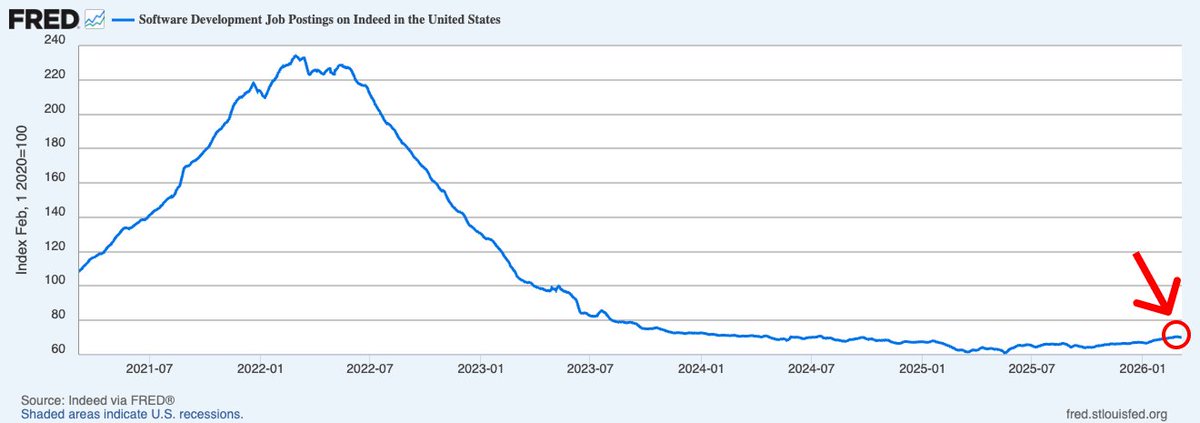

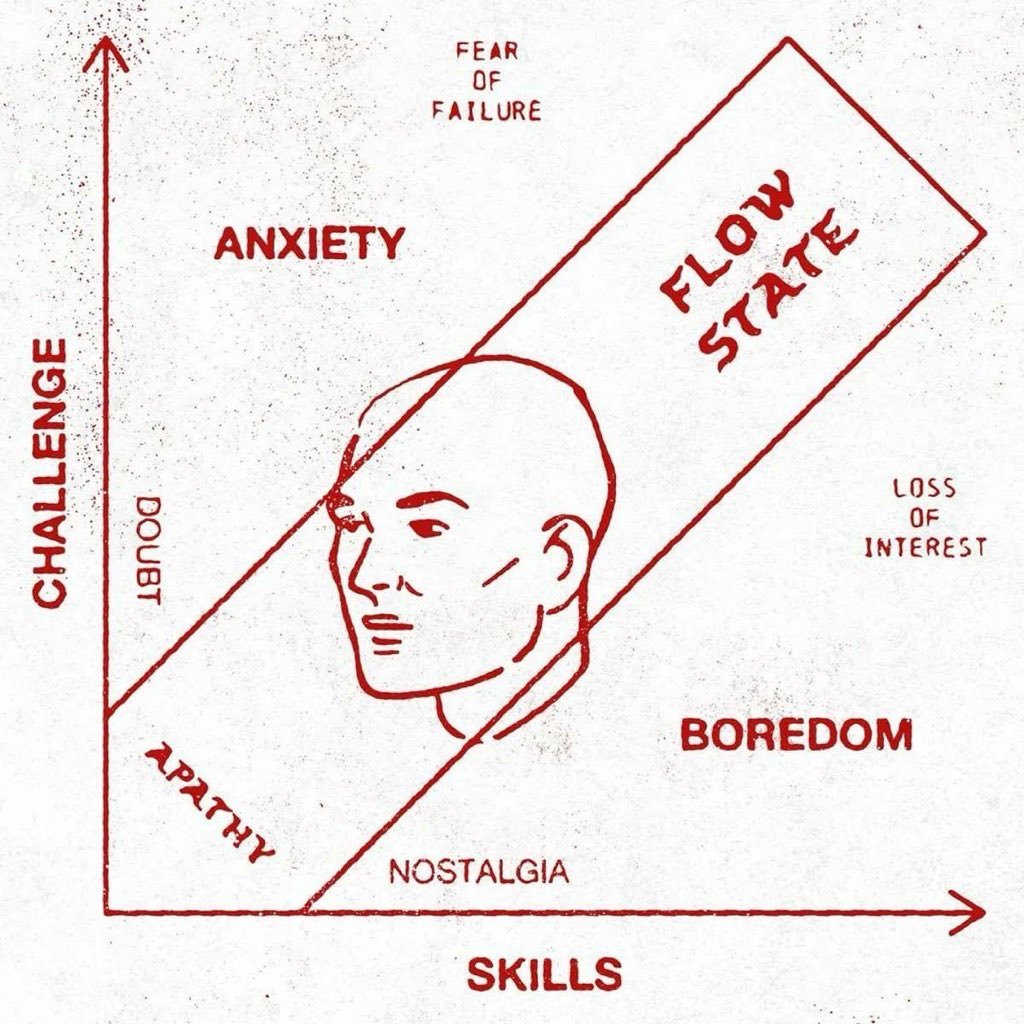

I don’t know exactly what’s going on here, but it does feel AI-related. Unlike PM and eng, which started growing in 2024 (two years post-ChatGPT), design didn’t. If I had to venture a theory, I’d say that because AI is allowing engineers to move so quickly, there’s less opportunity—and less desire—to involve the traditional design process. That said, you’d think design would become a differentiator as more products compete for attention. Something to think about for your company! We’ll keep watching this trend and AI’s impact on org design more generally. One interesting observation we made when we went a level deeper: the ratio of demand for PMs vs. designers has flipped. In mid-2023, we went from more open designer roles to more open PM roles. And ever since, PM demand has been pulling away (currently 1.27x). This will be another trend to monitor, in terms of how AI is reshaping org design.

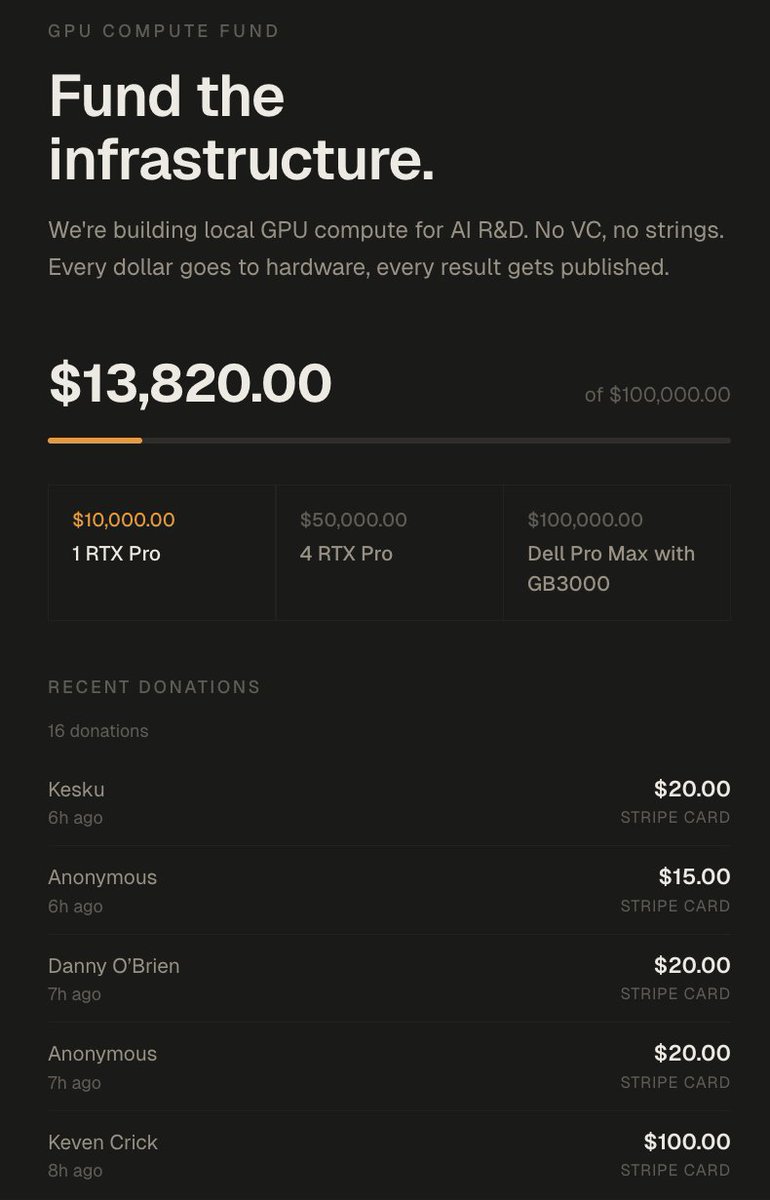

let me get you started in local AI and bring you to the edge.

if you have a GPU or are thinking about diving into the local LLM rabbit hole, the first thing to do before any setup is join x/LocalLLaMA. this is the community that will help you at every step. post your issue and we will direct you, debug with you, and save you hours of work.

once you're in, follow these three:

@TheAhmadOsman, the oracle. this is where you consume the latest edges in infrastructure and AI. if something drops, you hear it from him first. his content alone will keep you ahead of most.

@0xsero, a one-man army when it comes to model compression, novel quantization research, and new tools and tricks that make your local setup better. you will learn, experiment, and discover things you didn't know existed.

@Teknium, maker of Hermes Agent, the agent from @NousResearch that i use every day. from Teknium you don't just stay at the frontier, you get your hands on the tools before everyone else. this is where things are headed.

if you follow me, follow these three and join the community. you will be ahead of most people in this space. if you run into wrong configs, get stuck debugging hardware, or can't get a model to load, post there so we can help.

get started with local AI now. don't just understand the stack, own your cognition. don't pay openai fees on top of giving them your prompts, your research, and your most valuable thinking to be monitored and metered. buy a GPU and build your own token factory.