Basis

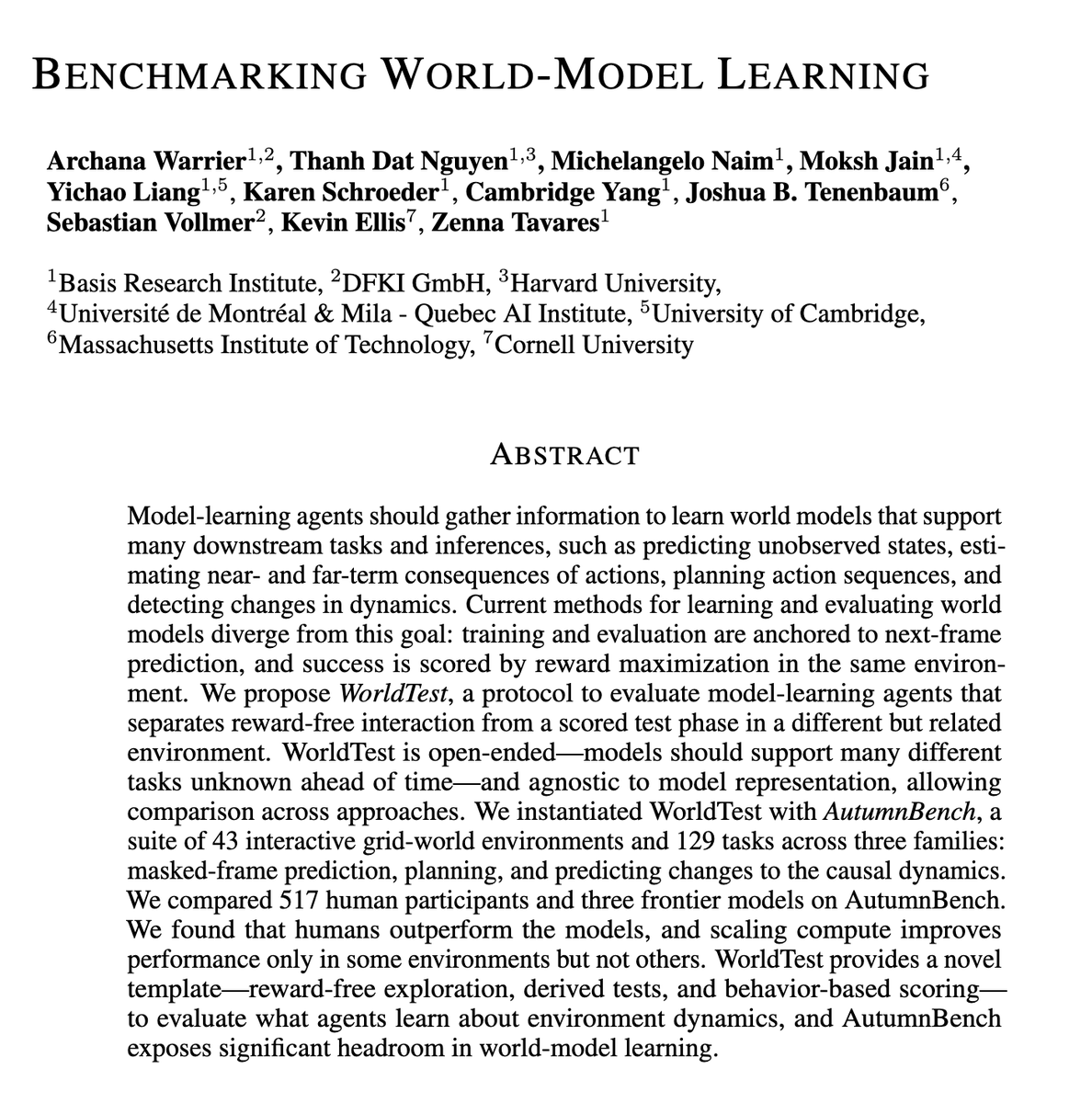

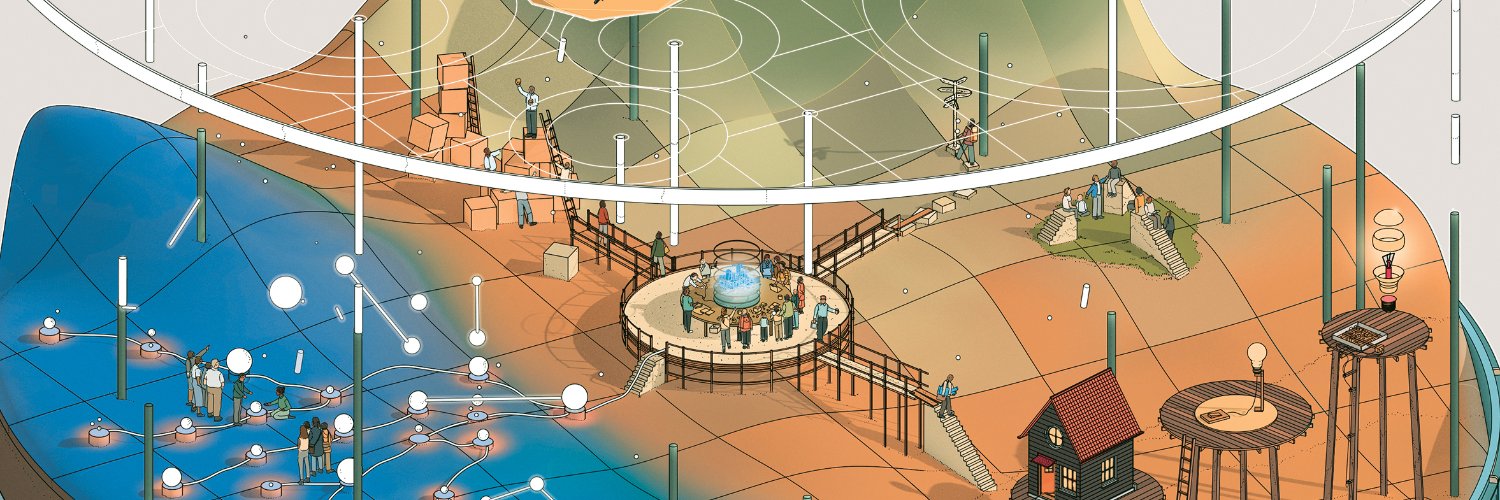

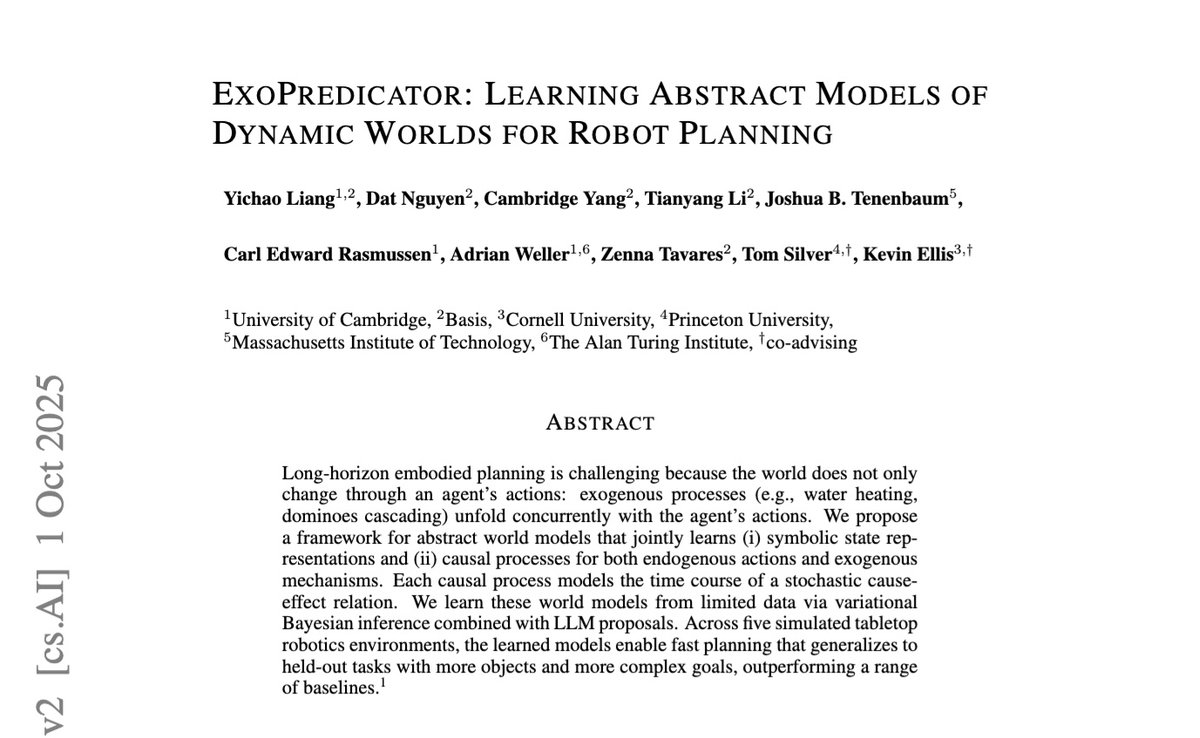

🔥 MIT just exposed every top AI model, and it’s not pretty. They built a new test called WorldTest to see if AI actually understands the world… and the results are brutal.

It doesn’t just check how well a model predicts the next frame or maximizes reward. It tests whether the model can build an internal model of reality and use it to handle new situations.

They built AutumnBench: 43 interactive worlds and 129 tasks where AIs must:

• Predict hidden parts of the world (masked-frame prediction)
• Plan sequences of actions to reach a goal
• Detect when the environment’s rules suddenly change

Then they tested 517 humans vs. Claude, Gemini 2.5 Pro, and o3. Humans crushed every model. Even massive compute scaling barely helped.

The takeaway is wild: today’s AIs don’t understand environments; they just pattern-match inside them. They don’t explore strategically, revise beliefs, or run experiments like humans do.

WorldTest might be the first benchmark that actually measures understanding, not memorization. The gap it reveals isn’t small. It’s the next grand challenge in AI cognition.

(Comment “Send” and I’ll DM you the paper.)