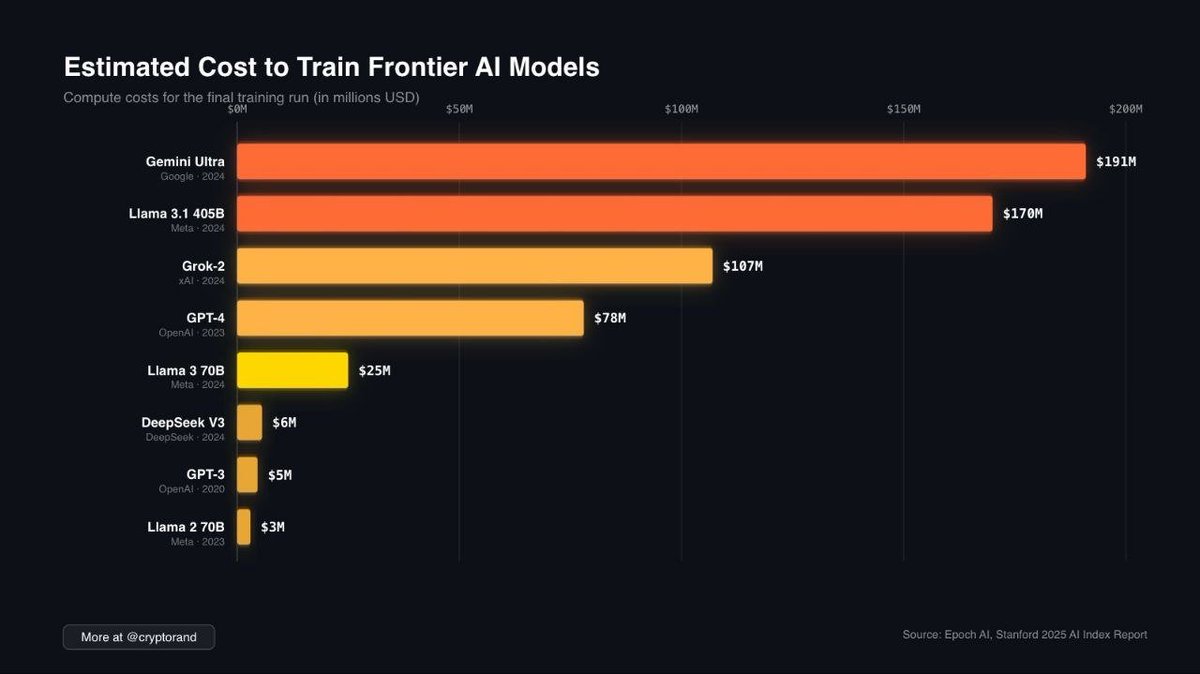

While companies like Google, Meta, and OpenAI are burning $100M–$200M to train their AI models...

0G Labs has trained a 107B-parameter model

→ not in some hyperscale data center

→ but using standard consumer internet infra

→ and did it 8 months ago

That’s roughly 49% larger than Bittensor’s recent 72B model.

If @0G_labs can pull this off this early, imagine what's possible once decentralized compute markets mature.
