Py_nk


My read on "normal policymaker & corp. leader on AI": mostly they no longer need to be convinced it is very important (unlike a year ago). But they still see its capabilities as today + epsilon. So, briefly, here is what even the "AI is normal tech" folks in the labs believe: 1/8

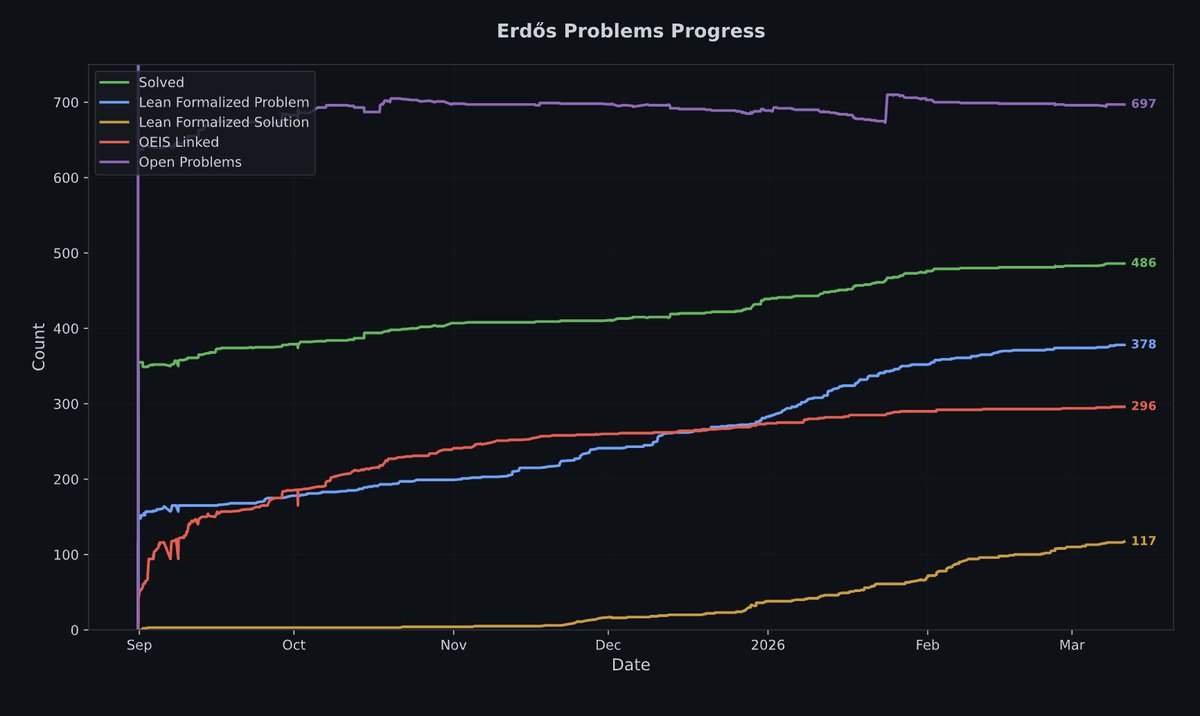

🦾 Meet Aristotle Agent, the world’s first autonomous mathematician: live and currently free of charge.
We designed Aristotle Agent to solve and formalize the world’s most challenging mathematical research problems. It is now:
☑️ #1 in Formal Math: We’re the #1 formal math model according to ProofBench, by @ValsAI, ahead of the closest competitor by 15%. Aristotle Agent can autonomously prove/formalize for up to 24 hrs without human intervention.
☑️ Fully Agentic: Give it an English problem and it will prove/formalize from scratch, or it can work on and edit files directly inside your Lean project / repository.
☑️ GitHub-ready: Aristotle Agent produces repo-quality code; project leads are increasingly merging Aristotle-drafted PRs with no modifications.
Now live across web, CLI, and API. 🔥

Three.js + WebGPU = a modern Flash games boom
> Ships to 5B+ users, near-native GPU performance
> No platform rake, app store, or custom runtime
> Devs own their distribution + monetisation
> AI can now vibe code the games for you
The only missing piece is the discovery layer.

I would like to purchase a handful of code problems that modern LLMs can’t solve.
Requirements:
- programmatically verifiable (can be tested without human interaction)
- “before” state (repo before the commit that implements the solution)
- example code that actually solves the problem
I am willing to pay up to $500 per problem that I can easily test locally and confirm current models (gpt-5.3-codex, opus 4.6) are unable to solve.
If you can’t tell, I’m running out of “too hard for LLM” code tasks 🙃🙃🙃
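Taken together, the requirements above describe a fail-before / pass-after check: the tests should fail on the "before" state and pass once the example solution is applied, with no human in the loop. Below is a minimal sketch of such a check. It assumes a task is stored as a dict of file contents (the "before" repo) plus a dict of replacement files (the solution), and that a single test command's exit status decides pass/fail. `run_tests`, `is_valid_task`, and the toy `mathutil.py` task are hypothetical illustrations, not the poster's actual tooling.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_tests(repo_files: dict[str, str], test_cmd: list[str]) -> bool:
    """Write a repo snapshot to a temp dir, run the test command there,
    and report whether it exited with status 0 (no human interaction)."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for rel_path, content in repo_files.items():
            path = root / rel_path
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text(content)
        result = subprocess.run(test_cmd, cwd=root, capture_output=True)
        return result.returncode == 0


def is_valid_task(before: dict[str, str], solution: dict[str, str],
                  test_cmd: list[str]) -> bool:
    """Hypothetical validity rule: the task must fail on the 'before'
    state and pass once the solution's files are overlaid on it."""
    after = {**before, **solution}
    return not run_tests(before, test_cmd) and run_tests(after, test_cmd)


# Hypothetical toy task: a buggy add() plus a test script that exercises it.
BEFORE = {
    "mathutil.py": "def add(a, b):\n    return a - b\n",
    "test_add.py": "from mathutil import add\nassert add(2, 3) == 5\n",
}
SOLUTION = {"mathutil.py": "def add(a, b):\n    return a + b\n"}
TEST_CMD = [sys.executable, "test_add.py"]

if __name__ == "__main__":
    print(is_valid_task(BEFORE, SOLUTION, TEST_CMD))
```

To check a model under these assumptions, overlay its proposed files on `BEFORE` and call `run_tests`; the task only counts as "too hard" if that returns `False` while `is_valid_task` returns `True`.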

@kalomaze poweruserslop is a funny concept