Every civilization in history has operated within a defined set of rules. These rules aren't random; they emerge from how a society organizes its core resources. Land ruled the agricultural age. Machines dominated the industrial era. Now we're entering a new stage, one in which intelligence itself becomes the primary asset.

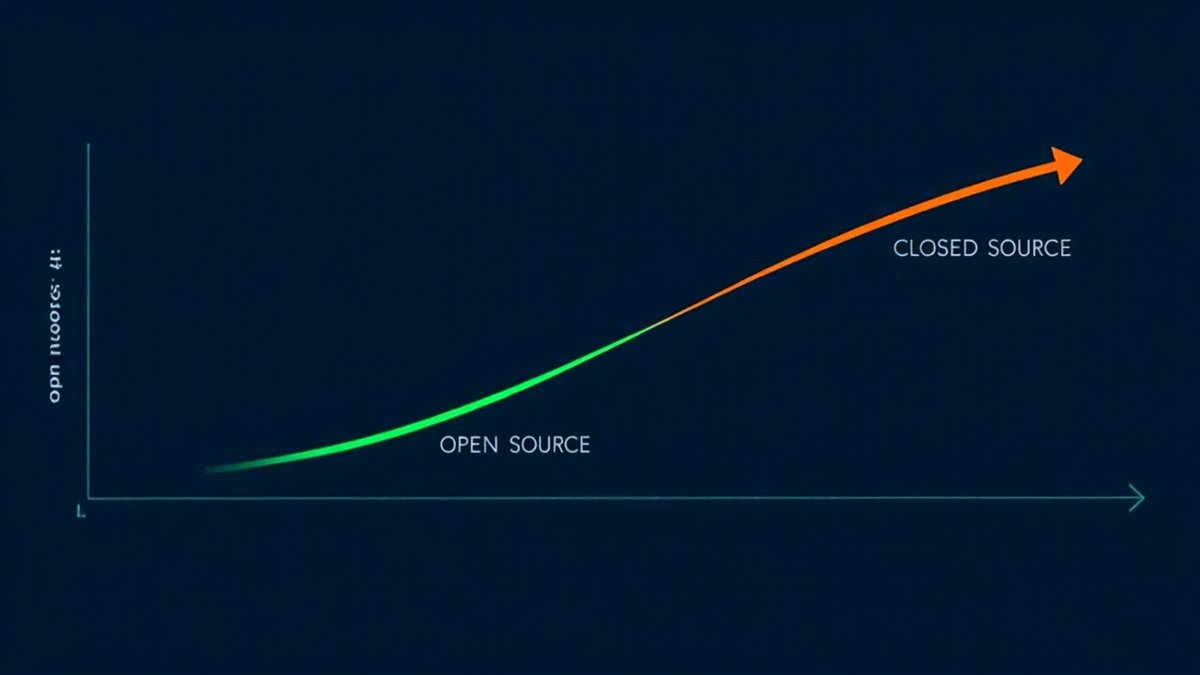

Here's what most people miss: AI isn't actually breaking these stages down. It's doing the opposite, locking each phase in place more firmly than ever before. The factory worker doesn't get uplifted by AI; they get replaced, and the replacement becomes cheaper. The small business doesn't compete with AI; it gets crushed.

This isn't dystopian. It's just physics. Every stage of civilization has its winners and its losers. The question isn't whether AI will disrupt. It's whether you understand which stage you're in, and whether your energy is flowing with the current or against it.
