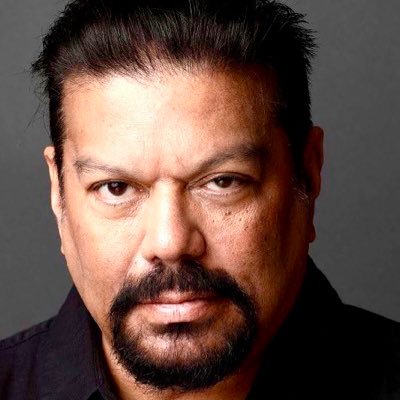

Arvind Singhal

@asinghal2004

Chairman, The Knowledge Company LLP

Marc Andreessen highlights why the people who work for Elon Musk echo the exact same sentiment as those who worked for Steve Jobs. Even after difficult interactions or a sudden departure, they inevitably report that they did the best work of their entire lives because they were pushed to their absolute limits. What drives this intense environment is a demand for truth-seeking at all costs. People who criticize Elon often miss this fundamental trait. He genuinely wants to know the ground truth and has zero tolerance for anything else. When confronting bad news, he is absolutely ruthless and relentless in making sure he understands exactly what is actually going on. This level of radical transparency is shockingly rare in the business world. The typical startup founder operates on forced optimism, constantly putting on a brave face, telling everyone to have faith, and promising that everything will be great just to keep talent from leaving. Elon completely flips that standard script. He operates with pure urgency by simply telling the unfiltered truth, even when that truth is that the company will go bankrupt and die if they fail. In almost any other corporate environment, that level of blunt, existential dread would cause the talent pool to immediately bleed out. But for the teams working under him, that brutal honesty acts as the ultimate catalyst. It strips away the corporate fluff and forces them to rise to the occasion, leaving them with the undeniable realization that, much like the engineers who built the first iPhone, they just completed the greatest work of their careers.

A factory in Surat, Gujarat was making 400 kg of fake paneer daily, without a drop of milk, using palm oil, powder, and industrial acid. Nearly 3 lakh kg was supplied over 2 years while FSSAI’s “system” kept sleeping.

Said this many times and will say it again: our national capital deserves a world-class stadium. There's no point in building 20 stadiums around the country when top metro cities like Delhi and Bangalore don’t have modern stadiums.

Ridiculous stuff people say to ingratiate themselves. First there was the amalgamation of aviation safety with Hindu mythology, and now this. It only dilutes the fight for the issues that actually matter.