Devilal Sharma

@devilalsharma

CEO @famdo_in | 2x Founder, 2x Father | Tech/AI insights | Sweat daily, live healthy | IIT Madras alum.

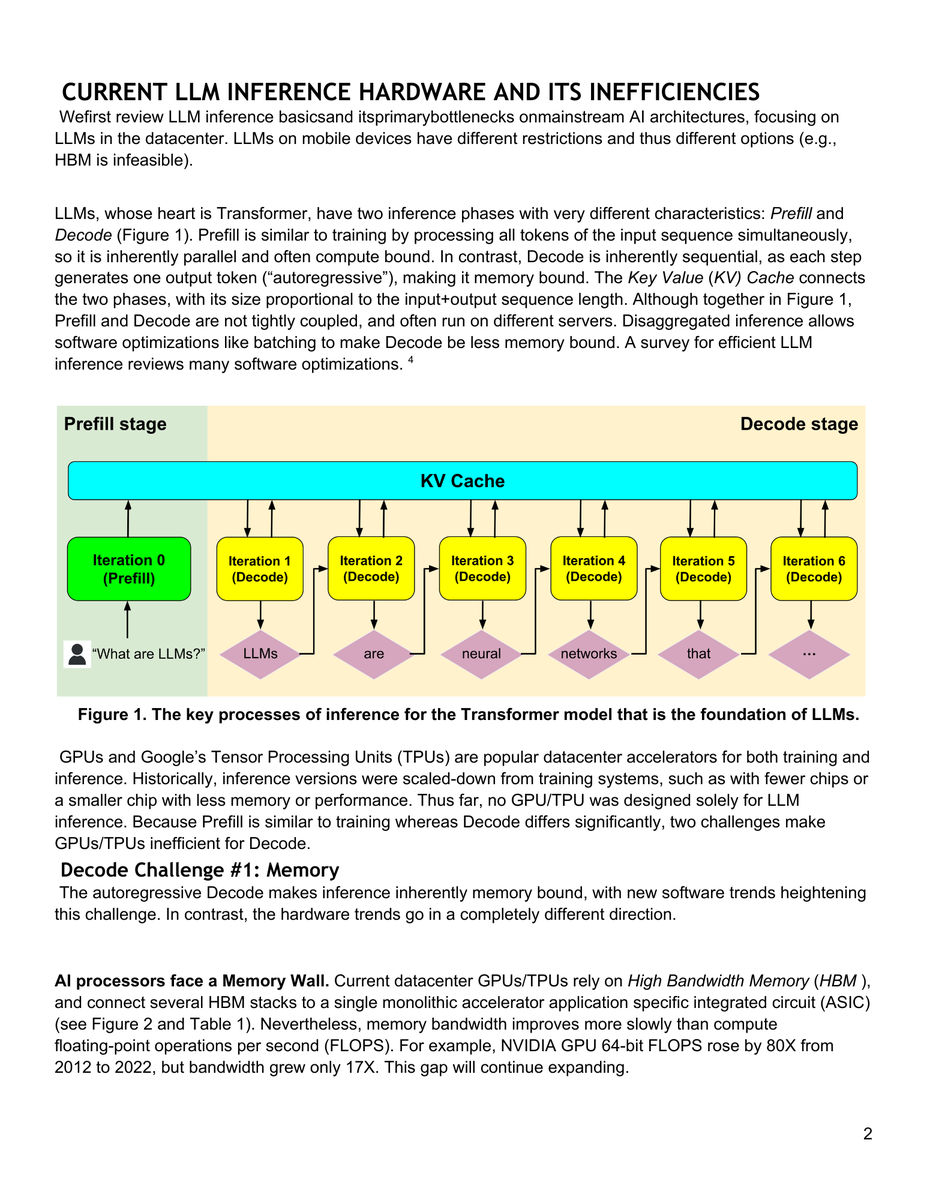

Check out where Systems of Record (SoR) sit in this diagram from @OpenAI Frontier. At least 3, if not 4, layers of context and intelligence sit between them and the end business application. It's one of the clearest representations of how AI companies plan to build next-gen systems of action on top of existing SoR, and why the markets are so worried about the future of software companies. PS: Even the color coding subtly highlights where OpenAI thinks value will accrue. The SoR layer is white and is easy to miss if you don't look closely!

Was fun to be at a dev event in Bengaluru and demo an app I built recently for deep research with multiple models and decision frameworks...think of it as "chain of debate"... Next stop, Copilot!!