announcing @nozomioai's $6.2m fundraise, led by @CRV, @BoxGroup, and @localglobevc. back to work now.

Nozomio Labs

376 posts

@nozomioai

product & research lab. makers of https://t.co/6hz1Zw6tfR (context for ai agents) backed by paul graham, yc, crv, lg, boxgroup, and more. ps: https://t.co/n9W67dONC2

when i was a kid, i used to care about other people’s opinions and trying to fit in. as i turned 17, i realized all of that is bullshit. none of it matters. all you have to do is be delusional enough to take the biggest risks possible. those words motivate the shit out of me.

today, we’re introducing @nozomioai + @LangChain integration. 20+ tools that give any langchain agent reliable context from docs, codebases, research papers, datasets, and other data sources. python and typescript. available now.

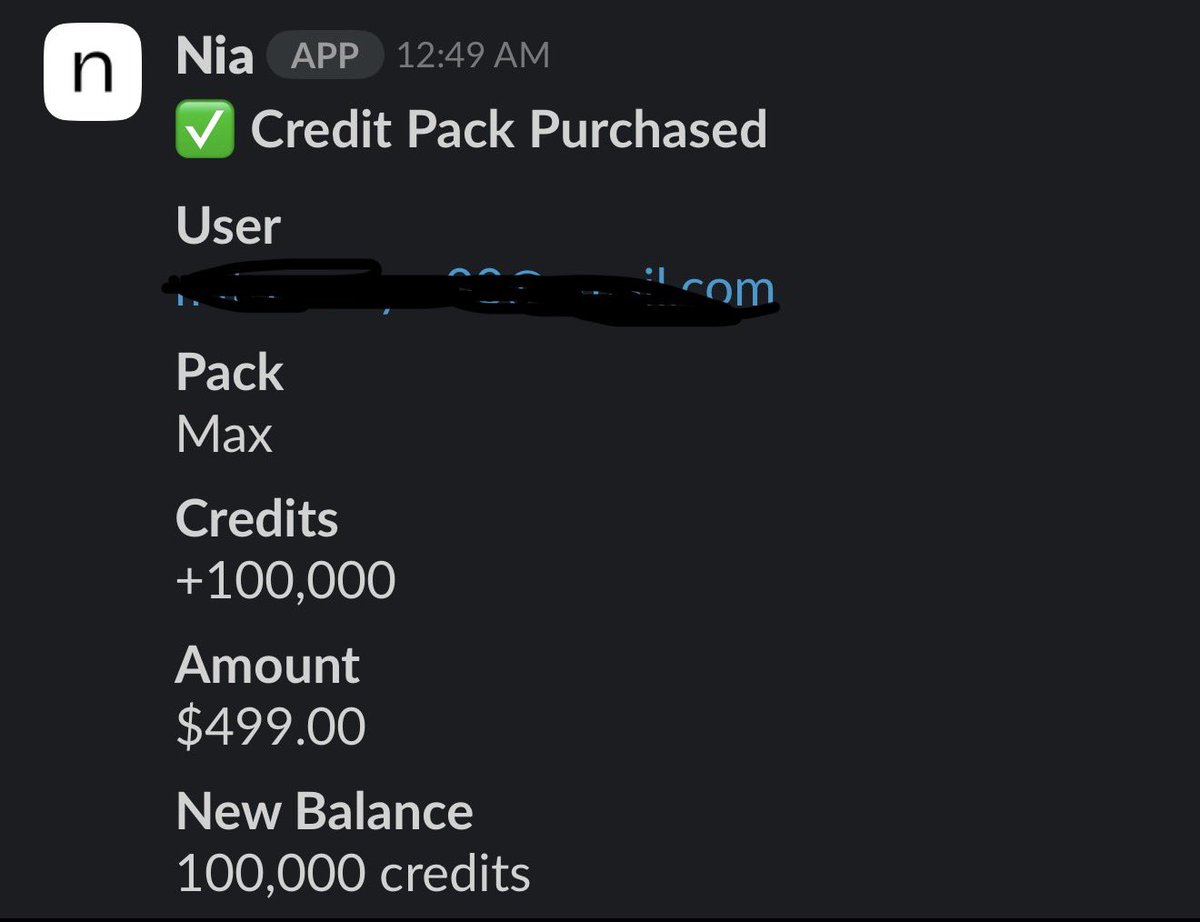

CLI > MCP
every MCP server you connect to your agent loads ALL its tool definitions on EVERY turn.
you're literally burning tokens for nothing: money you're paying that never touches your actual task.
there are a few tools that fix this. one i tried recently is mcp2cli.
it converts your MCP servers to simple commands that the agent calls only when needed.
apparently it saves 96-99% on tokens... definitely worth a try
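a rough back-of-envelope sketch of why loading every tool definition on every turn adds up. every number below is made up for illustration (tool count, tokens per definition, turns, per-call overhead), and none of it is a measurement of mcp2cli or any real MCP server:

```python
# Hypothetical cost model: all inputs are illustrative assumptions, not measurements.

def eager_tokens(num_tools: int, tokens_per_definition: int, turns: int) -> int:
    """Tokens spent when every tool definition is resent on every turn."""
    return num_tools * tokens_per_definition * turns


def on_demand_tokens(calls: int, tokens_per_call: int) -> int:
    """Tokens spent when the agent only pays for the commands it actually runs."""
    return calls * tokens_per_call


# Assumed workload: 40 tools at ~300 tokens each, over a 20-turn session.
eager = eager_tokens(num_tools=40, tokens_per_definition=300, turns=20)

# Assumed on-demand usage: 5 command calls at ~400 tokens of overhead each.
lazy = on_demand_tokens(calls=5, tokens_per_call=400)

savings = 1 - lazy / eager
print(f"eager: {eager} tokens, on-demand: {lazy} tokens, savings: {savings:.1%}")
```

the real savings depend entirely on how many tools you have connected and how often the agent actually calls them, so plug in your own numbers.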

context hub vs @nozomioai (coding use case):
andrew ng just open sourced context hub. it is a curated collection of api documentation that coding agents can fetch via a cli. the idea is solid. agents hallucinate apis, so give them a vetted source of truth. but the architectures are fundamentally different, and that matters for search accuracy.
context hub is a documentation catalog. you run chub search openai and it does bm25 keyword matching over three metadata fields: doc name, tags, and description. it does not search the actual content of the documentation. it searches the titles. if someone tagged it well you find it. if not you do not.
it currently covers around 30 libraries. each one is a hand-written markdown file contributed by maintainers or the community. that curation is valuable, but it means you are limited to what someone has already written and committed.
nia takes the opposite approach. you point it at any documentation url, github repository, research paper, or local folder and it indexes the full content automatically.
the difference shows up in real queries. if you search "how to verify stripe webhooks with raw body" in context hub, it can only match if someone put those words in the doc title or tags. nia has 15+ tools. it can semantically search across indexed content, read specific pages directly, grep for exact patterns in source code, explore file structures, and more. so it finds the answer even if the page is titled something completely different, because it is actually reading the documentation, not just matching against metadata.
i have a lot of respect for what andrew ng is building, and the annotation system where agents leave notes for future sessions is a genuinely smart idea (we also have it btw). but when it comes to search accuracy on documentation, searching titles and searching content are two different problems. we chose to solve the harder one.
the context layer for agents cannot be a static catalog. it has to be dynamic, indexable on demand, and semantically deep. that is what we are building at @nozomioai.
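a toy sketch of the metadata-vs-content difference described above. this is not context hub's or nia's actual code: the catalog entries are invented, and the scoring is plain keyword overlap standing in for bm25 (no semantic search here at all). it only shows why a query can miss when matching is limited to name, tags, and description:

```python
import re

# Hypothetical mini-catalog; names, tags, and text are invented for illustration.
DOCS = [
    {
        "name": "payments-events",
        "tags": ["billing"],
        "description": "event delivery guide",
        "content": "to verify stripe webhooks, pass the raw request body "
                   "and the signature header to the verification helper.",
    },
    {
        "name": "openai",
        "tags": ["llm", "api"],
        "description": "openai api reference",
        "content": "create chat completions by sending a list of messages.",
    },
]


def tokens(text):
    """Lowercase a string and split it into a set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query, text):
    """Count query terms that appear in text (a simple stand-in for bm25)."""
    return len(tokens(query) & tokens(text))


def metadata_text(doc):
    """Only the three metadata fields: name, tags, description."""
    return " ".join([doc["name"], *doc["tags"], doc["description"]])


def content_text(doc):
    """The full body of the document."""
    return doc["content"]


def search(query, field_fn):
    """Return (score, name) pairs for docs with at least one matching term."""
    scored = [(overlap_score(query, field_fn(d)), d["name"]) for d in DOCS]
    return sorted((s for s in scored if s[0] > 0), reverse=True)


query = "how to verify stripe webhooks with raw body"
metadata_hits = search(query, metadata_text)
content_hits = search(query, content_text)
print("metadata only:", metadata_hits)
print("full content:", content_hits)
```

in this toy catalog the metadata-only search comes back empty because the doc is titled something unrelated to the query, while the content search finds it.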

Introducing the Nia CLI: the best way for agents to index and agentically search over technical and general data sources.
- Index: Index papers, docs, codebases, internal wikis, and more.
- Search: Search your indexed sources such as docs, APIs, Slack, and Google Drive.
- Research: Run deep research across entire GitHub repositories or your personal data.
- Save: Save conversation histories and plans. Resume them in another agent without losing the thread.
Get started with a single command in your terminal: bunx nia-wizard@latest

> Be Arlan Rakhmetzhanov
> 18 years old
> Born in Almaty, Kazakhstan 🇰🇿
> Take your first coding class at 15
> Build an app that helps high school kids find money for college
> 20,000 kids use it
> Make a few thousand dollars
> Build the whole thing just to get into Stanford
> Get rejected
> Apply to every startup program in the world
> Get rejected
> Get rejected
> Get rejected
> Get into one in Europe
> Pack up and move to London
> Quit school
> Apply to YC with one line: "Hi YC. I'm Arlan, an 18-year-old who dropped out of junior year of high school."
> Get a WhatsApp call at 1am
> Scream on the call
> Call your dad
> Fly to San Francisco
> Become the youngest founder in the whole YC batch
> Spot Paul Graham (@paulg) walking out of a session
> Run across the parking lot to catch him
> Yell: "PG please just give me 3 minutes"
> He says just email me
> Pull out your phone right there in the parking lot
> Email him while he is still walking to his car
> Subject line: "The kid who stopped you at the end"
> Get a reply
> Get the meeting
> PG puts in money
> Raise $850K to build your company
> Build @nozomioai ("hope" in Japanese)
> Make a tool that helps coders write better code faster
> Saves developers 2 to 5 hours every single week
> Get an O1 visa and he's still 18..
@arlanr is absolutely cracked.