🏆 @neutrl Audit Contest Results 🏆 Congrats to: $118,000 rewards ➡️ $16.4M+ paid out in rewards.

4nescient

🚨🤯Someone built an AI tool that one-shots the threat model & invariants of your Solidity codebase. Companies used to charge >$20k for this. It's called X-ray, free and fully open-source. My security team will be using this. Check it out below👇 github.com/pashov/skills/…

🚨Solidity Devs: this FREE AI security tool's been used by 1000+ people and has found tens of Critical/High vulns in real codebases. solidity-auditor v2 is OUT - now with 7 specialized sub-agents on top of v1. Free. Open Source. 1min install. Pls share if you find it valuable🫡

Reading about others' findings was really inspiring. The coolest report was submitted by @4nescient. They figured out how to farm staking rewards out of thin air by exploiting integer division truncation in Rust. Link to the original report: github.com/Frankcastleaud…
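For anyone unfamiliar with the bug class mentioned above, here is a minimal sketch of integer division truncation in Rust. It is not the mechanism from the linked report; the pool model and the function names `shares_to_burn_floor` and `shares_to_burn_ceil` are hypothetical, chosen only to show how rounding down in the user's favor can hand out value for free.

```rust
/// Hypothetical pool accounting: how many shares a user must burn
/// to withdraw `amount` tokens. Integer division on u64 truncates
/// toward zero, so a small enough withdrawal burns 0 shares.
/// (Overflow of the multiplication is ignored in this sketch.)
fn shares_to_burn_floor(amount: u64, total_shares: u64, total_assets: u64) -> u64 {
    amount * total_shares / total_assets
}

/// Same calculation, rounded up, so the rounding error is paid by
/// the user instead of the pool. Assumes `total_assets > 0`.
fn shares_to_burn_ceil(amount: u64, total_shares: u64, total_assets: u64) -> u64 {
    (amount * total_shares + total_assets - 1) / total_assets
}
```

With 1,000 shares backing 3,000 tokens, withdrawing 2 tokens costs `2 * 1000 / 3000 = 0` shares under the truncating version and 1 share under the rounded-up version. Repeating the zero-cost withdrawal is the general shape of a "value out of thin air" bug.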

The $40,000 SukukFi Audit Competition results are officially in! 🏆 Huge congrats to everyone who submitted valid findings! And a special shoutout to @Audittens for earning a spot on the Top 10 leaderboard for the last 90 days. Shoutout to @sukukfi for their commitment to security. Full list of winners in thread!👇

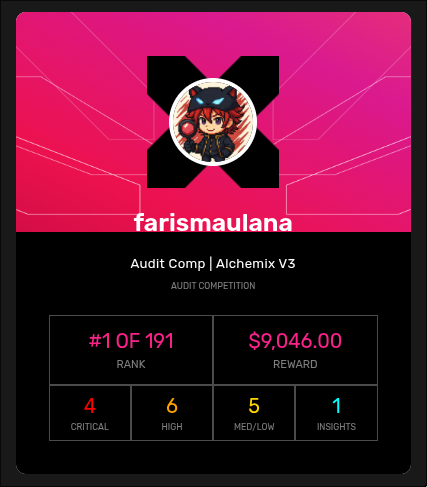

The $100,000 USD @AlchemixFi V3 Audit Competition is finished, and the full results have been posted. 100% of the pool has been paid out! 🥇@0xfrsmln: $12,446 🥈 @ZeroK_____: $8,714 🥉 @niroh30: $7,780 4⃣ @magtentic: $6,997 5⃣ @PaludoX0: $6,748 Check the link below for the full leaderboard and bug reports! 📷👇

Thrilled to see the results from my very first contest ✅ I submitted just one finding… it got validated… and ended up being the only valid bug in the entire contest🤯 Guess that means 100% coverage on my first attempt 😅 Beginner’s luck? Sure, but I’ll take it 😏 Big thanks to @sherlockdefi for the fun experience. On to the next hunt 🕵️‍♂️