BigCode

@BigCodeProject

Open and responsible research and development of large language models for code. #BigCodeProject run by @huggingface + @ServiceNowRSRCH

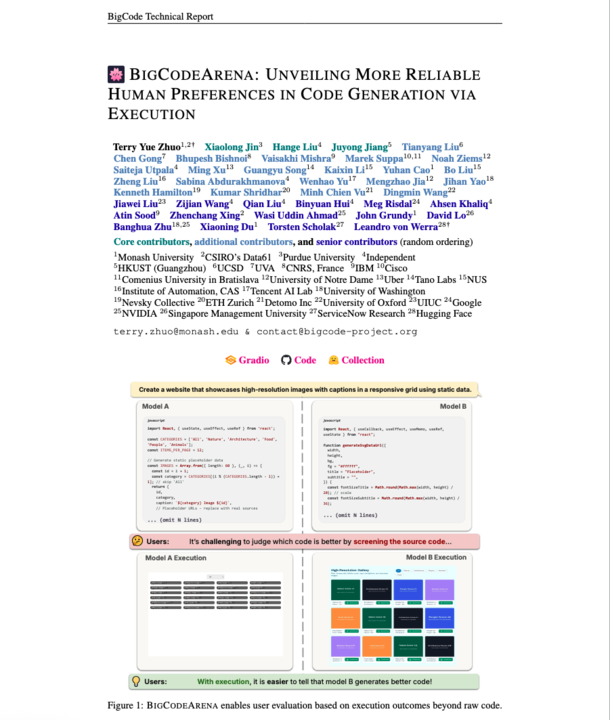

Introducing BigCodeArena, a human-in-the-loop platform for evaluating code through execution. Unlike current open evaluation platforms that collect human preferences on text, it enables interaction with runnable code to assess functionality and quality across any language.

LLMs are great at programming tasks... for Python and other very popular PLs. But they are often unimpressive at artisanal PLs, like OCaml or Racket. We've come up with a way to significantly boost LLM performance on low-resource languages. If you care about them, read on!

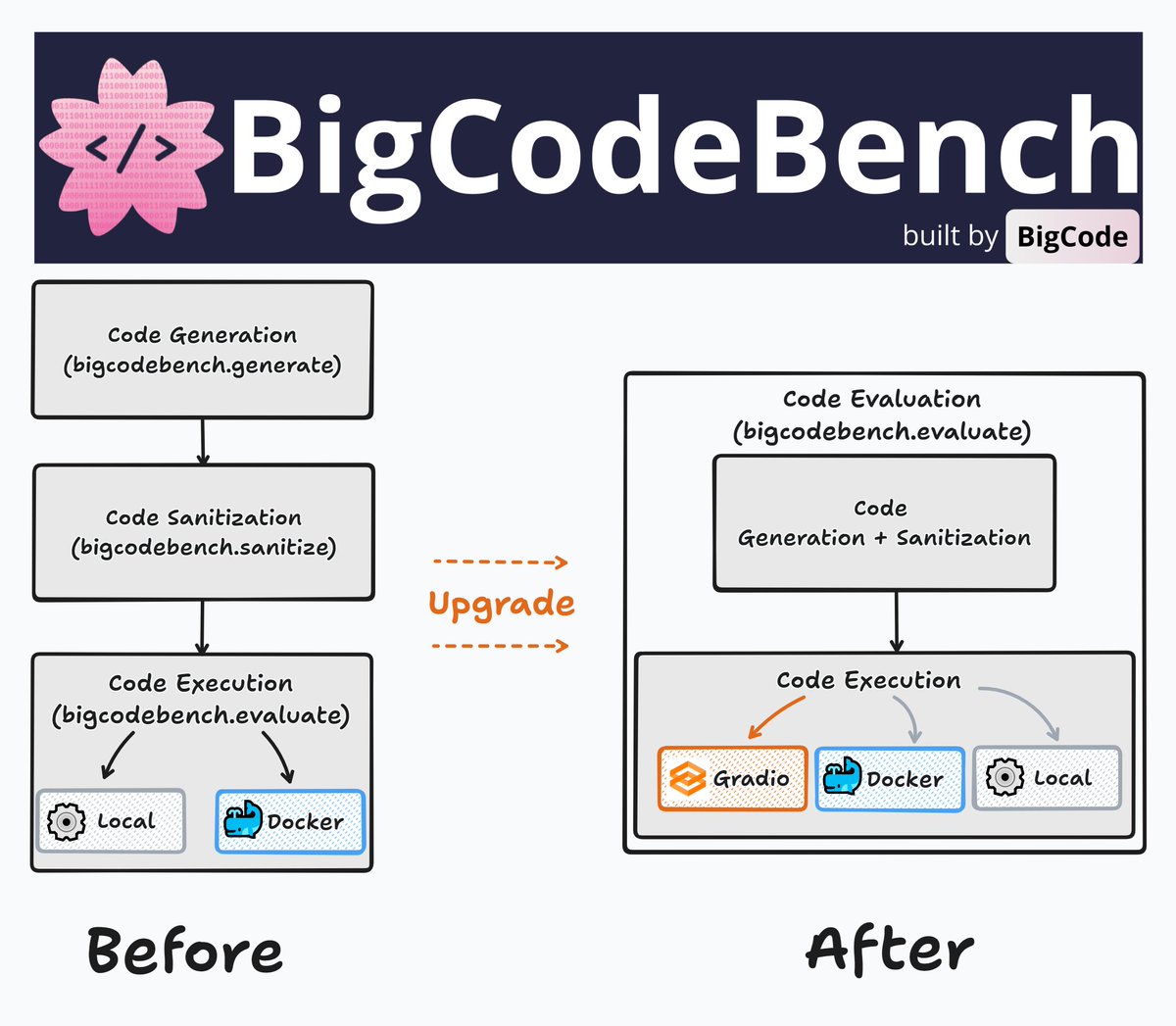

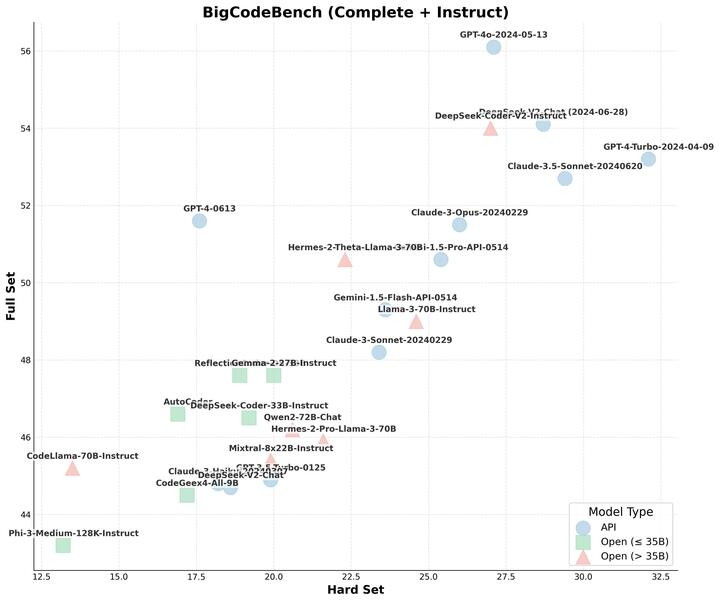

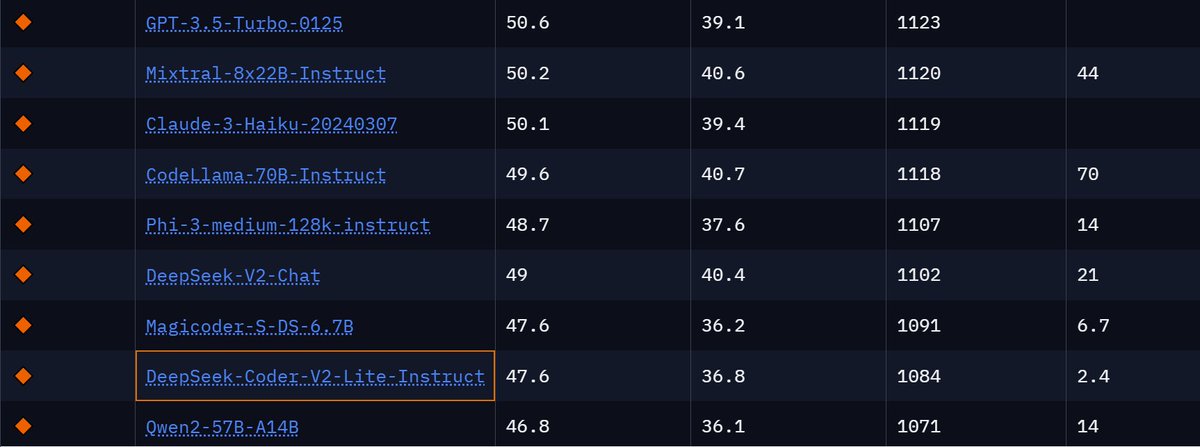

In the past few months, we’ve seen SOTA LLMs saturating basic coding benchmarks with short and simplified coding tasks. It's time to enter the next stage of coding challenges under comprehensive and realistic scenarios! Here comes BigCodeBench, benchmarking LLMs on solving practical and challenging programming tasks! So, can LLMs solve these tasks? Not yet! 🏆 Pass@1: Humans ace 97%, GPT-4o only hits 50-60%, but DeepSeek-Coder-V2 is tight on its heels! Check out our leaderboard, data, code, and paper: bigcode-bench.github.io 1/🧵

Introducing 🌸BigCodeBench: Benchmarking Large Language Models on Solving Practical and Challenging Programming Tasks! BigCodeBench goes beyond simple evals like HumanEval and MBPP and tests LLMs on more realistic and challenging coding tasks.