Alex Prompter (@alex_prompter)

Holy shit… Your anonymous internet identity can now be unmasked for $1 😳

Not by the FBI. By anyone with access to Claude or ChatGPT and a few of your Reddit comments.

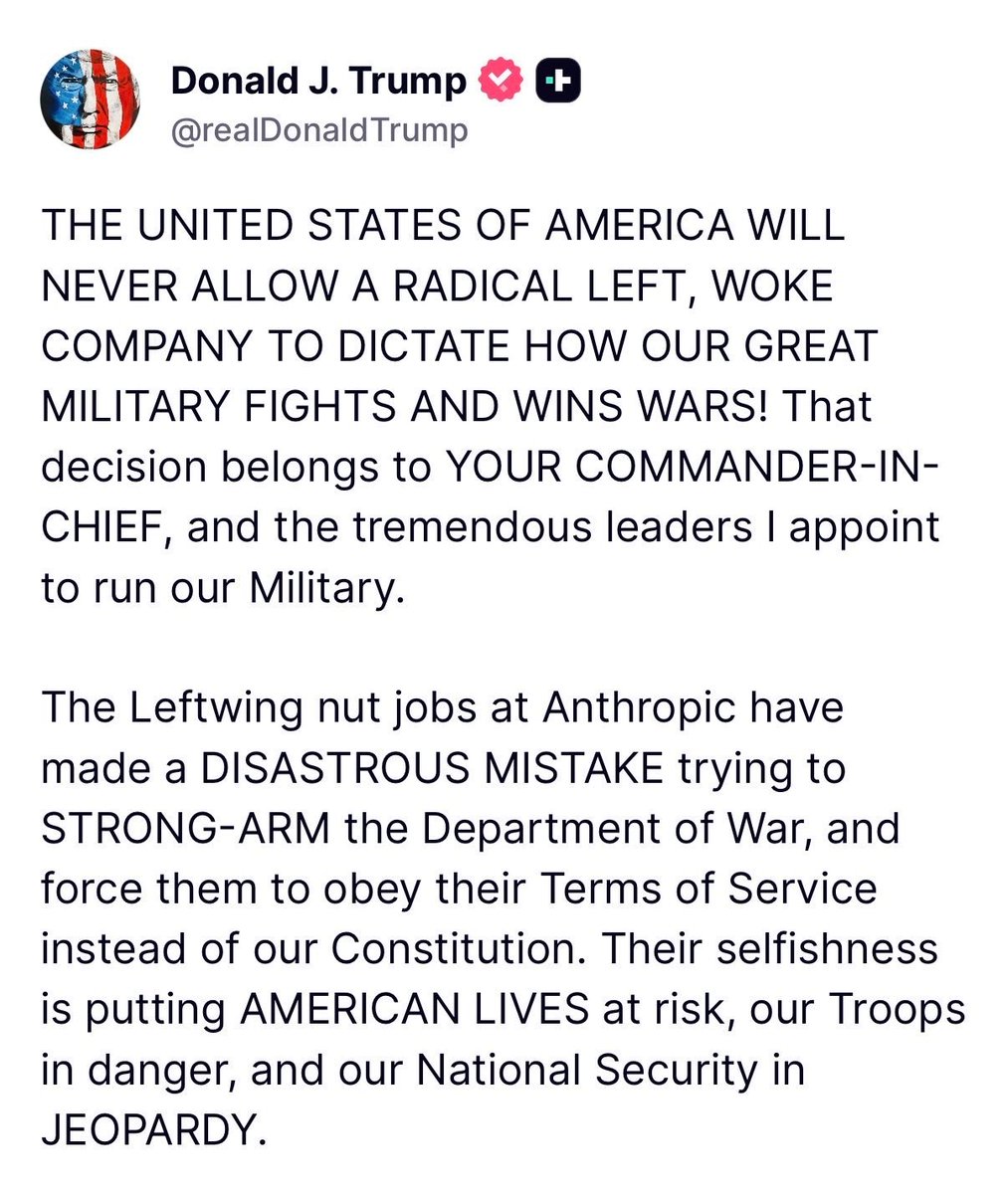

ETH Zurich and Anthropic just dropped a paper called “Large-Scale Online Deanonymization with LLMs” and the results are the most alarming privacy research I’ve read this year.

They built an automated pipeline that takes your anonymous posts, extracts identity signals, searches the web, and figures out who you are.

No human investigator needed. Fully autonomous. Works on Hacker News, Reddit, LinkedIn, even redacted interview transcripts.

Here’s how bad the numbers are.

On Hacker News users: 67% identified correctly.

When the system made a guess, it was right 90% of the time.

On Reddit academics posting under pseudonyms: 52%.

On scientists whose interview transcripts were explicitly redacted for privacy: 9 out of 33 still got unmasked.

The pipeline works in four steps they call ESRC:

Extract identity signals from your posts using LLMs.

Search for candidate matches using embeddings across thousands of profiles.

Reason over top candidates with models like GPT-5.2.

Calibrate confidence so when it does guess, it’s almost never wrong.
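To make the four ESRC steps concrete, here is a minimal toy sketch in Python. It is not the paper's code: a bag-of-words counter stands in for the LLM extraction, cosine similarity stands in for the embedding search, and a simple score-and-margin rule stands in for the reasoning and calibration steps. Every function name, threshold, and profile below is hypothetical.

```python
import math
from collections import Counter

def extract(posts):
    """Extract stand-in: bag-of-words features (the paper uses an LLM here)."""
    return Counter(" ".join(posts).lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def search(query, candidates, top_k=3):
    """Search: rank candidate profiles by similarity to the query features."""
    scored = [(cosine(query, extract(posts)), name)
              for name, posts in candidates.items()]
    return sorted(scored, reverse=True)[:top_k]

def reason_and_calibrate(ranked, min_score=0.4, min_margin=0.1):
    """Reason + Calibrate stand-in: name the top candidate only if it is
    strong and clearly ahead of the runner-up; otherwise abstain (None)."""
    if not ranked:
        return None
    best_score, best_name = ranked[0]
    runner_up = ranked[1][0] if len(ranked) > 1 else 0.0
    if best_score >= min_score and best_score - runner_up >= min_margin:
        return best_name
    return None

# Hypothetical toy profiles, invented for illustration only.
candidates = {
    "profile_a": ["rust compiler engineer in zurich",
                  "i maintain a rust crate for parsing"],
    "profile_b": ["marathon training and sourdough baking",
                  "baking bread every weekend"],
}
anon_posts = ["my rust crate for parsing got a new release",
              "zurich weather is great today"]

guess = reason_and_calibrate(search(extract(anon_posts), candidates))
no_guess = reason_and_calibrate(search(extract(["nice weather today"]), candidates))
```

The abstain branch is the important part: refusing to guess on weak matches is what trades recall for precision.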

The classical deanonymization method from the famous Netflix Prize attack? Nearly 0% recall across every test.

LLMs didn’t just improve on old techniques. They made old techniques look like toys.

When they scaled to temporally split Reddit profiles, matching a user’s old posts to their newer ones across a full year gap, the pipeline hit 67% recall at 90% precision and 38% recall at 99% precision.

Meaning even a year of changed interests and different conversations wasn’t enough to hide.
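If “recall at 90% precision” sounds abstract: precision is how often the system is right when it guesses, recall is the share of all targets it correctly identifies. Here is a small sketch of how such a number can be computed from confidence-scored guesses. The function name and the toy predictions are made up for illustration and are not data from the paper; the sketch also assumes confidences are distinct.

```python
def recall_at_precision(predictions, target_precision):
    """predictions: list of (confidence, is_correct), one entry per target user.
    Returns the best recall over all confidence cutoffs whose precision
    (correct guesses / guesses made) meets the target."""
    ranked = sorted(predictions, key=lambda p: p[0], reverse=True)
    total = len(ranked)
    best_recall = 0.0
    correct = 0
    # Lower the confidence cutoff one guess at a time.
    for made, (_, is_correct) in enumerate(ranked, start=1):
        correct += int(is_correct)
        if correct / made >= target_precision:
            best_recall = max(best_recall, correct / total)
    return best_recall

# Hypothetical toy predictions: (confidence, was the guess correct?)
toy = [(0.99, True), (0.97, True), (0.95, True), (0.93, True), (0.90, False),
       (0.85, True), (0.80, True), (0.70, False), (0.60, False), (0.50, False)]

r90 = recall_at_precision(toy, 0.90)
r80 = recall_at_precision(toy, 0.80)
```

On this toy data, demanding higher precision forces the system to abstain more often, so recall drops. That is the same tradeoff behind the 90% vs 99% precision numbers.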

More reasoning compute = better deanonymization. High reasoning effort doubled recall at 99% precision in some tests. As frontier models get smarter, this attack strengthens automatically. Every model upgrade is a privacy downgrade.

What makes it nearly impossible to defend against: the pipeline splits into subtasks that all look benign. Summarize a profile. Compute embeddings. Rank candidates. No single API call screams “deanonymization.”

The researchers themselves say they’re pessimistic that safety guardrails or rate limits can stop it.

Their conclusion is blunt: “Users who post under persistent usernames should assume that adversaries can link their accounts to real identities.”

And the results extrapolate: log-linear projections suggest roughly 35% recall at 90% precision even at one million candidates.
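For anyone wondering what a “log-linear projection” means here: you assume recall falls by a roughly constant amount each time the candidate pool grows 10x, fit a line against log10 of the pool size, and extend it. A minimal sketch follows, assuming that functional form. The data points are placeholders I made up, not measurements from the paper, and the function names are hypothetical.

```python
import math

def fit_log_linear(points):
    """Least-squares fit of recall = intercept + slope * log10(pool_size)."""
    xs = [math.log10(n) for n, _ in points]
    ys = [r for _, r in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return intercept, slope

def project_recall(points, pool_size):
    """Extrapolate the fitted line to a larger pool, clamped to [0, 1]."""
    intercept, slope = fit_log_linear(points)
    return min(1.0, max(0.0, intercept + slope * math.log10(pool_size)))

# Placeholder (pool_size, recall) pairs, invented for illustration only.
toy_points = [(100, 0.8), (1_000, 0.7), (10_000, 0.6)]

projected = project_recall(toy_points, 1_000_000)
```

Extrapolating two orders of magnitude past the data is a strong assumption, which is why the paper's figure is a projection and not a measurement.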

Every throwaway account. Every anonymous forum post. Every “nobody will connect this to me” comment.

It’s all searchable micro-data now. And the cost to run the full agent on one target is less than a cup of coffee.

Practical anonymity on the internet just died. The paper killed it with math.