Naoto Nakai

71.2K posts

Naoto Nakai retweeted

🚀 Day-0 MTP support for Gemma 4 is now available in vLLM, with a ready-to-use Docker image!

⚡️ Enjoy up to 3x faster decoding with zero quality degradation to supercharge your development!

Check out the full vLLM recipes for the Gemma 4 model series 👇

recipes.vllm.ai/Google/gemma-4…

Google for Developers @googledevs

Gemma 4: Now up to 3x Faster. ⚡ Same quality, way more speed. Our new MTP drafters allow Gemma 4 to predict multiple tokens at once, effectively tripling your output speed without compromising intelligence.

Naoto Nakai retweeted

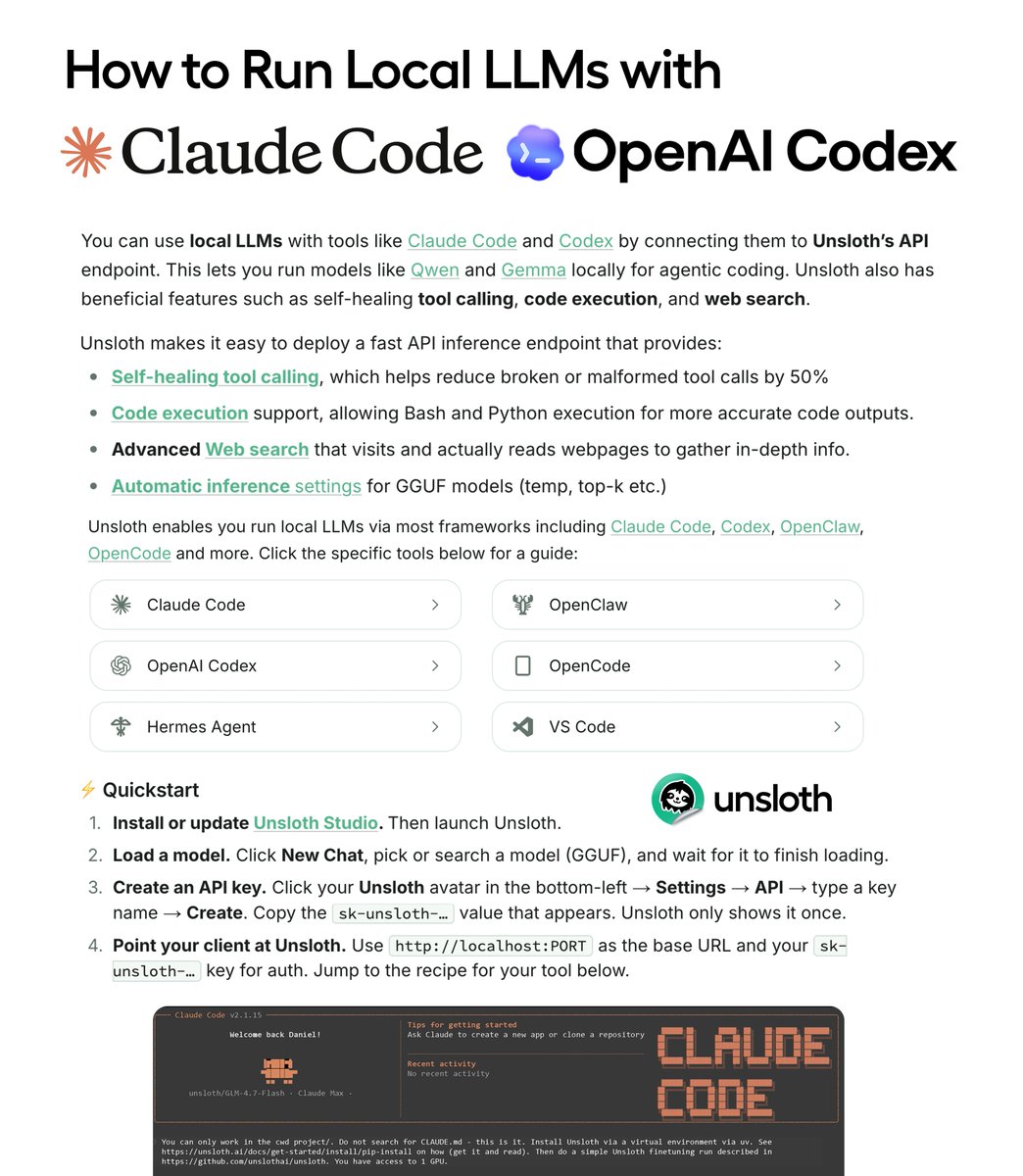

We made a guide on how to run open LLMs in Claude Code, Codex and OpenClaw.

Use Gemma 4 and Qwen3.6 GGUFs for local agentic coding on 24GB RAM.

Run with self-healing tool calls, code execution, and web search via the Unsloth API endpoint and llama.cpp.

Guide: unsloth.ai/docs/basics/api
