RPX


DEFAULT and FREE

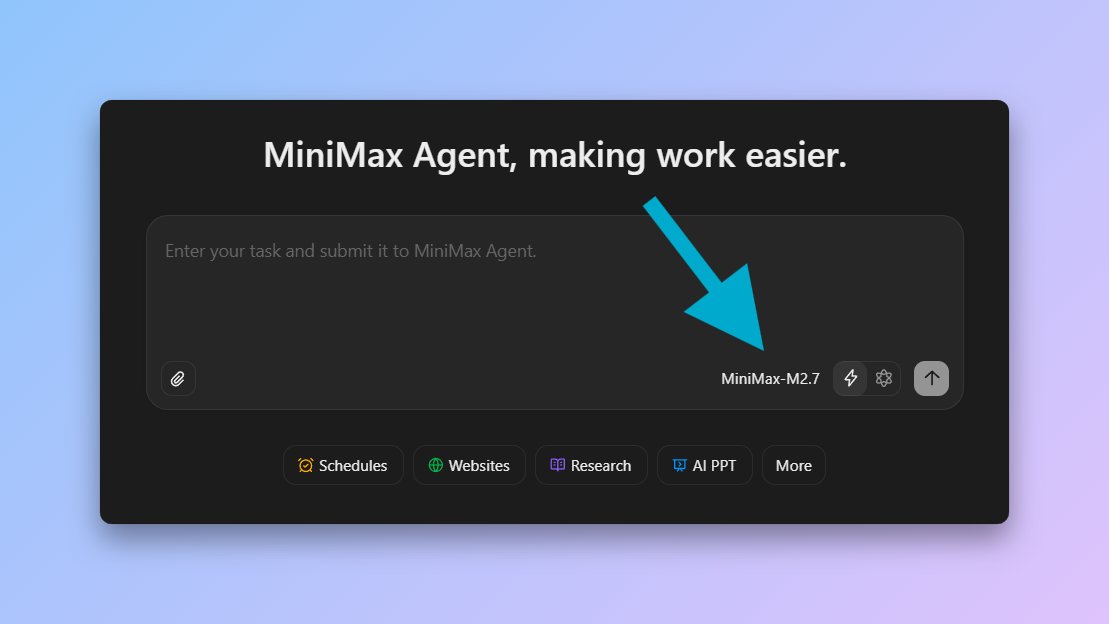

M2.7 on @zocomputer

Zo Computer@zocomputer

We just made MiniMax M2.7 the default model on Zo, and we made it FREE. The future of AI includes personalized intelligence that will flow cheaply from your own infrastructure. To give everyone a taste of this future, we’re keeping all the latest open-source models on Zo free for a limited time.


GPT 5.4 Mini completes BridgeBench tasks in 3.4 seconds.

GPT 5.4 takes 704.4 seconds.

207x faster.

100% completion rate.

Overall score 94.8 vs 95.5.

0.7 points of intelligence lost for a 207x speed increase.

Ranked #6 overall.

Ahead of GPT 5.3 Codex.

Ahead of Grok 4.20 Beta.

OpenAI got the mini model right this time.

bridgemind.ai/bridgebench
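A quick sanity check of the arithmetic in the post above, as a minimal sketch. The post does not say which score belongs to which model; assigning 94.8 to GPT 5.4 Mini and 95.5 to GPT 5.4 is an assumption, and the variable names are illustrative only.

```python
# Figures quoted in the post; the score-to-model mapping is an assumption.
mini_seconds = 3.4    # GPT 5.4 Mini completion time
full_seconds = 704.4  # GPT 5.4 completion time
mini_score = 94.8     # assumed: GPT 5.4 Mini overall score
full_score = 95.5     # assumed: GPT 5.4 overall score

# Speedup is the ratio of the two completion times.
speedup = full_seconds / mini_seconds

# Score gap, rounded to one decimal to avoid floating-point noise.
score_gap = round(full_score - mini_score, 1)

print(f"speedup: {speedup:.0f}x")
print(f"score gap: {score_gap} points")
```

Under these assumptions the quoted 207x and 0.7-point figures are consistent with the raw numbers.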


@RpxDeveloper I mean, if you're using Claude Code it's not more expensive to do so, you just get a dumber model past 200k context.

But yeah, I don't see a use for anything above 400k


Some things I learned this week:

1. GPT-5.4/Codex at more than 256k max tokens doesn't help and is too expensive (that's why I ran out of usage btw).

The models still don't do great past 200k context and I can get basically infinite context using subagents anyway.

2. To be fair to OpenAI, I did spam the hell out of it across 3 devices, working in 4-10 sessions 24/7, especially with Autoresearch.

This is very generous, and I love the app a lot.

0xSero@0xSero

Fellas, I thought I could make it but I can't. Adding credits, Slopus too buggy, Gemini too philosophical, OSS AI not good enough at research. It's over for me.


RPX reposted

@thsottiaux Sometimes Codex replies after 10 minutes or so with an answer "out of nowhere" about things we talked about earlier...


@thsottiaux Switching to a model with a smaller context window doesn't fit the existing conversation and needs a solution.


@thsottiaux The text output above the prompt sometimes changes and gets denser.


@thsottiaux Looking forward to when you add a remote control feature: ChatGPT -> Codex App on a local machine acting as a server. I thought that was the main reason you released the Codex app and expected it to come shortly after, but it seems like I was wrong =/


Working at OpenAI is fun because questioning everything and taking risks is part of the culture. Within Codex, the team asks itself how we could make it an order of magnitude better every few months and then sets most things aside to go and do it across the entire stack. Some examples were the Codex App and our first deployment of Cerebras inference with WebSockets. We are now well under way on the next bet and it’s making even our best engineers nervous as it’s at the edge of what’s possible today.
