Pinned Tweet

Rush

242 posts

Rush retweeted

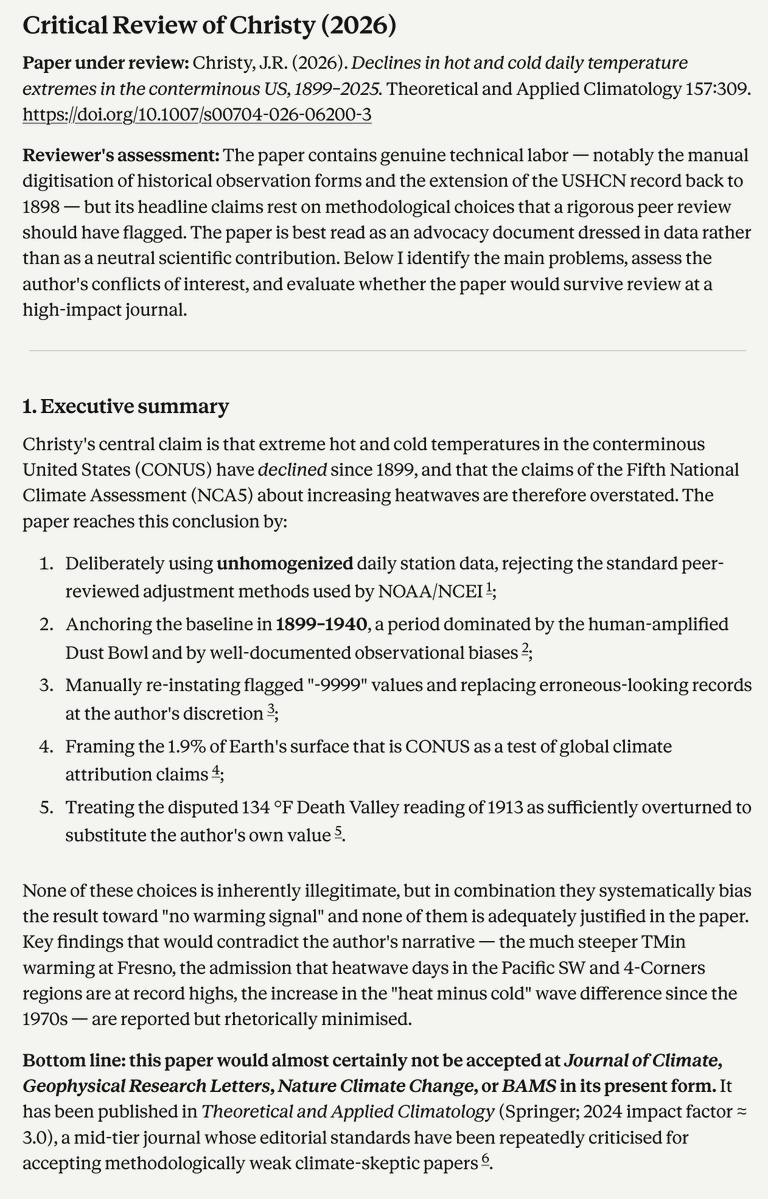

Claude vs. Christy

claude.ai/public/artifac…

Dr. Matthew M. Wielicki@MatthewWielicki

New peer-reviewed research just dropped… and it’s awkward. A study released today using actual U.S. station data (1899–2025) finds no significant trend in summer daytime highs and even declines in some temperature extremes. Let that sink in. Because the Fifth National Climate Assessment confidently told us extreme heat is accelerating. The data, however, begs to differ. link.springer.com/article/10.100…

Rush retweeted

@MForgeng By the way, this was the scientific rebuttal to Lindzen's flawed calculation.

agupubs.onlinelibrary.wiley.com/doi/10.1029/20…

Here is a summary in German:

claude.ai/public/artifac…

Rush retweeted

@SConwaySmith @LeeHarris I’ve known about the greenhouse effect since 1970 when doing O level physics. But here’s a climate scientist explaining it for you.

Rush@RushHourPhD

The Greenhouse Effect youtu.be/slPMD5i5Phg

Rush retweeted

Re climate change, many here repeat all the old climate myths… Warming caused by urban heat island effect. Global confused with Greenland temperatures. Or tell me (paleoclimate expert) that climate always changed.

Hey, we know it all, you find all here: skepticalscience.com

Rush retweeted

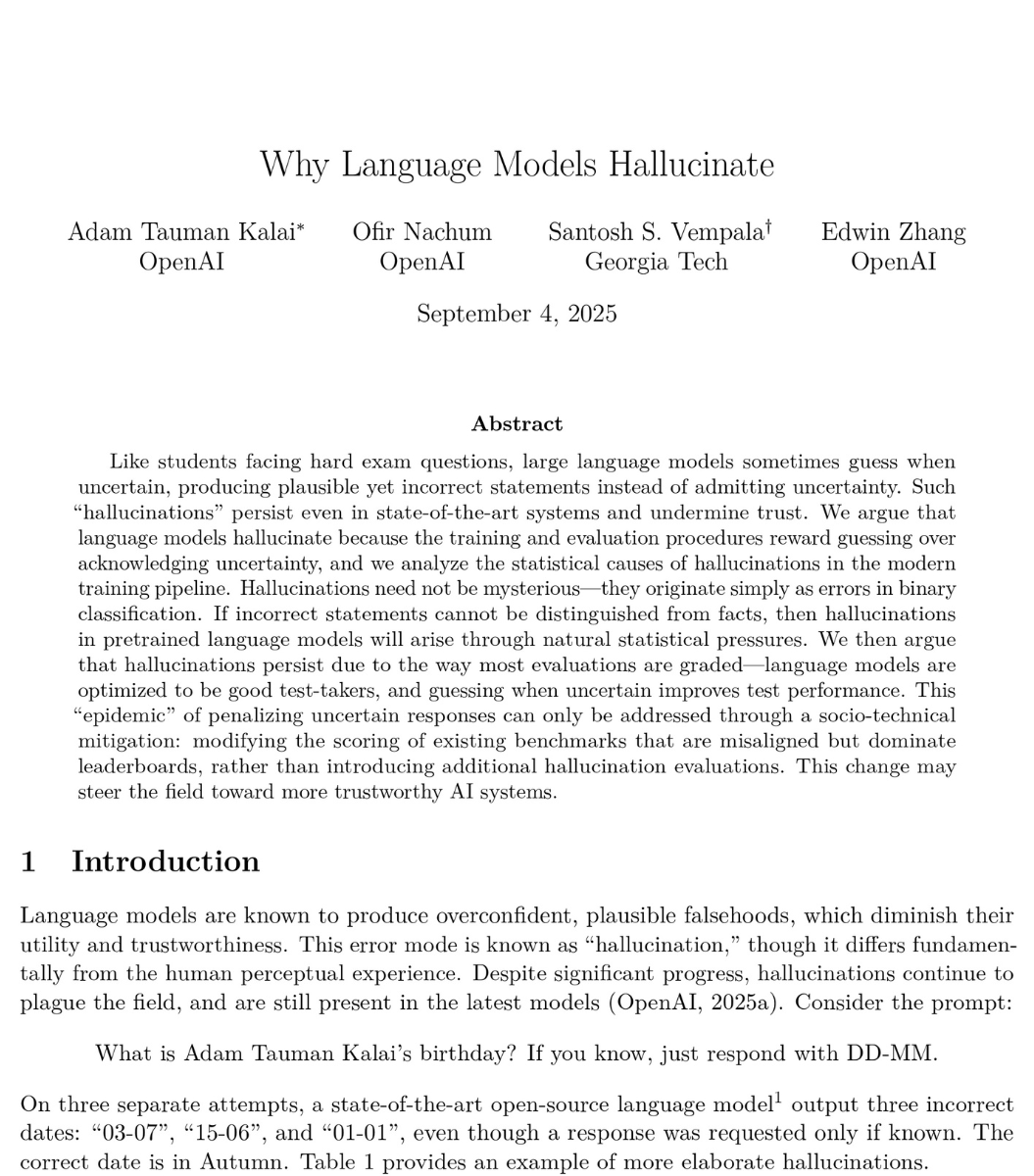

🚨BREAKING: OpenAI published a paper proving that ChatGPT will always make things up.

Not sometimes. Not until the next update. Always. They proved it with math.

Even with perfect training data and unlimited computing power, AI models will still confidently tell you things that are completely false. This isn't a bug they're working on. It's baked into how these systems work at a fundamental level.

And their own numbers are brutal. OpenAI's o1 reasoning model hallucinates 16% of the time. Their newer o3 model? 33%. Their newest o4-mini? 48%. Nearly half of what their most recent model tells you could be fabricated. The "smarter" models are actually getting worse at telling the truth.

Here's why it can't be fixed. Language models work by predicting the next word based on probability. When they hit something uncertain, they don't pause. They don't flag it. They guess. And they guess with complete confidence, because that's exactly what they were trained to do.

The researchers looked at the 10 biggest AI benchmarks used to measure how good these models are. 9 out of 10 give the same score for saying "I don't know" as for giving a completely wrong answer: zero points. The entire testing system literally punishes honesty and rewards guessing.

So the AI learned the optimal strategy: always guess. Never admit uncertainty. Sound confident even when you're making it up.

OpenAI's proposed fix? Have ChatGPT say "I don't know" when it's unsure. Their own math shows this would mean roughly 30% of your questions get no answer. Imagine asking ChatGPT something three times out of ten and getting "I'm not confident enough to respond." Users would leave overnight. So the fix exists, but it would kill the product.

This isn't just OpenAI's problem. DeepMind and Tsinghua University independently reached the same conclusion. Three of the world's top AI labs, working separately, all agree: this is permanent.

Every time ChatGPT gives you an answer, ask yourself: is this real, or is it just a confident guess?

Rush retweeted

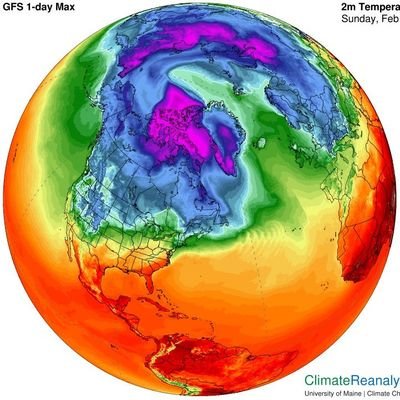

Earth is now warming at a rate of around 0.35 °C per decade, fresh analysis finds.

go.nature.com/4aV6Gu8

Rush retweeted

New study published in Nature: "Rising atmospheric CO2 reduces nitrogen availability in boreal forests"

nature.com/articles/s4158…

Rush retweeted

As a bit of background, I've been working with these tools since late 2022, and seen firsthand how they have dramatically improved over time. I’ve also worked with frontier AI labs to evaluate how well LLMs answer climate questions, and to help enable AI tools to support scientific collaboration.

theclimatebrink.com/p/the-ai-augme…

Rush retweeted

Daunting but doable: Europe urged to prepare for 3C of global warming. theguardian.com/environment/20…

Rush retweeted

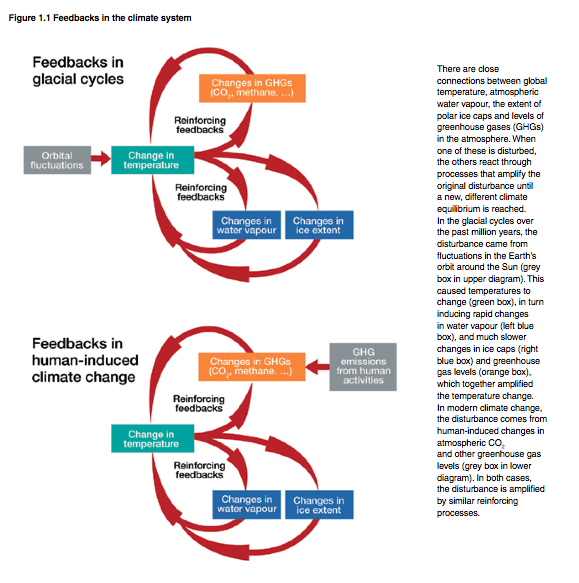

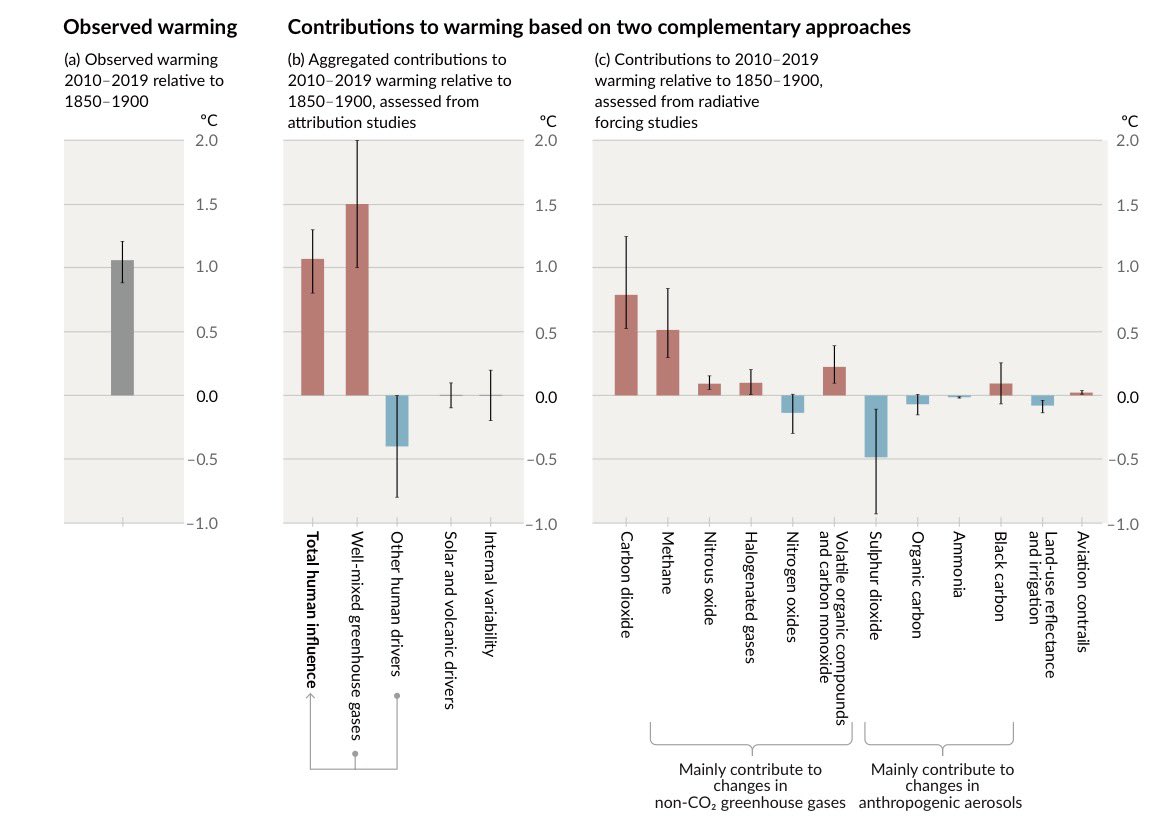

@atrembath @RogerPielkeJr That’s nonsense, as water vapor is a feedback in the climate system rather than a forcing (with the exception of stratospheric water vapor from CH4 oxidation, which is generally accounted for as part of CH4 indirect forcing).

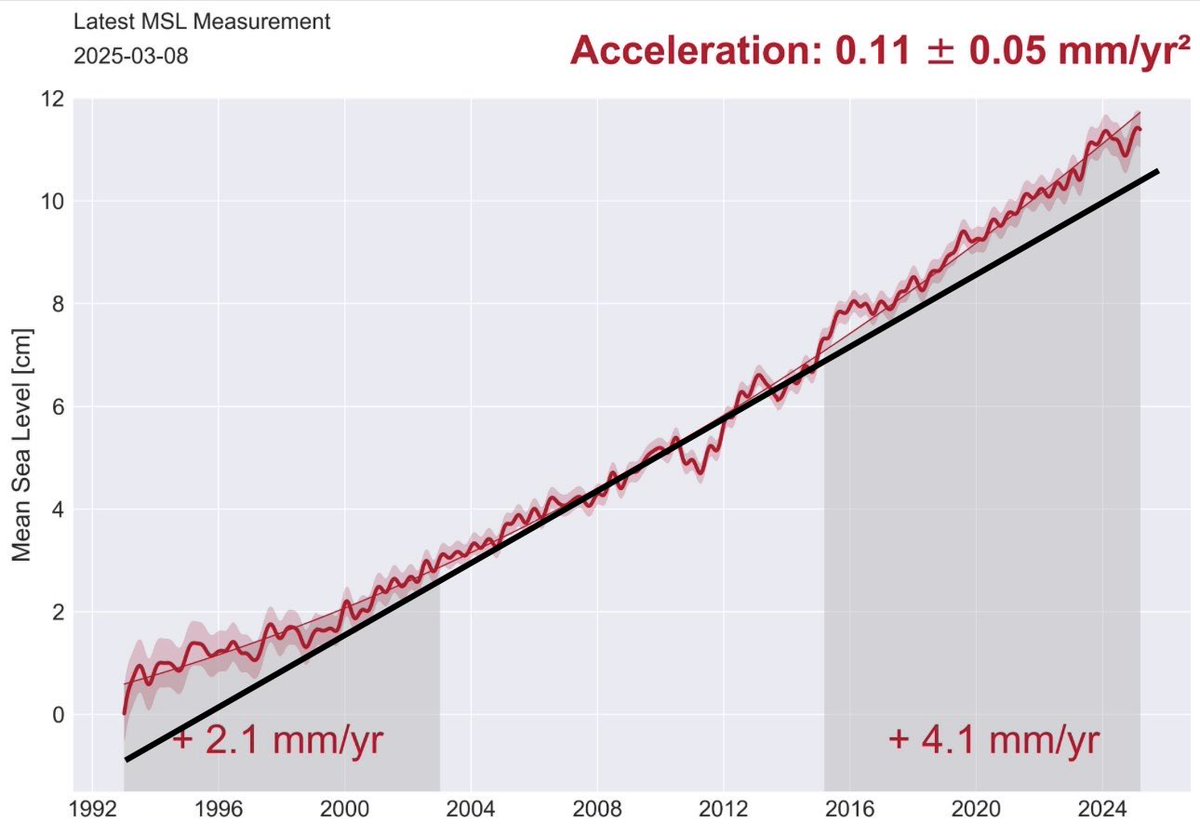

Well, the science is in. Despite decades of hype about "accelerating sea level rise will drown us all", it turns out the sea level rise isn't accelerating at all.

w.

wattsupwiththat.com/2026/02/16/wuw…

Rush retweeted

showed this article to my students and one of them described it as 'literally sickening'

Rush@RushHourPhD

Impressive! Assessing ExxonMobil’s global warming projections | Science science.org/doi/10.1126/sc…