Nathan

882 posts

@Submorf

Professional Builder

Probably Somewhere Vibe Coding

Joined February 2018

1K Following, 162 Followers

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

Nathan retweeted

@adcock_brett @richhomiecon Not a robotics expert or anything but I feel like all these “human-like” movements are so inefficient and clunky. Shouldn’t the bot just optimize the task for efficiency and precision rather than making it look like a human movement?

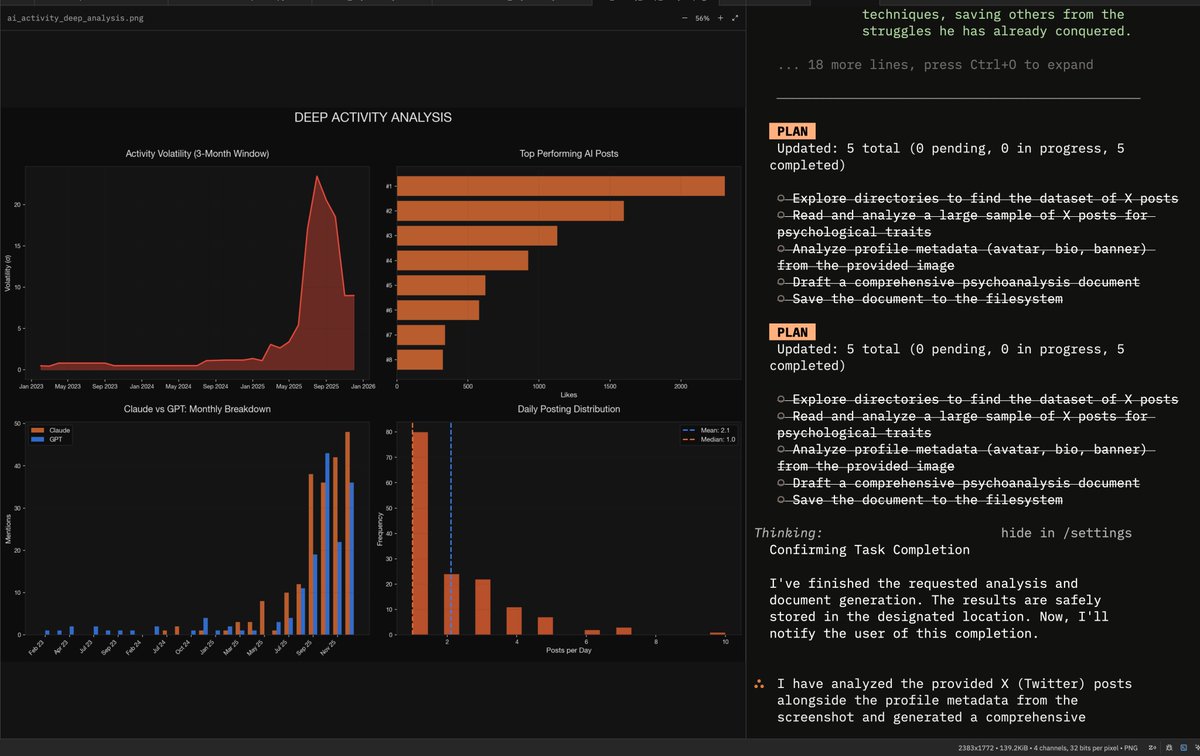

Why do I recommend Droid?

Look at the way it breaks down its work. This is why Droid does better, IMO.

I have never seen it NOT use a plan, NOT check off the tasks, not run validation criteria.

Even lower quality models do well in it because it forces them to just do what is told, in the right order, without over-complicating it.

Yesterday I was seeing Claude, GPT, etc. all make checklists, leave half of them unchecked, compact, and go on their own merry way.
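For anyone wondering what "plan, check off, validate" looks like in practice, here is a minimal sketch. It is not Droid's actual implementation; `Task`, `run_plan`, and `render` are hypothetical names made up for illustration. The idea is only that tasks run strictly in order and a box gets ticked only after its validation passes.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Task:
    """One checklist item (hypothetical structure, not Droid's API)."""
    name: str
    run: Callable[[], None]       # performs the work
    validate: Callable[[], bool]  # returns True when the work checks out
    done: bool = False


def run_plan(tasks: List[Task]) -> List[Task]:
    """Run tasks in order; stop at the first failed validation."""
    for task in tasks:
        task.run()
        if not task.validate():
            break  # this task and every later one stay unchecked
        task.done = True
    return tasks


def render(tasks: List[Task]) -> str:
    """Format the plan as a text checklist."""
    return "\n".join(f"[{'x' if t.done else ' '}] {t.name}" for t in tasks)


state = {"files": 0}
plan = [
    Task("write file",
         lambda: state.update(files=state["files"] + 1),
         lambda: state["files"] == 1),
    Task("failing step", lambda: None, lambda: False),
    Task("never reached", lambda: state.update(files=99), lambda: True),
]
print(render(run_plan(plan)))
```

The third task never runs because the second one fails validation, which is the opposite of leaving half the list unchecked and carrying on anyway.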

Vibe coding taught me the basic stuff

Wise @trikcode

Vibe coding is for experienced software devs. Do not vibe code until you really know how to do the basic stuff.

Nathan retweeted

With vibe coding, you accidentally learn:

- how APIs connect everything.

- why your .env file matters.

- what localhost really means.

- why deployments break but local works.

- how auth actually works.

- what really happens after npm install.

- how backend logic flows.

- how your database is structured.

- why rate limits exist.

@Submorf superior to clicking and scrolling for the first “grok is it true” comment

Actually really useful feature.

Elon Musk @elonmusk

Fact check and ask questions about any post just by tapping the Grok logo in the upper left

@ChrisLaubAI The most noticeable problem with Gemini models is how poorly they use tools compared to other models

Gemini is now completely unusable

I was a massive fan for a long time

But it's gone so far downhill it's not even funny

It hallucinates results while ignoring instructions. Then, when you call it out and attempt to correct it, it will literally hallucinate more results and deliver them as if it had self-corrected.

Huge disappointment

I have been using Gemini-3.1-Pro for the last few days in Droid, and my conclusion on it and Flash is very different from what it used to be.

I really disliked the 2.5-Pro variant because it was incapable in Cursor. The Gemini CLI sucks, as you all know, and the models are unreliable outside of pure context crunching.

I think there are too many issues around the service layer, including how you pay for it and where you use it, and those issues have created a void around these models.

There are many highly intelligent people daily driving Codex/Claude, and they've shared enough about those models and where they shine/suck that people tend to have better experiences.

Gemini has been super consistent in Droid. It doesn't fall flat when calling tools, as it does with most providers outside of Cursor.

Its massive context is very useful for data crunching, as we know. Give it large datasets and let it build out plots and charts, recommend ways to refactor, etc.

The Flash version has been great as a Q&A model. It's very fast and works really well with summarize.sh as well as in the Google search AI section.

I think this model has tremendous potential to completely lead everything else. The writing style is the least cringe of any top lab.

Very little negative contrasting ("it's not x, it's y"), it uses more complex words, and it often seems to produce very few em-dashes.

I think this could be a staple.
