
and I got 4 kids. I still think that body count matters 😭

@NittyScottMC uh she actually sounds the same, she just code switching due to the space she's in lmfao. ik common sense not so common these days so had to elaborate.

Trump: We can't take care of daycare. We're a big country. We're fighting wars. It's not possible for us to take care of daycare, Medicaid, Medicare, all these things.

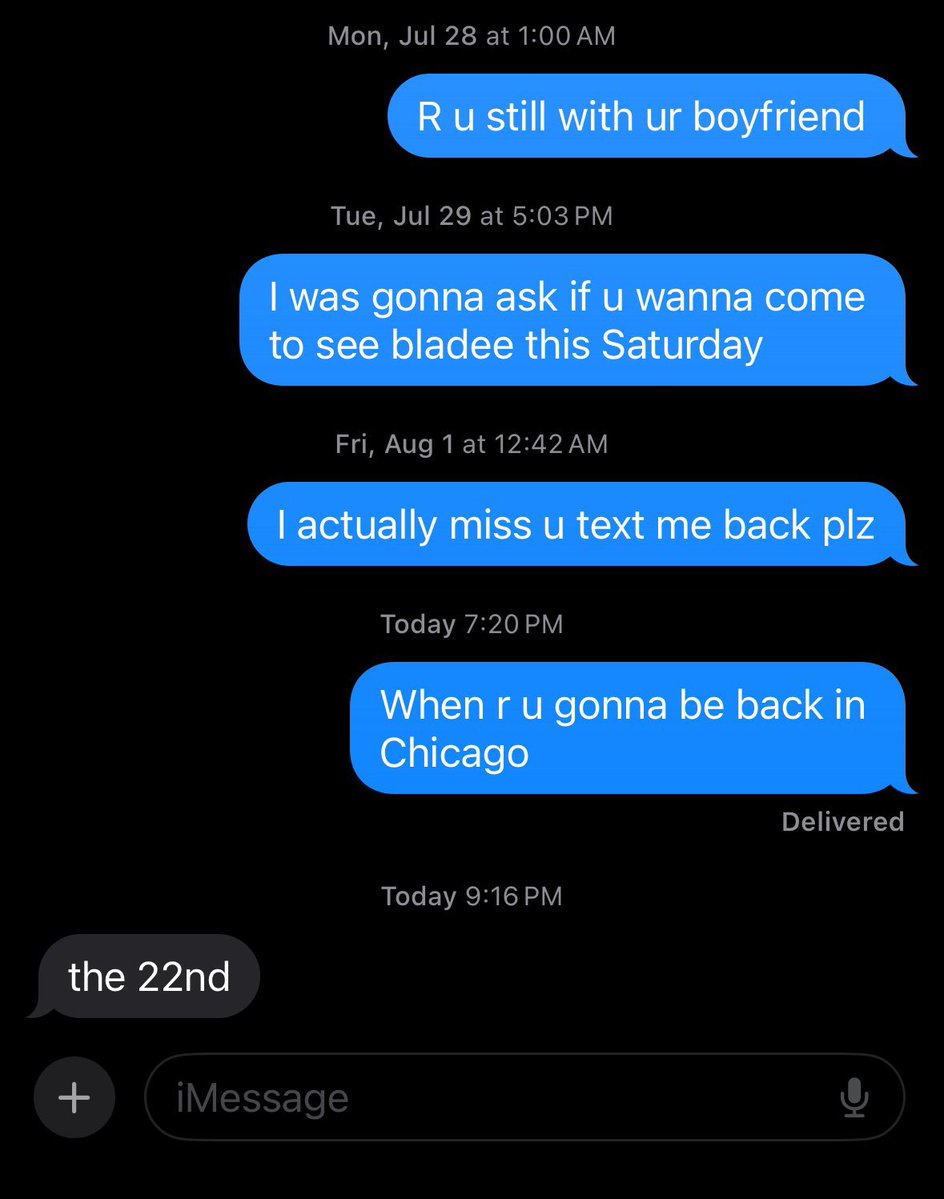

Relationships deserve this type of energy 💕😂🫶🏾

let latinas date black men thx

President Biden visits with fans while flying commercial. 📹: lattesbeforelife on TikTok.

slut shaming really tryna make a come back. i can’t stand you fake puritan, wanna be nun ass hos. leave us alone

They are eating her UP for this 😭😭😭

Be honest… does ANYONE actually use these words in real life? 🤣 Bamboozled, Flabbergasted, Discombobulated, Shenanigans, Cattywampus, Lollygag, Malarkey, Kerfuffle, Brouhaha, Nincompoop, Skedaddle, Tomfoolery, Flibbertigibbet, Pumpernickel

u ever got so freaky that u lowkey be ashamed of yourself? LMFAOOOOOOO